VI. Infinity: recursion, induction, infinite descent

Mathematical induction – i.e. proof by recurrence – is... imposed on us, because it is... the affirmation of a property of the mind itself.

Henri Poincaré (1854–1912)

Allez en avant, et la foi vous viendra. (Press on ahead, and understanding will follow.)

Jean le Rond d’Alembert (1717–1783)

Mathematics has been called “the science of infinity”. However, infinity is a slippery notion, and many of the techniques which are characteristic of modern mathematics were developed precisely to tame this slipperiness. This chapter introduces some of the relevant ideas and techniques.

There are aspects of the story of infinity in mathematics which we shall not address. For example, astronomers who study the night sky and the movements of the planets and stars soon note its immensity, and its apparently ‘fractal’ nature – where increasing the detail or magnification reveals more or less the same level of complexity on different scales. And it is hard then to avoid the question of whether the stellar universe is finite or infinite.

In the mental universe of mathematics, once counting, and the process of halving, become routinely iterative processes, questions about infinity and infinitesimals are almost inevitable. However, mathematics recognises the conceptual gulf between the finite and the infinite (or infinitesimal), and rejects the lazy use of “infinity” as a metaphor for what is simply “very large”. Large finite numbers are still numbers; and long finite sums are conceptually quite different from sums that “go on for ever”. Indeed, in the third century BC, Archimedes (c. 287–212 BC) wrote a small booklet called The Sand Reckoner, dedicated to King Gelon, in which he introduced the arithmetic of powers (even though the ancient Greeks had no convenient notation for writing such numbers), in order to demonstrate that – contrary to what some people had claimed – the number of grains of sand in the known universe must be finite (he derived an upper bound of approximately $8 \times 10^{63}$).

The influence wielded by ideas of infinity on mathematics has been profound, even if we now view some of these ideas as flights of fancy –

- from Zeno of Elea (c. 495 BC – c. 430 BC), who presented his paradoxes to highlight the dangers inherent in reasoning sloppily with infinity,

- through Giordano Bruno (1548–1600), who declared that there were infinitely many inhabited universes, and who was burned at the stake when he refused to retract this and other “heresies”,

- to Georg Cantor (1845–1918) whose groundbreaking work in developing a true “mathematics of infinity” was inextricably linked to his religious beliefs.

In contrast, we focus here on the delights of the mathematics, and in particular on how an initial doorway into “ideas of infinity” can be forged from careful reasoning with finite entities. Readers who would like to explore what we pass over in silence could do worse than to start with the essay on “infinity” in the MacTutor History of Mathematics archive:

http://www-history.mcs.st-and.ac.uk/HistTopics/Infinity.html.

The simplest infinite processes begin with recursion – a process where we repeat exactly the same operation over and over again (in principle, continuing for ever). For example, we may start with 0, and repeat the operation “add 1”, to generate the sequence:

$0, 1, 2, 3, 4, 5, 6, \ldots$

Or we may start with $2^0 = 1$ and repeat the operation “multiply by 2”, to generate:

$1, 2, 4, 8, 16, 32, 64, \ldots$

Or we may start with 1.000000 ⋯, and repeat the steps involved in “dividing by 7” to generate the infinite decimal for $\frac{1}{7}$:

$\frac{1}{7} = 0.142857142857142857\ldots$

We can then vary this idea of “recursion” by allowing each operation to be “essentially” (rather than exactly) the same, as when we define triangular numbers by “adding n” at the nth stage to generate the sequence:

$0, 1, 3, 6, 10, 15, 21, \ldots$

In other words, the sequence of triangular numbers is defined by a recurrence relation:

$T_0 = 0$ and $T_n = T_{n-1} + n$ when $n \geq 1$.

We can vary this idea further by allowing more complicated recurrence relations – such as that which defines the Fibonacci numbers:

$F_0 = 0$, $F_1 = 1$ and $F_n = F_{n-1} + F_{n-2}$ when $n \geq 2$.
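The recursive processes described above can be sketched in a few lines of Python. This is only an illustration: the helper names (`iterate`, `decimal_digits`, `triangular`, `fibonacci`, `first`) are invented here, and the starting values $T_0 = 0$, $F_0 = 0$, $F_1 = 1$ are assumptions about the intended indexing. Each generator "goes on for ever" in principle; we only ever inspect finitely many terms.

```python
from itertools import islice

def iterate(start, step):
    """Yield start, step(start), step(step(start)), ... without end."""
    x = start
    while True:
        yield x
        x = step(x)

def decimal_digits(numerator, denominator):
    """Yield the digits after the decimal point of numerator/denominator,
    repeating the same long-division step over and over."""
    remainder = numerator % denominator
    while True:
        remainder *= 10
        yield remainder // denominator
        remainder %= denominator

def triangular():
    """Assumed indexing: T_0 = 0 and T_n = T_{n-1} + n for n >= 1."""
    t, n = 0, 0
    while True:
        yield t
        n += 1
        t += n

def fibonacci():
    """Assumed indexing: F_0 = 0, F_1 = 1, F_n = F_{n-1} + F_{n-2}."""
    a, b = 0, 1
    while True:
        yield a
        a, b = b, a + b

def first(k, gen):
    """Return the first k terms of a (possibly endless) generator."""
    return list(islice(gen, k))
```

For instance, `first(6, iterate(1, lambda x: 2 * x))` lists the first six powers of 2, and `first(12, decimal_digits(1, 7))` lists the first twelve decimal digits of one seventh.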

All of these “images of infinity” hark back to the familiar counting numbers.

- We know how the counting numbers begin (with 0, or with 1); and

- we know that we can “add 1” over and over again to get ever larger counting numbers.

The intuition that this process is, in principle, never-ending (so is never actually completed), yet somehow manages to count all positive integers, is what Poincaré called a “property of the mind itself”: that is, the idea that we can define an infinite sequence, or process, or chain of deductions (involving digits, or numbers, or objects, or statements, or truths) by

- specifying how it begins, and by then

- specifying in a uniform way “how to construct the next term”, or “how to perform the next step”.

This idea is what lies behind “proof by mathematical induction”, where we prove that some assertion P(n) holds for all $n \geq 1$ – so demonstrating infinitely many separate statements at a single blow. The validity of this method of proof depends on a fundamental property of the positive integers, or of the counting sequence

$1, 2, 3, 4, 5, \ldots$

namely:

The Principle of Mathematical Induction: If a subset S of the positive integers

- contains the integer “1”, and has the property that

- whenever an integer k is in the set S, then the next integer $k + 1$ is always in S too,

then S contains all positive integers.

6.1. Proof by mathematical induction I

When students first meet “proof by induction”, it is often explained in a way that leaves them feeling distinctly uneasy, because it appears to break the fundamental taboo:

never assume what you are trying to prove.

This tends to leave the beginner in the position described by d'Alembert's quote at the start of the chapter: they may “press on” in the hope that “understanding will follow”, but a doubt often remains. So we encourage readers who have already met proof by induction to take a step back, and to try to understand afresh how it really works. This may require you to study the solutions (Section 6.10), and to be prepared to learn to write out proofs more carefully than, and rather differently from, what you are used to.

When we wish to prove a general result which involves a parameter n, where n can be any positive integer, we are really trying to prove infinitely many results all at once. If we tried to prove such a collection of results in turn, “one at a time”, not only would we never finish, we would scarcely get started (since completing the first million, or billion, cases leaves just as much undone as at the start). So our only hope is:

- to think of the sequence of assertions in a uniform way, as consisting of infinitely many different, but similar-looking, statements P(n), one for each n separately (with each statement depending on a particular n); and

- to recognise that the overall result to be proved is not just a single statement P(n), but the compound statement that “P(n) is true, for all $n \geq 1$”.

Once the result to be proved has been formulated in this way, we can

- use bare hands to check that the very first statement is true (usually P(1)); and

- try to find some way of demonstrating that,

– as soon as we know that “P(k) is true, for some (particular, but unspecified) $k \geq 1$”,

– we can prove in a uniform way that the next result P(k + 1) is then automatically true.

Having implemented the first of the two induction steps, we know that P(1) is true.

The second bullet point above then comes into play and assures us that (since we know that P(1) is true), P(2) must be true.

And once we know that P(2) is true, the second bullet point assures us that P(3) is also true.

And once we know that P(3) is true, the second bullet point assures us that P(4) is also true.

And so on for ever.

We can then conclude that the infinitely many statements in the sequence are all true – namely that:

“every statement P(n) is true”,

or that

“P(n) is true, for all $n \geq 1$.”

In other words, if we define S to be the set of positive integers n for which the statement P(n) is true, then S contains the element “1”, and whenever k is in S, so is $k + 1$; hence, by the Principle of Mathematical Induction we know that S contains all positive integers.

At this stage we should acknowledge an important didactical (rather than mathematical) ploy in our recommended layout here. It is important to underline the distinction between

(i) the individual statements P(n) which are the separate ingredients in the overall statement to be proved, namely:

“P(n) is true, for all $n \geq 1$”,

where infinitely many individual statements have been compressed into a single compound statement, and

(ii) the induction step, where we

– assume some particular P(k) is known to be true, and

– show that the next statement P(k + 1) is then automatically true.

To underline this distinction we consistently use a different “dummy variable” (namely “k”) in the latter case. This distinction is a psychological ploy rather than a logical necessity. However, we recommend that readers should imitate this distinction (at least initially).

6.2. ‘Mathematical induction’ and ‘scientific induction’

The idea of a “list that goes on for ever” arose in the sequence of powers of 4 back in Problem 16, where we asked

Do the two sequences arising from successive powers of 4:

- the leading digits: $4, 1, 6, 2, 1, 4, 1, 6, 2, 1, \ldots$

and

- the units digits: $4, 6, 4, 6, 4, 6, \ldots$

really “repeat for ever” as they seem to?
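A short experiment makes the question concrete. The sketch below (with illustrative function names) tabulates both digit sequences for powers of 4 and then searches for the first exponent at which the leading digits depart from the block 4, 1, 6, 2, 1. Such a search is itself only "scientific" evidence: finding no exception up to some limit proves nothing about what happens beyond it, whereas finding one settles the matter.

```python
def leading_digit(m):
    """First decimal digit of a positive integer."""
    return int(str(m)[0])

def units_digit(m):
    """Last decimal digit of a positive integer."""
    return m % 10

# The first ten terms of each sequence, for 4^1, 4^2, ..., 4^10.
leading = [leading_digit(4**n) for n in range(1, 11)]
units = [units_digit(4**n) for n in range(1, 11)]

def first_leading_break(limit=1000):
    """Smallest n <= limit where the leading digit of 4^n leaves the
    apparent repeating block 4, 1, 6, 2, 1 (or None if there is none)."""
    block = [4, 1, 6, 2, 1]
    for n in range(1, limit + 1):
        if leading_digit(4**n) != block[(n - 1) % 5]:
            return n
    return None

def first_units_break(limit=1000):
    """Same search for the units digits and the block 4, 6."""
    block = [4, 6]
    for n in range(1, limit + 1):
        if units_digit(4**n) != block[(n - 1) % 2]:
            return n
    return None
```

Calling `first_leading_break()` and `first_units_break()` shows that the two apparent patterns behave very differently.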

This example illustrates the most basic misconception that sometimes arises concerning mathematical induction – namely to confuse it with the kind of pattern spotting that is often called ‘scientific induction’.

In science (as in everyday life), we routinely infer that something that is observed to occur repeatedly, apparently without exception (such as the sun rising every morning; or the Pole star seeming to be fixed in the night sky) may be taken as a “fact”. This kind of “scientific induction” makes perfect sense when trying to understand the world around us – even though the inference is not warranted in a strictly logical sense.

Proof by mathematical induction is quite different. Admittedly, it often requires intelligent guesswork at a preliminary stage to make a guess that allows us to formulate precisely what it is that we should be trying to prove. But this initial guess is separate from the proof, which remains a strictly deductive construction. For example,

the fact that “1”, “1 + 3”, “1 + 3 + 5”, “1 + 3 + 5 + 7”, etc. all appear to be successive squares gives us an idea that perhaps the identity

P(n): $1 + 3 + 5 + \cdots + (2n - 1) = n^2$

is true, for all $n \geq 1$.

This guess is needed before we can start the proof by mathematical induction. But the process of guessing is not part of the proof. And until we construct the “proof by induction” (Problem 231), we cannot be sure that our guess is correct.

The danger of confusing ‘mathematical induction’ and ‘scientific induction’ may be highlighted to some extent if we consider the proof in Problem 76 above that “we can always construct ever larger prime numbers”, and contrast it with an observation (see Problem 228 below) that is often used in its place – even by authors who should know better.

In Problem 76 we gave a strict construction by mathematical induction:

- we first showed how to begin (with $p_1 = 2$ say);

- then we showed how, given any finite list of distinct prime numbers $p_1, p_2, \ldots, p_k$, it is always possible to construct a new prime $p_{k+1}$ (as the smallest prime number dividing $p_1 p_2 \cdots p_k + 1$).

This construction was very carefully worded, so as to be logically correct.

In contrast, one often finds lessons, books and websites that present the essential idea in the above proof, but “simplify” it into a form that encourages anti-mathematical “pattern-spotting” which is all-too-easily misconstrued. For example, some books present the sequence

$2 + 1,\ 2 \cdot 3 + 1,\ 2 \cdot 3 \cdot 5 + 1,\ 2 \cdot 3 \cdot 5 \cdot 7 + 1,\ 2 \cdot 3 \cdot 5 \cdot 7 \cdot 11 + 1, \ldots$

as a way of generating more and more primes.

Problem 228

(a) Are 3, 7, 31, 211 all prime?

(b) Is 2311 prime?

(c) Is 30031 prime?

We have already met two excellent historical examples of the dangers of plausible pattern-spotting in connection with Problem 118. There you proved that:

“if $2^n - 1$ is prime, then n must be prime.”

You then showed that $2^2 - 1$, $2^3 - 1$, $2^5 - 1$, $2^7 - 1$ are all prime, but that $2^{11} - 1$ is not. This underlines the need to avoid jumping to (possibly false) conclusions, and never to confuse a statement with its converse.

In the same problem you showed that:

“if $a^b + 1$ is to be prime and $a > 1$, then a must be even, and b must be a power of 2.”

You then looked at the simplest family of such candidate primes, namely the sequence of Fermat numbers $f_n = 2^{2^n} + 1$:

$f_0 = 3,\ f_1 = 5,\ f_2 = 17,\ f_3 = 257,\ f_4 = 65537,\ f_5 = 4294967297, \ldots$

It turned out that, although $f_0$, $f_1$, $f_2$, $f_3$, $f_4$ are all prime, and although Fermat (1601–1665) claimed (in a letter to Marin Mersenne (1588–1648)) that all Fermat numbers are prime, we have yet to discover a sixth Fermat prime!
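Both cautionary examples are easy to examine numerically. The sketch below assumes the standard definitions (Mersenne candidates $2^n - 1$ and Fermat numbers $2^{2^n} + 1$) and uses plain trial division; the function names are illustrative.

```python
def smallest_prime_factor(m):
    """Smallest prime dividing m (for m >= 2), found by trial division."""
    d = 2
    while d * d <= m:
        if m % d == 0:
            return d
        d += 1
    return m

def is_prime(m):
    return m >= 2 and smallest_prime_factor(m) == m

def mersenne(n):
    """The candidate 2^n - 1."""
    return 2**n - 1

def fermat(n):
    """The n-th Fermat number 2^(2^n) + 1."""
    return 2**(2**n) + 1

# Prime exponents n = 2, 3, 5, 7 give primes, but n = 11 does not.
mersenne_status = {n: is_prime(mersenne(n)) for n in (2, 3, 5, 7, 11)}

# The first five Fermat numbers are prime; the sixth has a small factor.
fermat_status = {n: is_prime(fermat(n)) for n in range(5)}
factor_of_f5 = smallest_prime_factor(fermat(5))
```

Trial division reaches the factor of `fermat(5)` after only a few hundred steps, which makes it all the more striking that the pattern was once believed to continue.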

There are times when a mathematician may appear to guess a general result on the basis of what looks like very modest evidence (such as noticing that it appears to be true in a few small cases). Such “informed guesses” are almost always rooted in other experience, or in some unnoticed feature of the particular situation, or in some striking analogy: that is, an apparent pattern strikes a chord for reasons that go way beyond the mere numbers. However those with less experience need to realise that apparent patterns or trends are often no more than numerical accidents.

Pell’s equation (John Pell (1611–1685)) provides some dramatic examples.

- If we evaluate the expression “$n^2 + 1$” for $n = 1, 2, 3, \ldots$, we may notice that the outputs never give a perfect square. And this is to be expected, since the next square after $n^2$ is

$(n + 1)^2 = n^2 + 2n + 1$,

and this is always greater than $n^2 + 1$.

- However, if we evaluate “$991n^2 + 1$” for $n = 1, 2, 3, \ldots$ we may observe that the outputs never seem to include a perfect square. But this time there is no obvious reason why this should be so – so we may anticipate that this is simply an accident of “small” numbers. And we should hesitate to change our view, even though this accident goes on happening for a very, very, very long time: the smallest value of n for which $991n^2 + 1$ gives rise to a perfect square is apparently

$n = 12\,055\,735\,790\,331\,359\,447\,442\,538\,767$.
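The contrast between the two bullet points can be tested directly with exact integer arithmetic. The sketch below assumes that the second expression is the classical example $991n^2 + 1$, and that the commonly quoted 29-digit value of n (stored here as `QUOTED_N`, an illustrative name) is the one intended; the code verifies that this n works, but makes no attempt to prove that it is the smallest.

```python
from math import isqrt

def is_square(m):
    """True when the non-negative integer m is a perfect square."""
    r = isqrt(m)
    return r * r == m

def squares_found(coefficient, limit):
    """All n in 1..limit for which coefficient * n^2 + 1 is a perfect square."""
    return [n for n in range(1, limit + 1)
            if is_square(coefficient * n * n + 1)]

# Assumed value: the commonly quoted n for which 991 n^2 + 1 is a square.
QUOTED_N = 12055735790331359447442538767

small_search_1 = squares_found(1, 10_000)      # the expression n^2 + 1
small_search_991 = squares_found(991, 10_000)  # the expression 991 n^2 + 1
quoted_value_works = is_square(991 * QUOTED_N**2 + 1)
```

Both small searches come back empty, yet for quite different reasons: for $n^2 + 1$ there is a proof that no example exists, while for $991n^2 + 1$ the search limit is simply far too small.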

6.3. Proof by mathematical induction II

Even where there is no confusion between mathematical induction and ‘scientific induction’, students often fail to appreciate the essence of “proof by induction”. Before illustrating this, we repeat the basic structure of such a proof.

A mathematical result, or formula, often involves a parameter n, where n can be any positive integer. In such cases, what is presented as a single result, or formula, is a short way of writing an infinite family of results. The proof that such a result is correct therefore requires us to prove infinitely many results at once. We repeat that our only hope of achieving such a mind-boggling feat is

- to formulate the stated result for each value of n separately: that is, as a statement P(n) which depends on n;

- then to use bare hands to check the “beginning” – namely that the simplest case (usually P(1)) is true;

- finally to find some way of demonstrating that,

– as soon as we know that P(k) is true, for some (unknown) $k \geq 1$,

– we can prove that the next result P(k + 1) is then automatically true.

We can then conclude that

“every statement P(n) is true”,

or that

“P(n) is true, for all $n \geq 1$”.

Problem 229 Prove the statement:

“$5^{2n+2} - 24n - 25$ is divisible by 576, for all $n \geq 1$”.

When trying to construct proofs in private, one is free to write anything that helps as ‘rough work’. But the intended thrust of Problem 229 is two-fold:

to introduce the habit of distinguishing clearly between

(i) the statement P(n) for a particular n, and

(ii) the statement to be proved – namely “P(n) is true, for all $n \geq 1$”; and

to draw attention to the “induction step” (i.e. the third bullet point above), where

(i) we assume that some unspecified P(k) is known to be true, and

(ii) seek to prove that the next statement P(k + 1) must then be true.

The central lesson in completing the “induction step” is to recognize that:

to prove that P(k + 1) is true, one has to start by looking at what P(k + 1) says.

In Problem 229 P(k + 1) says that

“$5^{2(k+1)+2} - 24(k+1) - 25$ is divisible by 576”.

Hence one has to start the induction step with the relevant expression

$5^{2(k+1)+2} - 24(k+1) - 25$

and look for some way to rearrange this into a form where one can use P(k) (which we assume is already known to be true, and so are free to use).

It is in general a false strategy to work the other way round – by “starting with P(k), and then fiddling with it to try to get P(k + 1)”. (This strategy can be made to work in the simplest cases; but it does not work in general, and so is a bad habit to get into.) So the induction step should always start with the hypothesis of P(k + 1).
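Before committing a rearrangement to paper, it can help to test it numerically. The sketch below takes the expression in Problem 229 to be $5^{2n+2} - 24n - 25$ (written `E(n)`, an illustrative name) and checks one candidate rearrangement of the P(k + 1) expression, namely $E(k+1) = 25 \cdot E(k) + 576(k+1)$. A check of this kind only suggests that the identity is worth proving; the algebra that establishes it for every k still has to be written out.

```python
def E(n):
    """The expression 5^(2n+2) - 24n - 25 from Problem 229."""
    return 5**(2 * n + 2) - 24 * n - 25

def step_identity_holds(k):
    """Candidate rearrangement: start from the P(k+1) expression and
    rewrite it in terms of the P(k) expression plus a multiple of 576."""
    return E(k + 1) == 25 * E(k) + 576 * (k + 1)

base_case = E(1)  # the 'bare hands' check of P(1)
identity_checked = all(step_identity_holds(k) for k in range(1, 200))
divisible_checked = all(E(n) % 576 == 0 for n in range(1, 200))
```

Note the direction of the check: it starts from `E(k + 1)` and works back towards `E(k)`, exactly as the induction step is meant to do.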

The next problem invites you to prove the formula for the sum of the angles in any polygon. The result is well–known; yet we are fairly sure that the reader will never have seen a correct proof. So the intention here is for you to recognise the basic character of the result, to identify the flaws in what you may until now have accepted as a proof, and to try to find some way of producing a general proof.

Problem 230 Prove by induction the statement:

“for each $n \geq 3$, the angles of any n-gon in the plane have sum equal to $(n - 2)\pi$ radians.”

The formulation certainly involves a parameter $n \geq 3$; so you clearly need to begin by formulating the statement P(n). For the proof to have a chance of working, finding the right formulation involves a modest twist! So if you get stuck, it may be worth checking the first couple of lines of the solution.

No matter how P(n) is formulated, you should certainly know how to prove the statement P(3) (essentially the formula for the sum of the angles in a triangle). But it is far from obvious how to prove the “induction step”:

“if we know that P(k) is true for some particular $k \geq 3$, then P(k + 1) must also be true”.

When tackling the induction step, we certainly cannot start with P(k)! The statement P(k + 1) says something about polygons with k + 1 sides: and there is no way to obtain a typical (k + 1)-gon by fiddling with some statement about polygons with k sides. (If you start with a k-gon, you can of course draw a triangle on one side to get a (k + 1)-gon; but this is a very special construction, and there is no easy way of knowing whether all (k + 1)-gons can be obtained in this way from some k-gon.) The whole thrust of mathematical induction is that we must always start the induction step by thinking about the hypothesis of P(k + 1) – that is in this case, by considering an arbitrary (k + 1)-gon and then finding some guaranteed way of “reducing” it in order to make use of P(k).

The next two problems invite you to prove some classical algebraic identities. Most of these may be familiar. The challenge here is to think carefully about the way you lay out your induction proof, to learn from the examples above, and (later) to learn from the detailed solutions provided.

Problem 231 Prove by induction the statement:

“$1 + 3 + 5 + \cdots + (2n - 1) = n^2$ holds, for all $n \geq 1$”

The summation in Problem 231 was known to the ancient Greeks. The mystical Pythagorean tradition (which flourished in the centuries after Pythagoras) explored the character of integers through the ‘spatial figures’ which they formed. For example, if we arrange each successive integer as a new line of dots in the plane, then the sum “$1 + 2 + 3 + \cdots + n$” can be seen to represent a triangular number. Similarly, if we arrange each odd number $2k - 1$ in the sum “$1 + 3 + 5 + \cdots + (2n - 1)$” as a “k-by-k reverse L-shape”, or gnomon (a word which we still use to refer to the L-shaped piece that casts the shadow on a sundial), then the accumulated L-shapes build up an n by n square of dots – the “1” being the dot in the top left hand corner, the “3” being the reverse L-shape of 3 dots which make this initial “1” into a 2 by 2 square, the “5” being the reverse L-shape of 5 dots which makes this 2 by 2 square into a 3 by 3 square, and so on. Hence the sum “$1 + 3 + 5 + \cdots + (2n - 1)$” can be seen to represent a square number.

There is much to be said for such geometrical illustrations; but there is no escape from the fact that they hide behind an ellipsis (the three dots which we inserted in the sum between “5” and “$2n - 1$”, which were then summarised when arranging the reverse L-shapes by ending with the words “and so on”). Proof by mathematical induction, and its application in Problem 231, constitute a formal way of avoiding both the appeal to pictures, and the hidden ellipsis.
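The gnomon picture can be modelled with sets of grid points. In the sketch below (coordinates and function names are illustrative choices), the k-th gnomon consists of the points in row $k - 1$ or column $k - 1$ that lie within the k by k square. The code confirms, for the finitely many cases it tries, that the k-th gnomon has $2k - 1$ dots and that the first n gnomons tile an n by n square; like the picture, it cannot cover all n at once.

```python
def gnomon(k):
    """Grid points of the k-th reverse L-shape: the last column of the
    k-by-k square together with the rest of its last row."""
    last_column = [(i, k - 1) for i in range(k)]
    last_row = [(k - 1, j) for j in range(k - 1)]
    return last_column + last_row

def square_from_gnomons(n):
    """Accumulate the first n gnomons into one set of grid points."""
    dots = set()
    for k in range(1, n + 1):
        dots.update(gnomon(k))
    return dots

def full_square(n):
    """All grid points of an n-by-n square."""
    return {(i, j) for i in range(n) for j in range(n)}

sizes = [len(gnomon(k)) for k in range(1, 8)]
tiles_ok = all(square_from_gnomons(n) == full_square(n) for n in range(1, 30))
```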

Problem 232 The sequence

is defined by its first two terms , and by the recurrence relation:

.

(a) Guess a closed formula for the nth term $u_n$.

(b) Prove by induction that your guess in (a) is correct for all .

Problem 233 The sequence of Fibonacci numbers

$0, 1, 1, 2, 3, 5, 8, 13, \ldots$

is defined by its first two terms $F_0 = 0$, $F_1 = 1$, and by the recurrence relation:

$F_n = F_{n-1} + F_{n-2}$ when $n \geq 2$.

Prove by induction that, for all ,

Problem 234 Prove by induction that

is divisible by 19, for all .

Problem 235 Use mathematical induction to prove that each of these identities holds, for all :

(a)

(b)

(c)

(d)

(e) .

Problem 236 Prove by induction the statement:

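“$1 + 2 + 3 + \cdots + n = \frac{n(n+1)}{2}$, for all $n \geq 1$”.

We now know that, for all $n \geq 1$:

$1 + 1 + 1 + \cdots + 1$ (n terms) $= n$.

And if we sum these “outputs” (that is, the first n natural numbers), we get the nth triangular number:

$1 + 2 + 3 + \cdots + n = \frac{n(n+1)}{2} = T_n$.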

The next problem invites you to find the sum of these “outputs”: that is, to find the sum of the first n triangular numbers.

Problem 237

(a) Experiment and guess a formula for the sum of the first n triangular numbers:

$T_1 + T_2 + T_3 + \cdots + T_n$.

(b) Prove by induction that your guessed formula is correct for all $n \geq 1$.
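The "experiment" in part (a) is easy to automate. The sketch below (illustrative names; it assumes $T_k = 1 + 2 + \cdots + k$) tabulates the running totals of the triangular numbers, and then tabulates their successive differences, which is often a useful way to guess the degree of a polynomial formula. It deliberately stops short of stating the formula.

```python
from itertools import accumulate

def triangular_numbers(n):
    """T_1, ..., T_n as running totals of 1, 2, ..., n."""
    return list(accumulate(range(1, n + 1)))

def sums_of_triangular_numbers(n):
    """T_1, T_1 + T_2, ..., T_1 + ... + T_n."""
    return list(accumulate(triangular_numbers(n)))

def differences(values):
    """Successive differences of a list of numbers."""
    return [b - a for a, b in zip(values, values[1:])]

totals = sums_of_triangular_numbers(8)
first_diff = differences(totals)        # recovers triangular numbers
second_diff = differences(first_diff)   # recovers the counting numbers
third_diff = differences(second_diff)   # constant
```

A constant third difference suggests looking for a cubic polynomial in n, which is the idea behind the method of undetermined coefficients.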

We now know closed formulae for

“$1 + 2 + 3 + \cdots + n$”

and for

“$T_1 + T_2 + T_3 + \cdots + T_n$”.

The next problem hints firstly that these identities are part of something more general, and secondly that these results allow us to find identities for the sum of the first n squares:

$1^2 + 2^2 + 3^2 + \cdots + n^2$,

for the first n cubes:

$1^3 + 2^3 + 3^3 + \cdots + n^3$,

and so on.

Problem 238

(a) Note that

.

Use this and the result of Problem 237 to derive a formula for the sum:

$1^2 + 2^2 + 3^2 + \cdots + n^2$.

(b) Guess and prove a formula for the sum

.

Use this to derive a closed formula for the sum:

$1^3 + 2^3 + 3^3 + \cdots + n^3$.

It may take a bit of effort to digest the statement in the next problem. It extends the idea behind the “method of undetermined coefficients” that is discussed in Note 2 to the solution of Problem 237(a).

Problem 239

(a) Given distinct real numbers

find all possible polynomials of degree n which satisfy

for some specified number b.

(b) For each , prove the following statement:

Given two labelled sets of real numbers

,

and

,

where the $a_i$ are all distinct (but the $b_i$ need not be), there exists a polynomial $f_n$ of degree n, such that

We end this subsection with a mixed bag of three rather different induction problems. In the first problem the induction step involves a simple construction of a kind we will meet later.

Problem 240 A country has only 3 cent and 4 cent coins.

(a) Experiment to decide what seems to be the largest amount, N cents, that cannot be paid directly (without receiving change).

(b) Prove by induction that “n cents can be paid directly for each $n > N$”.
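Both parts of the coin problem lend themselves to a small experiment. The sketch below first searches by brute force for amounts that cannot be paid, and then implements one possible "simple construction" for the induction step: turn a payment of n cents into a payment of n + 1 cents either by swapping a 3 cent coin for a 4 cent coin, or (when no 3 cent coin is present) by swapping two 4 cent coins for three 3 cent coins. The function names and the choice of construction are illustrative, not necessarily those of the intended solution.

```python
def can_pay(n):
    """True if n = 3a + 4b for some integers a, b >= 0."""
    return any((n - 4 * b) % 3 == 0 for b in range(n // 4 + 1))

def unpayable(limit):
    """All amounts from 1 to limit that cannot be paid directly."""
    return [n for n in range(1, limit + 1) if not can_pay(n)]

def next_payment(threes, fours):
    """Given coins paying n cents, return coins paying n + 1 cents."""
    if threes >= 1:
        return threes - 1, fours + 1   # replace one 3 by one 4
    return threes + 3, fours - 2       # replace two 4s by three 3s

def payments_from(start_threes, start_fours, count):
    """Apply next_payment repeatedly, recording (amount, threes, fours)."""
    threes, fours = start_threes, start_fours
    out = []
    for _ in range(count):
        out.append((3 * threes + 4 * fours, threes, fours))
        threes, fours = next_payment(threes, fours)
    return out

chain = payments_from(2, 0, 500)   # begin with 6 = 3 + 3
```

The second branch of `next_payment` needs at least two 4 cent coins; part of the induction proof is to explain why that is guaranteed once the amount is large enough.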

Problem 241

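(a) Solve the equation $z + \frac{1}{z} = 1$. Calculate $z^2$, and check that $z^2 + \frac{1}{z^2}$ is also an integer.

(b) Solve the equation $z + \frac{1}{z} = 2$. Calculate $z^2$, and check that $z^2 + \frac{1}{z^2}$ is also an integer.

(c) Solve the equation $z + \frac{1}{z} = 3$. Calculate $z^2$, and check that $z^2 + \frac{1}{z^2}$ is also an integer.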

(d) Solve the equation $z + \frac{1}{z} = k$, where k is an integer. Calculate $z^2$, and check that $z^2 + \frac{1}{z^2}$ is also an integer.

(e) Prove that if a number z has the property that $z + \frac{1}{z}$ is an integer, then $z^n + \frac{1}{z^n}$ is also an integer for each $n \geq 1$.
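Assuming the quantity in this problem is $z + \frac{1}{z}$, the integrality in part (e) can be explored through the identity $\left(z + \frac{1}{z}\right)\left(z^n + \frac{1}{z^n}\right) = \left(z^{n+1} + \frac{1}{z^{n+1}}\right) + \left(z^{n-1} + \frac{1}{z^{n-1}}\right)$. The sketch below (illustrative names) uses this identity as a recurrence with integer inputs, and compares the result against a direct floating-point evaluation for one real solution z. Note that the recurrence looks back two steps, which is worth bearing in mind when setting up the induction.

```python
from math import sqrt, isclose

def power_sums(k, count):
    """s_n = z^n + 1/z^n for n = 0..count-1, given s_1 = k, using
    s_0 = 2 and s_{n+1} = k * s_n - s_{n-1} (integers throughout)."""
    s = [2, k]
    while len(s) < count:
        s.append(k * s[-1] - s[-2])
    return s[:count]

def direct_power_sums(k, count):
    """Floating-point check using a real root of z + 1/z = k (needs |k| >= 2)."""
    z = (k + sqrt(k * k - 4)) / 2
    return [z**n + z**(-n) for n in range(count)]

exact = power_sums(3, 10)
approx = direct_power_sums(3, 10)
agree = all(isclose(a, b, rel_tol=1e-9) for a, b in zip(exact, approx))
```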

Problem 242 Let p be any prime number. Use induction to prove:

“$n^p - n$ is divisible by p for all $n \geq 1$”.

6.4. Infinite geometric series

Elementary mathematics is predominantly about equations and identities. But it is often impossible to capture important mathematical relations in the form of exact equations. This is one reason why inequalities become more central as we progress; another reason is because inequalities allow us to make precise statements about certain infinite processes.

One of the simplest infinite processes arises in the formula for the “sum” of an infinite geometric series:

$1 + r + r^2 + r^3 + \cdots + r^n + \cdots$ (for ever).

Despite the use of the familiar-looking “+” signs, this can be no ordinary addition. Ordinary addition is defined for two summands; and by repeating the process, we can add three summands (thanks in part to the associative law of addition). We can then add four, or any finite number of summands. But this does not allow us to “add” infinitely many terms as in the above sum. To get round this we combine ordinary addition (of finitely many terms) and simple inequalities to find a new way of giving a meaning to the above “endless sum”. In Problem 116 you used the factorisation

$r^{n+1} - 1 = (r - 1)(r^n + r^{n-1} + \cdots + r^2 + r + 1)$

to derive the closed formula:

$1 + r + r^2 + r^3 + \cdots + r^n = \frac{1 - r^{n+1}}{1 - r}$.

This formula for the sum of a finite geometric series can be rewritten in the form

$1 + r + r^2 + r^3 + \cdots + r^n = \frac{1}{1 - r} - \frac{r^{n+1}}{1 - r}$.

At first sight, this may not look like a clever move! However, it separates the part that is independent of n from the part on the RHS that depends on n; and it allows us to see how the second part behaves as n gets large:

when $|r| < 1$, successive powers of r get smaller and smaller and converge rapidly towards 0,

so the above form of the identity may be interpreted as having the form:

$1 + r + r^2 + r^3 + \cdots + r^n = \frac{1}{1 - r} -$ (an “error term”).

Moreover if $|r| < 1$, then the “error term” converges towards 0 as $n \to \infty$. In particular, if $1 > r > 0$, the error term is always positive, so we have proved, for all $n \geq 1$:

$1 + r + r^2 + r^3 + \cdots + r^n < \frac{1}{1 - r}$

and

the difference between the two sides tends rapidly to 0 as $n \to \infty$.

We then make the natural (but bold) step to interpret this, when $|r| < 1$, as offering a new definition which explains precisely what is meant by the endless sum

$1 + r + r^2 + r^3 + \cdots$ (for ever),

declaring that, when $|r| < 1$,

$1 + r + r^2 + r^3 + \cdots$ (for ever) $= \frac{1}{1 - r}$.

More generally, if we multiply every term by a, we see that

$a + ar + ar^2 + ar^3 + \cdots$ (for ever) $= \frac{a}{1 - r}$.
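The separation into a fixed part and an "error term" can be watched in exact arithmetic. The sketch below uses Python's `fractions.Fraction` so that no rounding intervenes; the function names are illustrative, and the formulas assumed are the standard ones, with finite sum $1 + r + \cdots + r^n$, fixed part $\frac{1}{1-r}$ and error term $\frac{r^{n+1}}{1-r}$.

```python
from fractions import Fraction

def partial_sum(r, n):
    """1 + r + r^2 + ... + r^n, added term by term."""
    return sum(r**k for k in range(n + 1))

def limit_value(r):
    """The part of the closed formula that does not depend on n."""
    return 1 / (1 - r)

def error_term(r, n):
    """The part of the closed formula that depends on n."""
    return r**(n + 1) / (1 - r)

r = Fraction(1, 3)
gaps = [limit_value(r) - partial_sum(r, n) for n in range(10)]
errors = [error_term(r, n) for n in range(10)]
```

With `r = Fraction(1, 3)` the lists `gaps` and `errors` coincide term by term, are positive, and shrink by a factor of 3 at each step. Trying a negative ratio such as `Fraction(-1, 2)` shows the error term alternating in sign while still tending to 0.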

Problem 243 Interpret the recurring decimal 0.037037037 ··· (for ever) as an infinite geometric series, and hence find its value as a fraction.

Problem 244 Interpret the following endless processes as infinite geometric series.

(a) A square cake is cut into four quarters, with two perpendicular cuts through the centre, parallel to the sides. Three people receive one quarter each - leaving a smaller square piece of cake. This smaller piece is then cut in the same way into four quarters, and each person receives one (even smaller) piece - leaving an even smaller residual square piece, which is then cut in the same way. And so on for ever. What fraction of the original cake does each person receive as a result of this endless process?

(b) I give you a whole cake. Half a minute later, you give me half the cake back. One quarter of a minute later, I return one quarter of the cake to you. One eighth of a minute later you return one eighth of the cake to me. And so on. Adding the successive time intervals, we see that

$\frac{1}{2} + \frac{1}{4} + \frac{1}{8} + \cdots$ (for ever) = 1,

so the whole process is completed in exactly 1 minute. How much of the cake do I have at the end, and how much do you have?

Problem 245 When John von Neumann (1903-1957) was seriously ill in hospital, a visitor tried (rather insensitively) to distract him with the following elementary mathematics problem.

Have you heard the one about the two trains and the fly? Two trains are on a collision course on the same track, each travelling at 30 km/h. A super-fly starts on Train A when the trains are 120 km apart, and flies at a constant speed of 50 km/h - from Train A to Train B, then back to Train A, and so on. Eventually the two trains collide and the fly is squashed. How far did the fly travel before this sad outcome?
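One way to see the geometric series hidden in this story is to compute the fly's journey leg by leg. The sketch below (illustrative names, exact arithmetic with `Fraction`) uses the figures given in the problem: trains at 30 km/h each, starting 120 km apart, and a fly at 50 km/h. On each leg the fly and the oncoming train close the current gap at their combined speed, while the gap between the trains shrinks at twice the train speed.

```python
from fractions import Fraction

def fly_legs(gap=Fraction(120), train=Fraction(30), fly=Fraction(50), legs=10):
    """Distances flown on the first few legs of the fly's journey."""
    distances = []
    for _ in range(legs):
        time = gap / (fly + train)   # fly meets the oncoming train
        distances.append(fly * time)
        gap -= 2 * train * time      # both trains moved during this leg
    return distances

def total_by_timing(gap=Fraction(120), train=Fraction(30), fly=Fraction(50)):
    """The shortcut: time until collision, multiplied by the fly's speed."""
    return gap / (2 * train) * fly

legs = fly_legs()
ratios = [b / a for a, b in zip(legs, legs[1:])]
```

The list `ratios` is constant, so the legs form a geometric series; its partial sums approach the value returned by `total_by_timing()`.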

6.5. Some classical inequalities

The fact that our formula for the sum of a geometric series gives us an exact sum is very unusual - and hence very precious. For almost all other infinite series - no matter how natural, or beautiful, they may seem - you can be fairly sure that there is no obvious exact formula for the value of the sum. Hence in those cases where we happen to know the exact value, you may infer that it took the best efforts of some of the finest mathematical minds to discover what we know.

One way in which we can make a little progress in estimating the value of an infinite series is to obtain an inequality by comparing the given sum with a geometric series.

Problem 246

(a)(i) Explain why

,

so

.

(ii) Explain why are all , so

(b) Use part (a) to prove that

, for all .

(c) Conclude that the endless sum

$\frac{1}{1^2} + \frac{1}{2^2} + \frac{1}{3^2} + \cdots + \frac{1}{n^2} + \cdots$ (for ever)

has a definite value, and that this value lies somewhere between and 2.
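The behaviour claimed here can be observed numerically, on the assumption that the series in question is the sum of the reciprocals of the squares. The sketch below (illustrative names) computes partial sums exactly for small n, checks that they increase while staying below 2, and compares a long floating-point partial sum with $\frac{\pi^2}{6}$, the value found by Euler. The computation illustrates the inequality; it does not replace the comparison argument asked for in the problem.

```python
from fractions import Fraction
from math import pi

def partial_sum_exact(n):
    """1/1^2 + 1/2^2 + ... + 1/n^2 as an exact fraction."""
    return sum(Fraction(1, k * k) for k in range(1, n + 1))

def partial_sum_float(n):
    """The same partial sum in floating point, adding small terms first."""
    return sum(1.0 / (k * k) for k in range(n, 0, -1))

exact_sums = [partial_sum_exact(n) for n in range(1, 60)]
increasing = all(a < b for a, b in zip(exact_sums, exact_sums[1:]))
bounded = all(s < 2 for s in exact_sums)
gap_to_euler = pi**2 / 6 - partial_sum_float(100_000)
```

The remaining gap after 100,000 terms is still about one part in 100,000, which shows how slowly this series converges compared with a geometric series.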

The next problem presents a rather different way of deriving a similar inequality. Once the relevant inequality has been guessed, or given (see Problem 247(a) and (b)), the proof by mathematical induction is often relatively straightforward. And after a little thought about Problem 246, it should be clear that much of the inaccuracy in the general inequality arises from the rather poor approximations made for the first few terms (when n = 1, when n = 2, when n = 3, etc.); hence by keeping the first few terms as they are, and only approximating for $n \geq 2$, or $n \geq 3$, or $n \geq 4$, we can often prove a sharper result.

Problem 247

(a) Prove by induction that

, for all .

(b) Prove by induction that

for all .

The infinite sum

$\frac{1}{1^2} + \frac{1}{2^2} + \frac{1}{3^2} + \cdots + \frac{1}{n^2} + \cdots$ (for ever)

is a historical classic, and has many instructive stories to tell. Recall that, in Problems 54, 62, 63, 236, 237, 238 you found closed formulae for the sums

and for the sums

.

Each of these expressions has a “natural” feel to it, and invites us to believe that there must be an equally natural compact answer representing the sum. In Problem 235 you took this idea one step further by finding a beautiful closed expression for the sum

$\frac{1}{1 \cdot 2} + \frac{1}{2 \cdot 3} + \frac{1}{3 \cdot 4} + \cdots + \frac{1}{n(n+1)}$.

When we began to consider infinite series, we found the elegant closed formula

$1 + r + r^2 + r^3 + \cdots + r^n = \frac{1}{1 - r} - \frac{r^{n+1}}{1 - r}$.

We then observed that the final term on the RHS could be viewed as an “error term”, indicating the amount by which the LHS differs from $\frac{1}{1 - r}$, and noticed that, for any given value of r between −1 and +1, this error term “tends towards 0 as the power n increases”. We interpreted this as indicating that one could assign a value to the endless sum

$1 + r + r^2 + r^3 + \cdots$ (for ever) $= \frac{1}{1 - r}$.

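In the same way, in the elegant closed formula

$\frac{1}{1 \cdot 2} + \frac{1}{2 \cdot 3} + \frac{1}{3 \cdot 4} + \cdots + \frac{1}{n(n+1)} = 1 - \frac{1}{n+1}$

the final term on the RHS indicates the amount by which the finite sum on the LHS differs from 1; and since this “error term” tends towards 0 as n increases, we may assign a value to the endless sum

$\frac{1}{1 \cdot 2} + \frac{1}{2 \cdot 3} + \frac{1}{3 \cdot 4} + \cdots$ (for ever) = 1.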

It is therefore natural to ask whether other infinite series, such as

$\frac{1}{1^2} + \frac{1}{2^2} + \frac{1}{3^2} + \cdots + \frac{1}{n^2} + \cdots$ (for ever)

may also be assigned some natural finite value. And since the series is purely numerical (without any variable parameters, such as the “r” in the geometric series formula), this answer should be a strictly numerical answer. And it should be exact - though all we have managed to prove so far (in Problems 246 and 247) is that this numerical answer lies somewhere between and 1.68.

This question arose naturally in the middle of the seventeenth century, when mathematicians were beginning to explore all sorts of infinite series (or “sums that go on for ever”). With a little more work in the spirit of Problems 246 and 247 one could find a much more accurate approximate value. But what is wanted is an exact expression, not an unenlightening decimal approximation. This aspiration has a serious mathematical basis, and is not just some purist preference for elegance. The actual decimal value is very close to

$1.644934\ldots$.

But this conveys no structural information. One is left with no hint as to why the sum has this value. In contrast, the eventual form of the exact expression suggests connections whose significance remains of interest to this day.

The greatest minds of the seventeenth and early eighteenth century tried to find an exact value for the infinite sum - and failed. The problem became known as the Basel problem (after Jakob Bernoulli (1654-1705) who popularised the problem in 1689 - one of several members of the Bernoulli family who were all associated with the University in Basel). The problem was finally solved in 1735 - in truly breathtaking style - by the young Leonhard Euler (1707-1783) (who was at the time also in Basel). The answer

$\frac{\pi^2}{6}$

illustrates the final sentence of the preceding paragraph in unexpected ways, which we are still trying to understand.

In the next problem you are invited to apply similar ideas to an even more important series. Part (a) provides a relatively crude first analysis. Part (b) attacks the same question; but it does so using algebra and induction (rather than the formula for the sum of a geometric series) in a way that is then further refined in part (c).

Problem 248

(a)(i) Choose a suitable r and prove that

.

(ii) Conclude that

for every ,

and hence that the endless sum

(for ever)

can be assigned a value “e” satisfying .

(b) (i) Prove by induction that

, for all .

(ii) Use part (i) to conclude that the endless sum

(for ever)

can be assigned a definite value “e”, and that this value lies somewhere between 2.5 and 3.

(c) (It may help to read the Note at the start of the solution to part (c) before attempting parts (c), (d).)

(i) Prove by induction that

for all .

(ii) Use part (i) to conclude that the endless sum

(for ever)

can be assigned a definite value “e”, and that this value lies somewhere between 2.6 and 2.75.

(d) (i) Prove by induction that

.

(ii) Use part (i) to conclude that the endless sum

(for ever)

can be assigned a definite value “e”, and that this value lies somewhere between 2.708 and 2.7222... (forever).

We end this section with one more inequality in the spirit of this section, and two rather different inequalities whose significance will become clear later.

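Problem 249 Prove by induction that

$$\frac{1}{\sqrt{1}} + \frac{1}{\sqrt{2}} + \frac{1}{\sqrt{3}} + \cdots + \frac{1}{\sqrt{n}} \geq \sqrt{n}, \quad \text{for all } n \geq 1.$$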

Problem 250 Let a, b be real numbers such that , and . Prove by induction that

, for all .

Problem 251 Let x be any real number . Prove by induction that

, for all

6.6. The harmonic series

The great foundation of mathematics is the principle of contradiction, or of identity, that is to say that a statement cannot be true and false at the same time, and that thus A is A, and cannot be not A. And this single principle is enough to prove the whole of arithmetic and the whole of geometry, that is to say all mathematical principles.

Gottfried W. Leibniz (1646-1716)

We have seen how some infinite series, or sums that go on for ever, can be assigned a finite value for their sum:

We say that these series converge (meaning that they can be assigned a finite value).

This section is concerned with another very natural series, the so-called harmonic series

$$\frac{1}{1} + \frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \cdots .$$

It is not entirely clear why this is called the harmonic series. The natural overtones that arise in connection with plucking a stretched string (as with a guitar or a harp) have wavelengths that are $\frac{1}{2}$ the basic wavelength, or $\frac{1}{3}$ of the basic wavelength, and so on. It is also true that, just as each term of an arithmetic series is the arithmetic mean of its two neighbours, and each term of a geometric series is the geometric mean of its two neighbours, so each term of the harmonic series after the first is equal to the harmonic mean (see Problems 85., 89.) of its two neighbours:

$$\frac{1}{k} = \frac{2}{\left(\frac{1}{k-1}\right)^{-1} + \left(\frac{1}{k+1}\right)^{-1}}.$$

Unlike the first two series above, there is no obvious closed formula for the finite sum

$$\frac{1}{1} + \frac{1}{2} + \frac{1}{3} + \cdots + \frac{1}{n}.$$

Certainly the sequence of successive sums

$$1,\ \frac{3}{2},\ \frac{11}{6},\ \frac{25}{12},\ \frac{137}{60},\ \ldots$$

does not suggest any general pattern.

Problem 252 Suppose we denote by S the “value” of the endless sum

$$\frac{1}{1} + \frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \cdots .$$

(i) Write out the endless sum corresponding to “$\frac{1}{2}S$”.

(ii) Remove the terms of this endless sum from the endless sum S, to obtain another endless sum corresponding to “$S - \frac{1}{2}S = \frac{1}{2}S$”.

(iii) Compare the first term of the series in (i) (namely $\frac{1}{2}$) with the first term in the series in (ii) (namely 1); compare the second term in the series in (i) with the second term in the series in (ii); and so on. What do you notice?

The Leibniz quotation above emphasises that the reliability of mathematics stems from a single principle – namely the refusal to tolerate a contradiction. We have already made explicit use of this principle from time to time (see, for example, the solution to Problem 125.). The message is simple: whenever we hit a contradiction, we know that we have “gone wrong” – either by making an error in calculation or logic, or by beginning with a false assumption. In Problem 252. the observations you were expected to make are paradoxical: you obtained two different series, which both correspond to “$\frac{1}{2}S$”, but every term in one series is larger than the corresponding term in the other! What one can conclude may not be entirely clear. But it is certainly clear that something is wrong: we have somehow created a contradiction. The three steps ((i), (ii), (iii)) appear to be relatively sensible. But the final observation “$\frac{1}{2}S > \frac{1}{2}S$” (since $1 > \frac{1}{2}$, $\frac{1}{3} > \frac{1}{4}$, etc.) makes no sense. And the only obvious assumption we have made is to assume that the endless sum

$$\frac{1}{1} + \frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \cdots$$

can be assigned a value “S”, which can then be manipulated as though it were a number.

The conclusion would seem to be that, whether or not the endless sum has a meaning, it cannot be assigned a value in this way. We say that the series diverges. Each finite sum

$$\frac{1}{1} + \frac{1}{2} + \frac{1}{3} + \cdots + \frac{1}{n}$$

has a value, and these values “grow more and more slowly” as n increases:

- the first term immediately makes the sum = 1;

- it takes 4 terms to obtain a sum > 2;

- it takes 11 terms to obtain a sum > 3; and

- it takes 12367 terms before the series reaches a sum > 10.

However, this slow growth is not enough to guarantee that the corresponding endless sum corresponds to a finite numerical value.
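The counts quoted above can be checked directly. The following is a minimal sketch, assuming ordinary floating-point arithmetic is accurate enough here (the partial sums cross each threshold with a margin far larger than the rounding error); the function name `terms_needed` is purely illustrative.

```python
def terms_needed(target: float) -> int:
    """Return the least n for which 1/1 + 1/2 + ... + 1/n exceeds target."""
    total = 0.0
    n = 0
    while total <= target:
        n += 1
        total += 1.0 / n
    return n


if __name__ == "__main__":
    for target in (2, 3, 10):
        print(f"sum first exceeds {target} after {terms_needed(target)} terms")
```

A computation of this kind shows how slowly the finite sums grow, but it cannot settle whether the endless sum has a finite value: that requires an argument valid for every n.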

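The danger signals should already have been apparent in Problem 249., where you proved that

$$\frac{1}{\sqrt{1}} + \frac{1}{\sqrt{2}} + \frac{1}{\sqrt{3}} + \cdots + \frac{1}{\sqrt{n}} \geq \sqrt{n}.$$

The nth term $\frac{1}{\sqrt{n}}$ tends to 0 as n increases; so the finite sums grow ever more slowly as n increases. However, the LHS can be made larger than any integer K simply by taking more than $K^2$ terms. Hence there is no way to assign a finite value to the endless sum

$$\frac{1}{\sqrt{1}} + \frac{1}{\sqrt{2}} + \frac{1}{\sqrt{3}} + \cdots .$$
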
Problem 253.

(a)(i) Explain why

(ii) Explain why

(iii) Extend parts (i) and (ii) to prove that

(iv) Finally use the fact that, when ,

to modify the proof in (iii) slightly, and hence show that

(b)(i) Explain why

(ii) Explain why

(iii) Extend parts (i) and (ii) to prove that

(c) Combine parts (a) and (b) to show that, for all , we have the two inequalities

Conclude that the endless sum

cannot be assigned a finite value.

The result in Problem 253.(c) has an unexpected consequence.

Problem 254 Imagine that you have an unlimited supply of identical rectangular strips of length 2. (Identical empty plastic CD cases can serve as a useful illustration, provided one focuses on their rectangular side profile, rather than the almost square frontal cross-section.) The goal is to construct a ‘stack’ in such a way as to stick out as far as possible beyond a table edge. One strip balances exactly at its midpoint, so can protrude a total distance of 1 without tipping over.

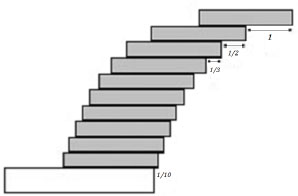

(a) Arrange a stack of n strips of length 2, one on top of the other, with the bottom strip protruding distance $\frac{1}{n}$ beyond the edge of the table, the second strip from the bottom protruding $\frac{1}{n-1}$ beyond the leading edge of the bottom strip, the third strip from the bottom protruding $\frac{1}{n-2}$ beyond the leading edge of the strip below it, and so on until the $(n-1)$th strip from the bottom protrudes distance $\frac{1}{2}$ beyond the leading edge of the strip below it, and the top strip protrudes distance 1 beyond the leading edge of the strip below it (see Figure 10). Prove that a stack of n identical strips arranged in this way will just avoid tipping over the table edge.

(b) Conclude that we can choose n so that an arrangement of n strips can (in theory) protrude as far beyond the edge of the table as we wish – without tipping.
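Before attempting a proof, it may help to test the balancing claim for particular values of n. The sketch below assumes the protrusions are 1/n, 1/(n-1), ..., 1/2, 1 from the bottom strip upwards, and that "just avoids tipping" means that the centre of mass of each strip together with everything above it lies exactly over the edge that supports it. The function names are illustrative only, and exact fractions are used so that the equality test is reliable.

```python
from fractions import Fraction


def leading_edges(n: int) -> list[Fraction]:
    """Leading-edge positions, bottom strip first, with the table edge at 0."""
    edges = []
    position = Fraction(0)
    for i in range(n):                   # i = 0 is the bottom strip
        position += Fraction(1, n - i)   # protrusions 1/n, 1/(n-1), ..., 1/2, 1
        edges.append(position)
    return edges


def just_balances(n: int) -> bool:
    """Check that every sub-stack has its centre of mass exactly over its support."""
    edges = leading_edges(n)
    supports = [Fraction(0)] + edges[:-1]    # table edge, then each strip's leading edge
    for i in range(n):
        above = edges[i:]                    # strip i and all strips above it
        centres = [e - 1 for e in above]     # a strip of length 2 has its centre 1 behind its leading edge
        centre_of_mass = sum(centres, Fraction(0)) / len(centres)
        if centre_of_mass != supports[i]:
            return False
    return True


if __name__ == "__main__":
    print(just_balances(10), float(leading_edges(10)[-1]))
```

The last entry of `leading_edges(n)` is the total overhang of the top strip, which is the quantity that matters in part (b).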

The next problem illustrates, in the context of the harmonic series, what is in fact a completely general phenomenon: an endless sum of steadily decreasing positive terms may converge or diverge; but provided the terms themselves converge to 0, then the corresponding “alternating sum” – where the same terms are combined but with alternately positive and negative signs – always converges.

Figure 10: Overhanging strips, n = 10.

Problem 255

(a) Let

(where the final operation is “+” if n is odd and “-” if n is even).

(i) Prove that

for all .

(ii) Conclude that the endless alternating sum

can be assigned a value s that lies somewhere between and .

(b) Let

be an endless, decreasing sequence of positive terms (that is, for all ). Suppose that the sequence of terms converges to 0 as .

(i) Let

(where the final operation is “+” if n is odd and “−” if n is even). Prove that

(ii) Conclude that the endless alternating sum

can be assigned a value s that lies somewhere between and .

Just as with the series

we can show relatively easily that

can be assigned a value s. It is far less clear whether this value has a familiar name! (It is in fact equal to the natural logarithm of 2: “$\ln 2$”.) A similarly intriguing series is the alternating series of odd terms from the harmonic series:

$$\frac{1}{1} - \frac{1}{3} + \frac{1}{5} - \frac{1}{7} + \cdots .$$

You should be able to show that this endless series can be assigned a value somewhere between $\frac{2}{3}$ and 1; but you are most unlikely to guess that its value is equal to $\frac{\pi}{4}$. This was first discovered in 1674 by Leibniz (1646–1716). One way to obtain the result is using the integral of $\frac{1}{1+x^2}$ from 0 to 1: on the one hand the integral is equal to $\arctan x$ evaluated when $x = 1$ (that is, $\frac{\pi}{4}$); on the other hand, we can expand the integrand as a power series $1 - x^2 + x^4 - x^6 + \cdots$, integrate term by term, and prove that the resulting series converges when $x = 1$. (It does indeed converge, though it does so very, very slowly.)

The fact that the alternating harmonic series has the value $\ln 2$ seems to have been first shown by Euler (1707–1783), using the power series expansion for $\ln(1+x)$.
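The slow convergence of these two alternating series is easy to observe numerically. The sketch below (with illustrative function names) computes partial sums in floating point and compares them with the natural logarithm of 2 and with one quarter of π. Consecutive partial sums lie on opposite sides of the limiting value, which is the behaviour Problem 255 asks you to establish.

```python
import math


def alternating_harmonic(n: int) -> float:
    """Sum of the first n terms of 1 - 1/2 + 1/3 - 1/4 + ..."""
    return sum((-1) ** (k + 1) / k for k in range(1, n + 1))


def leibniz(n: int) -> float:
    """Sum of the first n terms of 1 - 1/3 + 1/5 - 1/7 + ..."""
    return sum((-1) ** k / (2 * k + 1) for k in range(n))


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n,
              alternating_harmonic(n) - math.log(2),
              leibniz(n) - math.pi / 4)
```

Even after 1000 terms the two partial sums agree with their limits only to about three decimal places.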

6.7. Induction in geometry, combinatorics and number theory

We turn next to a mixed collection of problems designed to highlight a range of applications.

Problem 256 Let , . Prove by induction that has at least k distinct prime factors.

Problem 257

(a) Prove by induction that n points on a straight line divide the line into $n + 1$ parts.

(b)(i) By experimenting with small values of n, guess a formula for the maximum number of regions which can be created in the plane by an array of n straight lines.

(ii) Prove by induction that n straight lines in the plane divide the plane into at most $\binom{n}{2} + \binom{n}{1} + \binom{n}{0}$ regions.

(c)(i) By experimenting with small values of n, guess a formula for the maximum number of regions which can be created in 3-dimensions by an array of n planes.

(ii) Prove by induction that n planes in 3-dimensions divide space into at most $\binom{n}{3} + \binom{n}{2} + \binom{n}{1} + \binom{n}{0}$ regions.
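The experiments in parts (b)(i) and (c)(i) can be mechanised. The sketch below rests on two assumptions that a proof would need to justify: a new line is cut by k - 1 earlier lines into at most k pieces, each of which splits one existing region in two; and a new plane is cut by k - 1 earlier planes in at most k - 1 lines, so it adds at most as many regions as those lines create in a plane. The function names are illustrative, and the binomial expressions are the bounds in the usual form.

```python
from math import comb


def max_line_regions(n: int) -> int:
    """Maximum number of regions created in the plane by n lines, built one line at a time."""
    regions = 1                      # no lines: the whole plane
    for k in range(1, n + 1):
        regions += k                 # the k-th line adds at most k new regions
    return regions


def max_plane_regions(n: int) -> int:
    """Maximum number of regions created in space by n planes, built one plane at a time."""
    regions = 1                      # no planes: the whole of space
    for k in range(1, n + 1):
        regions += max_line_regions(k - 1)   # regions cut out on the k-th plane
    return regions


if __name__ == "__main__":
    for n in range(6):
        print(n, max_line_regions(n), max_plane_regions(n))
```

Agreement with the binomial formulas for small n is evidence for them, not a proof. The induction step is precisely the justification of the two assumptions above.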

Problem 258 Given a square, prove that, for each $n \geq 6$, the initial square can be cut into n squares (of possibly different sizes).

Problem 259 A tree is a connected graph, or network, consisting of vertices and edges, but with no cycles (or circuits). Prove that a tree with n vertices has exactly $n - 1$ edges.

The next problem concerns spherical polyhedra. A spherical polyhedron is a polyhedral surface in 3-dimensions, which can be inflated to form a sphere (where we assume that the faces and edges can stretch as required). For example, a cube is a spherical polyhedron; but the surface of a picture frame is not. A spherical polyhedron has

- faces (flat 2-dimensional polygons, which can be stretched to take the form of a disc),

- edges (1-dimensional line segments, where exactly two faces meet), and

- vertices (0-dimensional points, where several faces and edges meet, in such a way that they form a single cycle around the vertex).

Each face must clearly have at least 3 edges; and there must be at least three edges and three faces meeting at each vertex.

If a spherical polyhedron has V vertices, E edges, and F faces, then the numbers V, E, F satisfy Euler's formula

$$V - E + F = 2.$$

For example, a cube has $V = 8$ vertices, $E = 12$ edges, and $F = 6$ faces, and $8 - 12 + 6 = 2$.
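As a quick sanity check, Euler's formula can be tested on the five regular polyhedra. The counts below are the standard ones and the names in the sketch are illustrative.

```python
# (V, E, F) counts for the five regular polyhedra.
POLYHEDRA = {
    "tetrahedron": (4, 6, 4),
    "cube": (8, 12, 6),
    "octahedron": (6, 12, 8),
    "dodecahedron": (20, 30, 12),
    "icosahedron": (12, 30, 20),
}


def euler_characteristic(v: int, e: int, f: int) -> int:
    """Return V - E + F for the given counts."""
    return v - e + f


if __name__ == "__main__":
    for name, (v, e, f) in POLYHEDRA.items():
        print(name, euler_characteristic(v, e, f))
```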

Problem 260

(a) (i) Describe a spherical polyhedron with exactly 6 edges.

(ii) Describe a spherical polyhedron with exactly 8 edges.

(b) Prove that no spherical polyhedron can have exactly 7 edges.

(c) Prove that for every $n \geq 8$, there exists a spherical polyhedron with n edges.

Problem 261 A map is a (finite) collection of regions in the plane, each with a boundary, or border, that is ‘polygonal’ in the sense that it consists of a single sequence of distinct vertices and – possibly curved – edges, that separates the plane into two parts, one of which is the polygonal region itself. A map can be properly coloured if each region can be assigned a colour so that each pair of neighbouring regions (sharing an edge) always receive different colours. Prove that the regions of such a map can be properly coloured with just two colours if and only if an even number of edges meet at each vertex.

Problem 262 (Gray codes) There are $2^n$ sequences of length n consisting of 0s and 1s. Prove that, for each $n \geq 2$, these sequences can be arranged in a cyclic list such that any two neighbouring sequences (including the last and the first) differ in exactly one coordinate position.
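One construction that is often used here is the "reflected" list: take a valid list for length n - 1, prefix each entry with 0, then append the same list in reverse order with each entry prefixed by 1. The sketch below is only an illustration of that idea, not necessarily the intended solution, and the function names are hypothetical. It builds such lists and checks the required property for small n.

```python
def gray_code(n: int) -> list[str]:
    """Return a list of all 0-1 strings of length n built by the reflected construction."""
    if n == 1:
        return ["0", "1"]
    shorter = gray_code(n - 1)
    # Prefix 0 to the shorter list, then prefix 1 to the shorter list reversed.
    return ["0" + s for s in shorter] + ["1" + s for s in reversed(shorter)]


def is_cyclic_gray(seqs: list[str]) -> bool:
    """Check that cyclically neighbouring strings differ in exactly one position."""
    m = len(seqs)
    return all(
        sum(a != b for a, b in zip(seqs[i], seqs[(i + 1) % m])) == 1
        for i in range(m)
    )


if __name__ == "__main__":
    print(gray_code(3))
    print(is_cyclic_gray(gray_code(3)))
```

The recursive definition mirrors the shape of an induction proof: the check at each junction between the two halves is exactly what the induction step has to verify.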

Problem 263 (Calkin–Wilf tree) The binary tree in the plane has a distinguished vertex as ‘root’, and is constructed inductively. The root is joined to two new vertices; and each new vertex is then joined to two further new vertices – with the construction process continuing for ever (Figure 11). Label the vertices of the binary tree with positive fractions as follows:

- the root is given the label $\frac{1}{1}$;

- whenever we know the label $\frac{i}{j}$ of a ‘parent’ vertex, we label its ‘left descendant’ as $\frac{i}{i+j}$, and its ‘right descendant’ as $\frac{i+j}{j}$.

(a) Prove that every positive rational occurs once and only once as a label, and that it occurs in its lowest terms.

(b) Prove that the labels are left-right symmetric in the sense that labels in corresponding left and right positions are reciprocals of each other.
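The claims in parts (a) and (b) can be explored on the first few levels of the tree. The sketch below assumes the standard Calkin–Wilf labelling: root 1/1, and a parent i/j with left descendant i/(i+j) and right descendant (i+j)/j. Labels are stored as pairs (i, j) rather than as reduced fractions, so that the "lowest terms" claim can be tested rather than imposed. The function name is illustrative.

```python
from math import gcd


def calkin_wilf_levels(depth: int) -> list[list[tuple[int, int]]]:
    """Return levels 0..depth of the tree, each listed from left to right as (i, j) pairs."""
    levels = [[(1, 1)]]
    for _ in range(depth):
        next_level = []
        for i, j in levels[-1]:
            next_level.append((i, i + j))   # left descendant i/(i+j)
            next_level.append((i + j, j))   # right descendant (i+j)/j
        levels.append(next_level)
    return levels


if __name__ == "__main__":
    for level in calkin_wilf_levels(3):
        print(" ".join(f"{i}/{j}" for i, j in level))
```

A finite check cannot show that every positive rational eventually appears; that is where induction (for example on i + j) is needed.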

Problem 264 A collection of n intervals on the x-axis is such that every pair of intervals have a point in common. Prove that all n intervals must then have at least one point in common.

6.8. Two problems

Problem 265 Several identical tanks of water sit on a horizontal base. Each pair of tanks is connected with a pipe at ground level controlled by a valve, or tap. When a valve is opened, the water level in the two connected tanks becomes equal (to the average, or mean, of the initial levels). Suppose we start with tank T which contains the least amount of water. The aim is to open and close valves in a sequence that will lead to the final water level in tank T being as high as possible. In what order should we make these connections?

Figure 11: A (rooted) binary tree.

Problem 266 I have two flasks. One is ‘empty’, but still contains a residue of a dangerous chemical; the other contains a fixed amount of solvent that can be used to wash away the remaining traces of the dangerous chemical. What is the best way to use the fixed quantity of solvent? Should I use it all at once to wash out the first flask? Or should I first wash out the flask using just half of the solvent, and then repeat with the other half? Or is there a better way of using the available solvent to remove as much as possible of the dangerous chemical?

6.9. Infinite descent

In this final section we touch upon an important variation on mathematical induction. This variation is well-illustrated by the next (probably familiar) problem.

Problem 267 Write out for yourself the following standard proof that $\sqrt{2}$ is irrational.

(i) Suppose to the contrary that $\sqrt{2}$ is rational. Then $\sqrt{2} = \frac{m}{n}$ for some positive integers m, n. Prove first that m must be even.

(ii) Write $m = 2m'$, where $m'$ is also an integer. Show that n must also be even.

(iii) How does this lead to a contradiction?

Problem 267. has the classic form of a proof which reaches a contradiction by infinite descent.

- We start with a claim which we wish to prove is true. Often when we do not know how to begin, it makes sense to ask what would happen if the claim were false. This then guarantees that there must be some counterexample, which satisfies the given hypothesis, but which fails to satisfy the asserted conclusion.

- Infinite descent becomes an option whenever each such counterexample gives rise to some positive integer parameter n (such as the denominator in Problem 267.(i)).

- Infinite descent becomes a reality, if one can prove that the existence of the initial counterexample leads to a construction that produces a counterexample with a smaller value of the parameter n, since repeating this step then gives rise to an endlessly decreasing sequence of positive integers, which is impossible (since such a chain can have length at most n).

- Hence the initial assumption that the claim was false must itself be false – so the claim must be true (as required).

Proof by “infinite descent” is an invaluable tool. But it is important to realise that the method is essentially a variation on proof by mathematical induction. As a first step in this direction it is worth reinterpreting Problem 267. as an induction proof.

Problem 268 Let P(n) be the statement:

“$\sqrt{2}$ cannot be written as a fraction with positive denominator $\leq n$”.

(i) Explain why P(1) is true.

(ii) Suppose that P(k) is true for some $k \geq 1$. Use the proof in Problem 267. to show that P(k + 1) must then be true as well.

(iii) Conclude that P(n) is true for all $n \geq 1$, whence $\sqrt{2}$ must be irrational.

Problem 268. shows that, in the particular case of Problem 267., one can translate the standard proof that “$\sqrt{2}$ is irrational” into a proof by induction. But much more is true. The contradiction arising in the third step of the outline of infinite descent above is an application of an important principle, namely

The Least Element Principle: Every non-empty set S of positive integers has a smallest element.

The Least Element Principle is equivalent to The Principle of Mathematical Induction which we stated at the beginning of the chapter:

The Principle of Mathematical Induction: If a subset S of the positive integers

- contains the integer “1”, and has the property that

- whenever an integer k is in the set S, then the next integer $k + 1$ is always in S too,

then S contains all positive integers.

Problem 269

(a) Assume the Least Element Principle. Suppose a subset T of the positive integers contains the integer “1”, and that whenever k is in the set T, then $k + 1$ is also in the set T. Let S be the set of all positive integers which are not in the set T. Conclude that S must be empty, and hence that T contains all positive integers.

(b) Assume the Principle of Mathematical Induction. Let T be a non-empty set of positive integers, and suppose that T does not have a smallest element. Let S be the set of all positive integers which do not belong to the set T. Prove that “1” must belong to S, and that whenever the positive integer k belongs to S, then so does $k + 1$. Derive the contradiction that T must be empty, contrary to assumption. Conclude that T must in fact have a smallest element.

To round off this final chapter you are invited to devise a rather different proof of the irrationality of $\sqrt{2}$.

Problem 270 This sequence of constructions presumes that we know – for example, by Pythagoras' Theorem – that, in any square , the ratio

“diagonal: side” .

Let be a square. Let the circle with centre Q and passing through P meet at . Construct the perpendicular to at , and let this meet at .

(i) Explain why . Construct the point to complete the square .

(ii) Join . Explain why .

(iii) Suppose . Conclude that .

(iv) Prove that , and hence that , .

(v) Explain how, if m, n can be chosen to be positive integers, the above sequence of steps sets up an “infinite descent” – which is impossible.

6.10. Chapter 6: Comments and solutions

Note: It is important to separate the underlying idea of “induction” from the formal way we have chosen to present proofs. As ever in mathematics, the ideas are what matter most. But the process of struggling with (and slowly coming to understand why we need) the formal structure behind the written proofs is part of the way the ideas are tamed and organised.

Readers should not be intimidated by the physical extent of the solutions to this chapter. As explained in the main text it is important for all readers to review the way they approach induction proofs: so we have erred in favour of completeness – knowing that as each reader becomes more confident, s/he will increasingly compress, or abbreviate, some of the steps.

(a) Yes.

(b) Yes.

and , so we only need to check prime factors up to .(c) No.

and so we might have to check all possible prime factors up to . However, we only have to start at [Why?], and checking with a calculator is very quick. In fact factorises rather prettily as .

229. Note: The statement in the problem includes the quantifier “for all $n \geq 1$”.

What is to be proved is the compound statement

“P(n) is true for all $n \geq 1$”.

In contrast, each individual statement P(n) refers to a single value of n.

It is essential to be clear when you are dealing with the compound statement, and when you are referring to some particular statement P(1), or P(n), or P(k).

Let P(n) be the statement:

“$5^{2n+2} - 24n - 25$ is divisible by 576”.

- P(1) is the statement:

“$5^{2 \times 1 + 2} - 24 \times 1 - 25$ is divisible by 576”.

That is:

“$625 - 49 = 576$ is divisible by 576”,

which is evidently true.

- Now suppose that we know P(k) is true for some $k \geq 1$. We must show that P(k + 1) is then also true.

To prove that P(k + 1) is true, we have to consider the statement P(k + 1). It is no use starting with P(k). However, since we know that P(k) is true, we are free to use it at any stage if it turns out to be useful.

To prove that P(k + 1) is true, we have to show that

“$5^{2(k+1)+2} - 24(k+1) - 25$ is divisible by 576”.

So we must start with $5^{2(k+1)+2} - 24(k+1) - 25$ and try to “simplify” it (knowing that “simplify” in this case means “rewrite it in a way that involves $5^{2k+2} - 24k - 25$”).

$$5^{2(k+1)+2} - 24(k+1) - 25 = 5^2\left(5^{2k+2} - 24k - 25\right) + 24^2 k + \left(5^4 - 24 - 25\right).$$

The first term on the RHS is a multiple of $5^{2k+2} - 24k - 25$, so is divisible by 576 (since we know that P(k) is true); the second term on the RHS is a multiple of $24^2 = 576$; and the third term on the RHS is the expression arising in P(1), which we saw is equal to 576.

∴ the whole RHS is divisible by 576

∴ the LHS is divisible by 576, so P(k + 1) is true.

Hence

- P(1) is true; and

- whenever P(k) is true for some $k \geq 1$, we have proved that P(k + 1) must be true.

∴ P(n) is true for all $n \geq 1$.
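A numerical check can catch slips in an argument of this kind. The sketch below assumes that P(n) is the statement that $5^{2n+2} - 24n - 25$ is divisible by $576 = 24^2$. This reading is an assumption: it is consistent with the remarks above about the three terms on the RHS, but the problem statement itself is not reproduced in this section. The function name is illustrative.

```python
def expression(n: int) -> int:
    """The expression assumed to appear in P(n): 5^(2n+2) - 24n - 25."""
    return 5 ** (2 * n + 2) - 24 * n - 25


def induction_step_identity_holds(k: int) -> bool:
    """Check the rewriting of the P(k+1) expression in terms of the P(k) expression."""
    return expression(k + 1) == 25 * expression(k) + 24 ** 2 * k + expression(1)


if __name__ == "__main__":
    print(expression(1))                                    # the value arising in P(1)
    print(all(expression(n) % 576 == 0 for n in range(1, 21)))
    print(all(induction_step_identity_holds(k) for k in range(1, 21)))
```

Checking finitely many cases proves nothing about “all n”; it is the identity in the induction step, valid for every k, that carries the proof.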

230. Let P(n) be the statement:

“the angles of any p-gon, for any value of p with $3 \leq p \leq n$, have sum exactly $(p - 2)\pi$ radians”.

- P(3) is the statement:

“the angles of any triangle have sum $\pi$ radians”.

This is a known fact: given triangle ΔABC, draw the line through A parallel to , with X on the same side of as B and Y on the same side of as C. Then and (alternate angles), so

- Now we suppose that P(k) is known to be true for some $k \geq 3$. We must show that P(k + 1) is then necessarily true.

To prove that P(k + 1) is true, we have to consider the statement P(k + 1): that is,

“the angles of a p-gon, for any value of p with $3 \leq p \leq k + 1$, have sum exactly $(p - 2)\pi$ radians”.

This can be reworded by splitting it into two parts:

“the angles of any p-gon for $3 \leq p \leq k$ have sum exactly $(p - 2)\pi$ radians;”

and

“the angles of any (k + 1)-gon have sum exactly $(k - 1)\pi$ radians”.

The first part of this revised version is precisely the statement P(k), which we suppose is known to be true. So the crux of the matter is to prove the second part – namely that the angles of any (k + 1)-gon have sum $(k - 1)\pi$.

Let be any (k + 1)-gon.

[Note: The usual move at this point is to say “draw the chord to cut the polygon into the triangle (with angle sum (by ), and the k-gon (with angle sum (by ), whence we can add to see that has angle sum . However, this only works

- if the triangle “sticks out” rather than in, and

- if no other vertices or edges of the (k+ 1)-gon encroach into the part that is cut off – which can only be guaranteed if the polygon is “convex”.

So what is usually presented as a “proof” does not work in general.

If we want to prove the general result – for polygons of all shapes – we have to get round this unwarranted assumption. Experiment may persuade you that “there is always some vertex that sticks out and which can be safely “cut off”; but it is not at all clear how to prove this fact (we know of no simple proof). So we have to find another way.]

Consider the vertex , and its two neighbours and .

Imagine each half-line, which starts at , and which sets off into the interior of the polygon. Because the polygon is finite, each such half-line defines a line segment , where X is the next point of the polygon which the half line hits (that is, X is one of the vertices , or a point on one of the sides ).

Consider the locus of all such points X as the half line swings from (produced) to (produced). There are two possibilities: either

(a) these points X all belong to a single side of the polygon; or

(b) they don't.

(a) In the first case none of the vertices or sides of the polygon intrude into the interior of the triangle , so the chord separates the (k + 1)-gon into a triangle and a k-gon . The angle sum of is then equal to the sum of (i) the angle sum of the triangle and (ii) the angle sum of – which are equal to and respectively (by ). So the angle sum of the (k + 1)-gon is equal to as required.

(b) In the second case, as the half-line rotates from to , the point X must at some instant switch from lying on one side of the polygon to lying on another side; at the very instant where X switches sides, must be a vertex of the polygon.

Because of the way the point X was chosen, the chord does not meet any other point of the (k + 1)-gon , and so splits the (k + 1)-gon into an m-gon (with angle sum by ) and a (k - m + 3)-gon (with angle sum by ). So the (k + 1)-gon has angle sum as required.

Hence is true.

is true for all .

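231. Let P(n) be the statement

“$1 + 3 + 5 + \cdots + (2n - 1) = n^2$”.

- LHS of P(1) = 1; RHS of P(1) = $1^2$ = 1. Since these two are equal, P(1) is true.

- Suppose that P(k) is true for some particular (unspecified) $k \geq 1$; that is, we know that, for this particular k,

“$1 + 3 + 5 + \cdots + (2k - 1) = k^2$”.

We wish to prove that P(k + 1) must then be true.

Now P(k + 1) is an equation, so we start with the LHS of P(k + 1) and try to simplify it in an appropriate way to get the RHS of P(k + 1):

$$1 + 3 + 5 + \cdots + (2k - 1) + (2k + 1) = \left(1 + 3 + 5 + \cdots + (2k - 1)\right) + (2k + 1).$$

If we now use P(k), which we are supposing to be true, then the first bracket is equal to $k^2$, so this sum is equal to:

$$k^2 + (2k + 1) = (k + 1)^2.$$

Hence P(k + 1) holds.

Combining these two bullet points then shows that “P(n) holds, for all $n \geq 1$”.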

(a) The only way to learn is by trying and failing; then trying again and failing slightly better! So don't give up too quickly. It is natural to try to relate each term to the one before. You may then notice that each term is slightly less than 3 times the previous term.

(b) Note: The recurrence relation for involves the two previous terms. So when we assume that is known to be true and try to prove , the recurrence relation for will involve and , so needs to be formulated to ensure that we can use closed expressions for both these terms. For the same reason, the induction proof has to start by showing that both and are true.

Let be the statement:

“ for all m, ”.

- LHS of ; RHS of . Since

these two are equal, is true.

combines , and the equality of and ; since these two are equal, is true.

- Suppose that is true for some particular (unspecified) ; that is,

we know that, for this particular k,

“ for all m, .”

We wish to prove that must then be true.

Now requires us to prove that

“ for all m, .”

Most of this is guaranteed by , which we assume to be true. It remains for us to check that the equality holds for . We know that

And we may use ), which we are supposing to be true, to conclude that:

Hence holds.

Combining these two bullet points then shows that “ holds, for all ”.

233. Let be the statement:

“ for all m, ”,

where and .

LHS of ; RHS of . Since these two are equal, is true.

LHS of ; RHS of . Since these two are equal, is true.

Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k,

“ for all m, .”

We wish to prove that must then be true.

Now requires us to prove that

“ for all m, .”

Most of this is guaranteed by , which we assume to be true. It remains to check this for . We know that

And we may use , which we are supposing to be true to conclude that:

(since and are roots of the equation ) Hence holds.

Combining these two bullet points then shows that “ holds, for all ”.

Note: You may understand the above solution and yet wonder how such a formula could be discovered. The answer is fairly simple. There is a general theory about linear recurrence relations which guarantees that the set of all solutions of a second order recurrence (that is, a recurrence in which each term depends on the two previous terms) is “two dimensional” (that is, it is just like the familiar 2D plane, where every vector (p, q) is a combination of the two “base vectors” (1, 0) and (0, 1):

Once we know this, it remains:

- to find two special solutions (like the vectors (1, 0) and (0, 1) in the plane), which we do here by looking for sequences of the form “” that satisfy the recurrence, which implies that , so , or ;

- then to choose a linear combination of these two power solutions to give the correct first two terms: , , so , .
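The two-step recipe in the Note can be sketched in code. The recurrence of Problem 233 is not reproduced in this section, so the sketch takes the Fibonacci recurrence (each term is the sum of the two before it, starting 0, 1) purely as an illustrative assumption. The helper name and its parameters p, q, u0, u1 are hypothetical, and the method as written assumes that the quadratic has two distinct real roots.

```python
import math
from typing import Callable


def closed_form(u0: float, u1: float, p: float, q: float) -> Callable[[int], float]:
    """Closed form for u_{n+2} = p*u_{n+1} + q*u_n with given u_0 and u_1.

    Step 1: powers x^n satisfy the recurrence when x^2 = p*x + q.
    Step 2: choose A, B so that A*r1^n + B*r2^n has the right first two terms.
    """
    disc = p * p + 4 * q
    if disc <= 0:
        raise ValueError("this sketch assumes two distinct real roots")
    r1 = (p + math.sqrt(disc)) / 2
    r2 = (p - math.sqrt(disc)) / 2
    # Solve A + B = u0 and A*r1 + B*r2 = u1.
    a = (u1 - u0 * r2) / (r1 - r2)
    b = (u0 * r1 - u1) / (r1 - r2)
    return lambda n: a * r1 ** n + b * r2 ** n


def by_recurrence(u0: int, u1: int, p: int, q: int, n: int) -> int:
    """Compute u_n directly from the recurrence, for comparison."""
    x, y = u0, u1
    for _ in range(n):
        x, y = y, p * y + q * x
    return x


if __name__ == "__main__":
    fib = closed_form(0, 1, 1, 1)
    print([round(fib(n)) for n in range(10)])
```

The floating-point values are rounded only for display. The induction proof above is what guarantees that the closed formula is exactly right for every n.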

234. Let be the statement:

“ is divisible by ”.

- is the statement: “ is divisible by 19”, which is true.

- Now suppose that we know that is true for some . We must show that is then also true.

- To prove that is true, we have to show that

“ is divisible by ”.

The bracket in the first term on the RHS is divisible by 19 (by ), and the bracket in the second term is equal to 19. Hence both terms on the RHS are divisible by 19, so the RHS is divisible by 19. Therefore the LHS is also divisible by 19, so is true.

is true for all .

235.

Note: The proofs of identities such as those in Problem 235., which are given in many introductory texts, ignore the lessons of the previous two problems. In particular,

• they often fail to distinguish between

– the single statement for a particular n, and

– the “quantified” result to be proved (“for all ”),

and

• they proceed in the ‘wrong’ direction (e.g. starting with the identity and “adding the same to both sides”).

This latter strategy is psychologically and didactically misleading – even though it can be justified logically when proving very simple identities. In these very simple cases, each statement to be proved is unusual in that it refers to exactly one configuration, or equation, for each . And since there is exactly one configuration for each n, the configuration or identity for can often be obtained by fiddling with the configuration for k. In contrast, in Problem 230., for each value of n, there is a bewildering variety of possible polygons with n vertices, ranging from regular polygons to the most convoluted, re-entrant shapes: the statement makes an assertion about all such configurations, and there is no way of knowing whether we can obtain all such configurations for in a uniform way by fiddling with some configuration for k.

Readers should try to write each proof in the intended spirit, and to learn from the solutions – since this style has been chosen to highlight what mathematical induction is really about, and it is this approach that is needed in serious applications.

(a) Let be the statement:

“”.

- LHS of ; RHS of . Since these two are equal, is true.

- Suppose that is true for some particular (unspecified) ; that is,

we know that, for this particular k,

“ ”.

We wish to prove that must then be true.

Now is an equation, so we start with the LHS of and try to simplify it in an appropriate way to get the RHS of ):

If we now use , which we are supposing to be true, then the first bracket is equal to , so the complete sum is equal to:

Hence holds.

If we combine these two bullet points, then we have proved that “ holds for all ”.

(b) Let be the statement:

“”.

- LHS of ; RHS of . Since these two are equal, is true.

- Suppose that is true for some particular (unspecified) ; that is,

we know that, for this particular k,

“”.

We wish to prove that must then be true.

Now is an equation, so we start with the LHS of and try to simplify it in an appropriate way to get the RHS of :

If we now use , which we assume is true, then the first bracket is equal to , so the complete sum is equal to:

Hence holds.

If we combine these two bullet points, we have proved that “ holds for all ”.

(c) Note: If $r = 1$, then the LHS is equal to n, but the RHS makes no sense. So we assume $r \neq 1$.

Let be the statement:

“ ”.

- LHS of ; RHS of . Since these two are equal, is true.

- Suppose that is true for some particular (unspecified) ; that is,

we know that, for this particular k,

“”.

We wish to prove that must then be true.

Now is an equation, so we start with the LHS of and try to simplify it in an appropriate way to get the RHS of :

If we now use , which we assume is true, then the first bracket is equal to

so the complete sum is equal to:

Hence holds.

If we combine these two bullet points, we have proved that “ holds for all ”.

(d) Note: The statement to be proved starts with a term involving “0!”. The definition

$$n! = n \times (n-1) \times (n-2) \times \cdots \times 2 \times 1$$

does not immediately tell us how to interpret “$0!$”. The correct interpretation emerges from the fact that several different thoughts all point in the same direction.

(i) When $n > 0$, then to get from $n!$ to $(n+1)!$ we multiply by $(n+1)$. If we extend this to $n = 0$, then “to get from $0!$ to $1!$, we have to multiply by 1” – which suggests that $0! = 1$.

(ii) When $n > 0$, $n!$ counts the number of permutations of n symbols, or the number of different linear orders of n objects (i.e. how many different ways they can be arranged in a line). If we extend this to $n = 0$, we see that there is just one way to arrange 0 objects (namely, sit tight and do nothing).

(iii) The definition of $n!$ as a product suggests that $0!$ involves a “product with no terms” at all. Now when we “add no terms together” it makes sense to interpret the result as “= 0” (perhaps because if this “sum of no terms” were added to some other sum, it would make no difference). In the same spirit, the product of no terms should be taken to be “= 1” (since if this empty product were included at the end of some other product, it would make no difference to the result).
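The convention described in (iii) is also the one adopted by most programming languages. A minimal Python sketch (the helper name `factorial_as_product` is purely illustrative) shows the sum of no terms evaluating to 0 and the product of no terms evaluating to 1, so that the value 1 for “0!” falls out of the product definition:

```python
import math

def factorial_as_product(n):
    """Return n! as the product of the integers 1, 2, ..., n (illustrative helper)."""
    # range(1, n + 1) is empty when n = 0, so the product then has no terms.
    return math.prod(range(1, n + 1))

empty_sum = sum([])            # a sum with no terms
empty_product = math.prod([])  # a product with no terms

print(empty_sum, empty_product, factorial_as_product(0))
```

With this convention the rule in (i), that passing from n! to (n + 1)! means multiplying by (n + 1), holds for n = 0 as well.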

Let $P(n)$ be the statement:

“”.

• LHS of ; RHS of . Since these two are equal, is true.

• Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k,

“”.

We wish to prove that $P(k+1)$ must then be true.

Now $P(k+1)$ is an equation, so we start with the LHS of $P(k+1)$ and try to simplify it in an appropriate way to get the RHS of $P(k+1)$:

If we now use , which we assume is true, then the first bracket is equal to , so the complete sum is equal to:

Hence $P(k+1)$ holds.

If we combine these two bullet points, we have proved that “ holds for all ”.

(e) Let $P(n)$ be the statement:

“ ”

• LHS of ; RHS of . Since these two are equal, is true.

• Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k,

“ ”.

We wish to prove that $P(k+1)$ must then be true.

Now $P(k+1)$ is an equation, so we start with the LHS of $P(k+1)$ and try to simplify it in an appropriate way to get the RHS of $P(k+1)$:

If we now use , which we assume is true, then the first bracket is equal to , so the complete expression is equal to:

Hence $P(k+1)$ holds.

If we combine these two bullet points, we have proved that “ holds for all ”.

236. Let $P(n)$ be the statement:

“ ”.

• LHS of ; RHS of . Since these two are equal, is true.

• Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k,

“ ”.

We wish to prove that $P(k+1)$ must then be true.

Now $P(k+1)$ is an equation, so we start with one side of $P(k+1)$ and try to simplify it in an appropriate way to get the other side of $P(k+1)$. In this instance, the RHS of $P(k+1)$ is the most promising starting point (because we know a formula for the triangular number, and so can see how to simplify it):

If we now use , which we assume is true, then the first bracket is equal to

so the complete RHS is:

Hence $P(k+1)$ holds.

If we combine these two bullet points, we have proved that “ holds for all ”.

Note: A slightly different way of organizing the proof can sometimes be useful. Denote the two sides of the equation in the statement by and respectively. Then

• ; and

• simple algebra allows one to check that, for each ,

It then follows (by induction) that for all .

237.

(a) , , . These may not be very suggestive. But

and

may eventually lead one to guess that

Note 1: This will certainly be easier to guess if you remember what you found in Problem 17 and Problem 63.

Note 2: There is another way to help in such guessing. Suppose you notice that

– adding values for up to of a polynomial of degree 0 (such as ) gives an answer that is a “polynomial of degree 1”,

and

– adding values for up to of a polynomial of degree 1 (such as ) gives an answer that is a “polynomial of degree 2”,

Then you might guess that the sum

will give an answer that is a polynomial of degree 3 in n. Suppose that

We can then use small values of n to set up equations which must be satisfied by A, B, C, D and solve them to find A, B, C, D:

– when , we get ;

– when , we get ;

– when , we get ;

– when , we get .

This method assumes that we know that the answer is a polynomial and that we know its degree: it is called “the method of undetermined coefficients”.

There are various ways of improving the basic method (such as writing the polynomial in the form

which tailors it to the values that one intends to substitute).
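As a rough illustration of the method of undetermined coefficients, the Python sketch below fits a cubic $An^3 + Bn^2 + Cn + D$ to the sum of the first n triangular numbers. It assumes that this is the sum under discussion and that $n = 0, 1, 2, 3$ are the values substituted; the function names are hypothetical.

```python
from fractions import Fraction

def triangular_sum(n):
    """Sum of the first n triangular numbers, computed directly."""
    return sum(k * (k + 1) // 2 for k in range(1, n + 1))

def solve_linear_system(rows, rhs):
    """Solve a square linear system exactly by Gauss-Jordan elimination."""
    size = len(rows)
    aug = [[Fraction(x) for x in row] + [Fraction(b)] for row, b in zip(rows, rhs)]
    for col in range(size):
        # Find a row with a non-zero entry in this column and move it into place.
        pivot = next(r for r in range(col, size) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Scale the pivot row so that the pivot entry becomes 1.
        aug[col] = [x / aug[col][col] for x in aug[col]]
        # Clear this column from every other row.
        for r in range(size):
            if r != col:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [row[-1] for row in aug]

sample_points = [0, 1, 2, 3]  # assumed choice of small values of n
rows = [[n**3, n**2, n, 1] for n in sample_points]
rhs = [triangular_sum(n) for n in sample_points]
A, B, C, D = solve_linear_system(rows, rhs)
print(A, B, C, D)
```

The fitted coefficients only produce a conjecture: they are forced by four values of n, so a proof for all n (such as the induction in part (b)) is still needed.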

(b) Let $P(n)$ be the statement:

“”.

• LHS of ; RHS of . Since these two are equal, is true.

• Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k,

We wish to prove that $P(k+1)$ must then be true.

Now $P(k+1)$ is an equation, so we start with the LHS of $P(k+1)$ and try to simplify it in an appropriate way to get the RHS of $P(k+1)$:

If we now use , which we assume is true, then the first bracket is equal to

so the complete sum is equal to:

Hence $P(k+1)$ holds.

If we combine these two bullet points, we have proved that “ holds for all ”.

Note: The triangular numbers $T_k = \frac{k(k+1)}{2}$ are also equal to the binomial coefficients $\binom{k+1}{2}$. And the sum of these binomial coefficients is another binomial coefficient $\binom{n+2}{3}$, so the result in Problem 237 can be written as:

$$\binom{2}{2} + \binom{3}{2} + \cdots + \binom{n+1}{2} = \binom{n+2}{3}.$$

You might like to interpret Problem 237 in the language of binomial coefficients, and prove it by repeated use of the basic Pascal triangle relation (Pascal (1623–1662)):

Start by rewriting

238.

(a) We know from Problem 237(b) that

Also

Therefore

(b) Guess:

The proof by induction is entirely routine, and is left for the reader.

Therefore

239.

(a) Let be any such polynomial. If , then we know (by the Remainder Theorem) that has as a factor. Since the are all distinct, and for each k, , we have

for some polynomial . And since we are told that has degree n, has degree 0, so is a constant. Hence every such polynomial of degree n has the form

Since , we can substitute to find C:

(b) Let $P(n)$ be the statement:

“Given any two labelled sets of real numbers , and , where the are all distinct (but the need not be), there exists a polynomial of degree n, such that , , , …, .”

• When , we may choose to be the constant polynomial. Hence is true.

• Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k:

“Given any two labelled sets of real numbers , and , where the are all distinct (but the need not be), there exists a polynomial of degree k, such that , , .”

We wish to prove that $P(k+1)$ must then be true.

Now $P(k+1)$ is the statement:

“Given any two labelled sets of real numbers , and , where the are all distinct (but the need not be), there exists a polynomial of degree , such that , , , …, .”

So to prove that $P(k+1)$ holds, we must start by considering

any two labelled sets of real numbers where the are all distinct (but the need not be).

We must then somehow construct a polynomial function of degree with the required property.

Because we are supposing that is known to be true, we can focus on the first numbers in each of the two lists – , and – and we can then be sure that there is a polynomial of degree k such that

The next step is slightly indirect: we make use of the polynomial which we are still trying to construct, and focus on the polynomial

satisfying

Part (a) guarantees the existence of such a polynomial of degree and tells us exactly what this polynomial function is equal to. Hence we can construct the required polynomial ) by setting it equal to , which proves that is true.

If we combine these two bullet points, we have proved that “ holds for all ”.
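The inductive construction in part (b) can be traced in code. The Python sketch below is an illustration under stated assumptions: the names `interpolate`, `xs` and `ys` are hypothetical, polynomials are stored as coefficient lists (constant term first), and at each step the previous polynomial is corrected by a constant multiple of the product of the linear factors belonging to the points already handled, in the spirit of part (a).

```python
from fractions import Fraction

def evaluate(coeffs, x):
    """Evaluate a polynomial given as [c0, c1, c2, ...] at x (Horner's rule)."""
    result = Fraction(0)
    for c in reversed(coeffs):
        result = result * x + c
    return result

def multiply_by_linear(coeffs, root):
    """Multiply a polynomial by (x - root)."""
    shifted = [Fraction(0)] + coeffs                      # x * p(x)
    scaled = [root * c for c in coeffs] + [Fraction(0)]   # root * p(x)
    return [s - t for s, t in zip(shifted, scaled)]

def interpolate(xs, ys):
    """Build a polynomial through the points (xs[i], ys[i]), one point at a time."""
    xs = [Fraction(x) for x in xs]
    ys = [Fraction(y) for y in ys]
    poly = [ys[0]]              # base case: the constant polynomial
    vanishing = [Fraction(1)]   # product of (x - xs[i]) over the points used so far
    for k in range(1, len(xs)):
        vanishing = multiply_by_linear(vanishing, xs[k - 1])
        # Choose the constant so that the corrected polynomial hits ys[k] at xs[k];
        # the correction vanishes at all earlier points, so those values are kept.
        constant = (ys[k] - evaluate(poly, xs[k])) / evaluate(vanishing, xs[k])
        correction = [constant * c for c in vanishing]
        poly = [p + q for p, q in zip(poly + [Fraction(0)], correction)]
    return poly

# Sample data taken from x^3 + x + 1 at x = 0, 1, 2, 3.
coefficients = interpolate([0, 1, 2, 3], [1, 3, 11, 31])
print(coefficients)
```

The division is legitimate only because the first list consists of distinct numbers. When a constant turns out to be 0 the degree does not increase at that step, so what this sketch produces is a polynomial of degree at most n.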

240.

(a) 5 cents cannot be made; $6 = 3 + 3$; $7 = 3 + 4$; $8 = 4 + 4$; $9 = 3 + 3 + 3$; etc.

Guess: Every amount > N = 5 can be paid directly (without receiving change).

(b) Let $P(n)$ be the statement:

“n cents can be paid directly (without change) using 3 cent and 4 cent coins”.

– $P(6)$ is the statement: “6 cents can be paid directly”. And $6 = 3 + 3$, so $P(6)$ is true.

– Now suppose that we know $P(k)$ is true for some $k \geq 6$. We must show that $P(k+1)$ must then be true.

To prove $P(k+1)$ we consider the statement $P(k+1)$:

“$k + 1$ cents can be paid directly”.

We know that $P(k)$ is true, so we know that “k cents can be paid directly”.

– If a direct method of paying k cents involves at least one 3 cent coin, then we can replace one 3 cent coin by a 4 cent coin to produce a way of paying $k + 1$ cents.

Hence we only need to worry about a situation in which the only way to pay k cents directly involves no 3 cent coins at all – that is, paying k cents uses only 4 cent coins. But then there must be at least two 4 cent coins (since $k \geq 6$), and we can replace two 4 cent coins by three 3 cent coins to produce a way of paying $k + 1$ cents directly.

Hence

• $P(6)$ is true; and

• whenever $P(k)$ is true for some $k \geq 6$, we know that $P(k+1)$ is also true.

Therefore $P(n)$ is true for all $n \geq 6$.
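The inductive step in part (b) is effectively an algorithm for turning a payment of k cents into a payment of k + 1 cents. A small Python sketch (the function names are illustrative only) applies the two replacement rules repeatedly, starting from 6 = 3 + 3:

```python
def next_payment(threes, fours):
    """Given coins paying k cents, return coins paying k + 1 cents."""
    if threes >= 1:
        # Replace one 3 cent coin by a 4 cent coin.
        return threes - 1, fours + 1
    if fours >= 2:
        # Replace two 4 cent coins by three 3 cent coins.
        return threes + 3, fours - 2
    raise ValueError("no replacement available; the amount is too small")

def payments_up_to(limit):
    """Map each amount from 6 to limit to a pair (number of 3s, number of 4s)."""
    payments = {6: (2, 0)}  # base case: 6 = 3 + 3
    for k in range(6, limit):
        payments[k + 1] = next_payment(*payments[k])
    return payments

for amount, (threes, fours) in payments_up_to(12).items():
    print(amount, "=", threes, "x 3 +", fours, "x 4")
```

The `ValueError` branch is never reached from the base case 6, which mirrors the observation that a payment using only 4 cent coins must contain at least two of them.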

241.

(a) $z^2 - z + 1 = 0$, so $z = \frac{1 \pm \sqrt{3}\,i}{2}$ (these are the two primitive sixth roots of unity).

(the two primitive cube roots of unity), and

(b) , so (repeated root). and .

(c) (d) , so .

As soon as one starts calculating z2 and , it becomes clear that it is time to p-a-u-s-e and think.

so whenever is an integer,

is also an integer.

(e) Let $P(n)$ be the statement:

“if z has the property that is an integer, then is also an integer for all m, ”.

• and are clearly both true; and was proved in part (d) above.

• Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k:

“if z has the property that is an integer, then is also an integer for all m, ”.

We wish to prove that $P(k+1)$ must then be true.

If is an integer, then, by ,

“ is also an integer for all m, ”.

So to prove that $P(k+1)$ holds, we only need to show that

“ is an integer”.

By the Binomial Theorem:

The LHS is an integer (since is an integer), and (by ) every term on the RHS is an integer except possibly the first. Hence the first term is the difference of two integers, so must also be an integer.

Hence $P(k+1)$ is true.

If we combine these two bullet points, we have proved that “ holds for all ”. QED

Note: If is even, the expansion of has an odd number of terms, so the RHS of the above re-grouped expansion ends with the term , which is also an integer.
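For a quick numerical sanity check of Problem 241, one can take a different route from the Binomial Theorem argument above. Assuming (as the roots of unity in part (a) suggest) that the quantities involved are $z + \frac{1}{z}$ and $z^m + \frac{1}{z^m}$, the identity $\left(z + \frac{1}{z}\right)\left(z^m + \frac{1}{z^m}\right) = \left(z^{m+1} + \frac{1}{z^{m+1}}\right) + \left(z^{m-1} + \frac{1}{z^{m-1}}\right)$ gives a two-term recurrence. The Python sketch below (function names are hypothetical) computes the values from that recurrence and allows a comparison with floating-point values obtained from a root of $z^2 - az + 1 = 0$.

```python
import cmath

def power_sums(a, count):
    """Return [s_0, ..., s_count] with s_m = z^m + 1/z^m, given a = z + 1/z."""
    sums = [2, a]  # s_0 = 2 and s_1 = a
    for m in range(1, count):
        sums.append(a * sums[m] - sums[m - 1])  # s_{m+1} = a*s_m - s_{m-1}
    return sums[: count + 1]

def direct_value(a, m):
    """Compute z^m + 1/z^m in floating point from a root of z^2 - a*z + 1 = 0."""
    z = (a + cmath.sqrt(a * a - 4)) / 2
    return z**m + 1 / z**m

for a in (1, 2, 3):
    print(a, power_sums(a, 6))
```

Since the recurrence only adds and multiplies integers, every value it produces is an integer whenever a is; this is an alternative argument, not the one used in the solution above.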

242.

Note: In the solution to Problem 241 we included the condition on z as part of the statement $P(n)$.

In Problem 242 the result to be proved has a similar background hypothesis – “Let p be a prime number”. It may make the induction clearer if, as in the statement of the Problem, this hypothesis is stated before starting the induction proof.

Let p be any prime number. We let $P(n)$ be the statement:

“$n^p - n$ is divisible by p”.

• is true (since , which is divisible by p).

• Suppose that is true for some particular (unspecified) ; that is, we know that, for this particular k:

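“$k^p - k$ is divisible by p”.

We wish to prove that $P(k+1)$ – that is,

“$(k+1)^p - (k+1)$ is divisible by p”

– must then be true. Using the Binomial Theorem again we see that

$$(k+1)^p - (k+1) = \left(k^p - k\right) + \left[\binom{p}{1}k^{p-1} + \binom{p}{2}k^{p-2} + \cdots + \binom{p}{p-1}k\right].$$

By $P(k)$, the first bracket on the RHS is divisible by p; and in each of the other terms each of the binomial coefficients $\binom{p}{r}$, $1 \leq r \leq p-1$,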

• is an integer, and

• has a factor “p” in the numerator and no such factor in the denominator.

Hence each term in the second bracket is a multiple of p. So the RHS (and hence the LHS) is divisible by p.

Hence $P(k+1)$ is true.

If we combine these two bullet points, we have proved that “ holds for all ”. QED
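The two facts used in the induction step can be checked numerically for small cases. This Python sketch assumes that the statement being proved is that $n^p - n$ is divisible by p (which is what the binomial argument above indicates); the helper `is_prime` is a naive illustrative test and is not part of the solution.

```python
from math import comb

def is_prime(m):
    """Naive primality test by trial division (adequate for small m)."""
    return m >= 2 and all(m % d != 0 for d in range(2, int(m**0.5) + 1))

def middle_coefficients_divisible(p):
    """Check that p divides C(p, r) for every r with 1 <= r <= p - 1."""
    return all(comb(p, r) % p == 0 for r in range(1, p))

def power_difference_divisible(p, limit):
    """Check that p divides n^p - n for every n with 1 <= n <= limit."""
    return all((n**p - n) % p == 0 for n in range(1, limit + 1))

for p in (m for m in range(2, 30) if is_prime(m)):
    print(p, middle_coefficients_divisible(p), power_difference_divisible(p, 50))

# For a composite modulus the binomial coefficients need not all be multiples:
print(4, middle_coefficients_divisible(4))  # C(4, 2) = 6 is not divisible by 4
```

The composite case shows where the primality of p is used: the factor p in the numerator of the binomial coefficient can be cancelled by the denominator only when p is not prime.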

243.

This is a geometric series with first term and common ratio , and so has sum

244.

(a) Each person receives in total:

(here ).

(b) You have

(here , ); I have

(here , ).

245. The trains are 120 km apart, and the fly travels at 50 km/h towards Train B, which is initially 120 km away and travelling at 30 km/h.

The relative speed of the fly and Train B is 80 km/h, so it takes $\frac{120}{80} = \frac{3}{2}$ hours before they meet. In this time Train A and Train B have each travelled 45 km, so they are now 30 km apart. The fly then turns right round and flies back to Train A.

The relative speed of the fly and Train A is then also 80 km/h, so it takes just $\frac{30}{80} = \frac{3}{8}$ hours (or 22.5 minutes) for the fly to return to Train A. Train A and Train B have each travelled $\frac{45}{4}$ km in this time, so they are now $\frac{15}{2}$ km apart. The fly then turns round and flies straight back to Train B.

Train B is $\frac{15}{2}$ km away and the relative speed of the fly and Train B is again 80 km/h, so the journey takes $\frac{3}{32}$ hours (or 5.625 minutes).
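The leg-by-leg bookkeeping can be mechanised. The sketch below (a Python illustration with hypothetical names, using exact fractions) repeats the same calculation: on each leg the fly and the oncoming train close at 80 km/h, while the two trains close on each other at 60 km/h.

```python
from fractions import Fraction

FLY_SPEED = 50     # km/h
TRAIN_SPEED = 30   # km/h, the same for both trains
INITIAL_GAP = 120  # km

def fly_legs(count):
    """Return the durations (in hours) of the fly's first `count` legs."""
    gap = Fraction(INITIAL_GAP)
    durations = []
    for _ in range(count):
        # The fly and the train it is heading for approach at 50 + 30 km/h.
        time = gap / (FLY_SPEED + TRAIN_SPEED)
        durations.append(time)
        # Meanwhile the two trains approach each other at 30 + 30 km/h.
        gap -= 2 * TRAIN_SPEED * time
    return durations

legs = fly_legs(3)
print([str(t) for t in legs])         # durations in hours
print([float(t * 60) for t in legs])  # the same durations in minutes
```

Each leg lasts one quarter as long as the one before, since the gap between the trains shrinks by the factor $1 - \frac{60}{80} = \frac{1}{4}$ on every leg.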

Continuing in this way, we see that the fly takes