1. Introduction

The Evidence Crisis and the Evidence Revolution

© 2022 William J. Sutherland, CC BY-NC 4.0 https://doi.org/10.11647/OBP.0321.01

Alongside many examples of highly effective practice, there are numerous studies showing ineffective practice. These studies suggest substantial improvements in efficiency are possible, meaning considerably more could be achieved for the same budget. Other fields, such as medicine and aviation, have shown how large improvements can be achieved through collecting and applying evidence effectively. A number of barriers hinder more effective practice, such as the challenges of accessing appropriate information and evidence complacency, where evidence is not sought despite being available. This chapter thus outlines the problems: the subsequent chapters provide the solutions.

1 Conservation Science Group, Department of Zoology, University of Cambridge, The David Attenborough Building, Pembroke Street, Cambridge, UK

2 Biosecurity Research Initiative at St Catharine’s (BioRISC), St Catharine’s College, Cambridge, UK

1.1 The Aim of the Book

This book seeks to convince policy makers and practitioners that there are considerable problems with how decisions are usually made, and that these need to be taken seriously. It outlines a range of straightforward practical solutions that can improve the use of evidence, improve the decision-making process and then embed evidence into practice. The resulting increase in efficiency could either reduce expenditure or allow greater impact with the same budget, and could well make the field more attractive to fund. The overall aim is that better, and more, practice will result in a better planet.

1.2 The Evidence Crisis

There are innumerable success stories in which problems have been revealed and solved through a mix of science, policy and practice. These include many stunning protected areas (with Yellowstone in the USA as a pioneering example); improved species management, meaning that numerous species that would otherwise almost certainly have gone extinct have been restored (Bolam et al., 2021); numerous effective reintroduction projects (such as the reintroduction of beavers to many locations); the reduction of acid rain damage to forests and lakes through regulations limiting sulphur dioxide and nitrogen oxide emissions; the Montreal Protocol, which restricted the use of various chemicals that degrade the ozone layer; the banning of dichlorodiphenyltrichloroethane (DDT) and related pesticides due to evidence of severe impacts on wildlife; the spread of many once severely persecuted species, such as lynx, following reductions in persecution; and the recovery of the great whales from overexploitation.

Alongside these wonderful examples of effective use of evidence, there are many examples (Box 1.1) of poor use of evidence and poor decision-making, to the detriment of both nature and people.

Although there are clearly substantial gains to be made by adopting effective practice, various studies (e.g. Pullin et al., 2004; Sutherland et al., 2004; Bayliss et al., 2011; Young and Van Aarde, 2011; Cvitanovic et al., 2014) have shown that conservation managers tend not to use scientific evidence to support their decision making. Instead, they mainly rely on personal experience, anecdotes and the advice of colleagues. Walsh et al. (2015) asked 92 conservation managers, predominantly from Australia, New Zealand and the UK, whose work involved reducing predation on threatened birds, for their opinions on 28 management techniques for reducing this problem. Managers gave their opinions before and after being given a summary of the literature on the interventions’ effectiveness. On average, each participant changed their likelihood of implementing 45% of the interventions after reading the evidence. Unsurprisingly, they switched towards actions shown to be effective and away from ineffective ones. This shows how enabling practitioners to access evidence can considerably shift their preferred decisions towards more effective practice.
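The 45% figure is a per-participant share averaged across participants. A minimal sketch of that calculation, assuming each manager rated every intervention on a numeric likelihood scale before and after reading the evidence summary; the 1–5 scale, the ratings and the function names are hypothetical illustrations, not Walsh et al.’s (2015) data or analysis.

```python
from statistics import mean


def share_changed(before: list[int], after: list[int]) -> float:
    """Fraction of interventions whose likelihood rating changed for one participant."""
    if len(before) != len(after):
        raise ValueError("before and after must rate the same interventions")
    changed = sum(1 for b, a in zip(before, after) if b != a)
    return changed / len(before)


def mean_share_changed(ratings: list[tuple[list[int], list[int]]]) -> float:
    """Average the per-participant share across all participants."""
    return mean(share_changed(before, after) for before, after in ratings)


# Hypothetical ratings (1 = very unlikely ... 5 = very likely) for 4 interventions
ratings = [
    ([3, 4, 2, 5], [3, 2, 2, 5]),  # 1 of 4 changed -> 0.25
    ([1, 3, 3, 4], [2, 3, 5, 1]),  # 3 of 4 changed -> 0.75
]
print(mean_share_changed(ratings))  # 0.5
```

Applied to the study’s 28 interventions, an average share of 45% corresponds to roughly 0.45 × 28 ≈ 12.6 interventions per manager.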

In his PhD, Andrea Santangeli (Santangeli, 2013) experimentally tested the effectiveness of ten conservation measures for protecting birds of prey, measured by the resulting production of fledglings. Six were beneficial, two made no difference and two were detrimental. In a subsequent paper, Santangeli and Sutherland (2017) calculated that a programme carrying out only the effective measures would save about €78,854 (or 21.9% of the budget) annually. They then calculated the return on this research: given an initial investment of about €156,211 for the PhD thesis, the financial return over a 10-year period ranges between 292% and 326%, depending on how costs are estimated. This shows that research on the effectiveness of actions can be hugely cost-effective.
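The figures above can be cross-checked with simple arithmetic. A minimal sketch using only the numbers quoted in the text: if €78,854 is 21.9% of the annual budget, the implied budget is roughly €360,000, and 21.9% rounds to the 22% shown in Table 1.1. It does not attempt to reproduce the 292–326% return, which depends on how Santangeli and Sutherland (2017) estimated costs.

```python
# Figures quoted in the text (Santangeli and Sutherland, 2017)
annual_saving_eur = 78_854
saving_share = 0.219  # saving as a fraction of the annual budget

# Back out the annual budget implied by the saving and its share
implied_budget_eur = annual_saving_eur / saving_share

print(f"Implied annual budget: about EUR {implied_budget_eur:,.0f}")  # about EUR 360,064
print(f"Saving as a rounded percentage: {saving_share:.0%}")          # 22%
```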

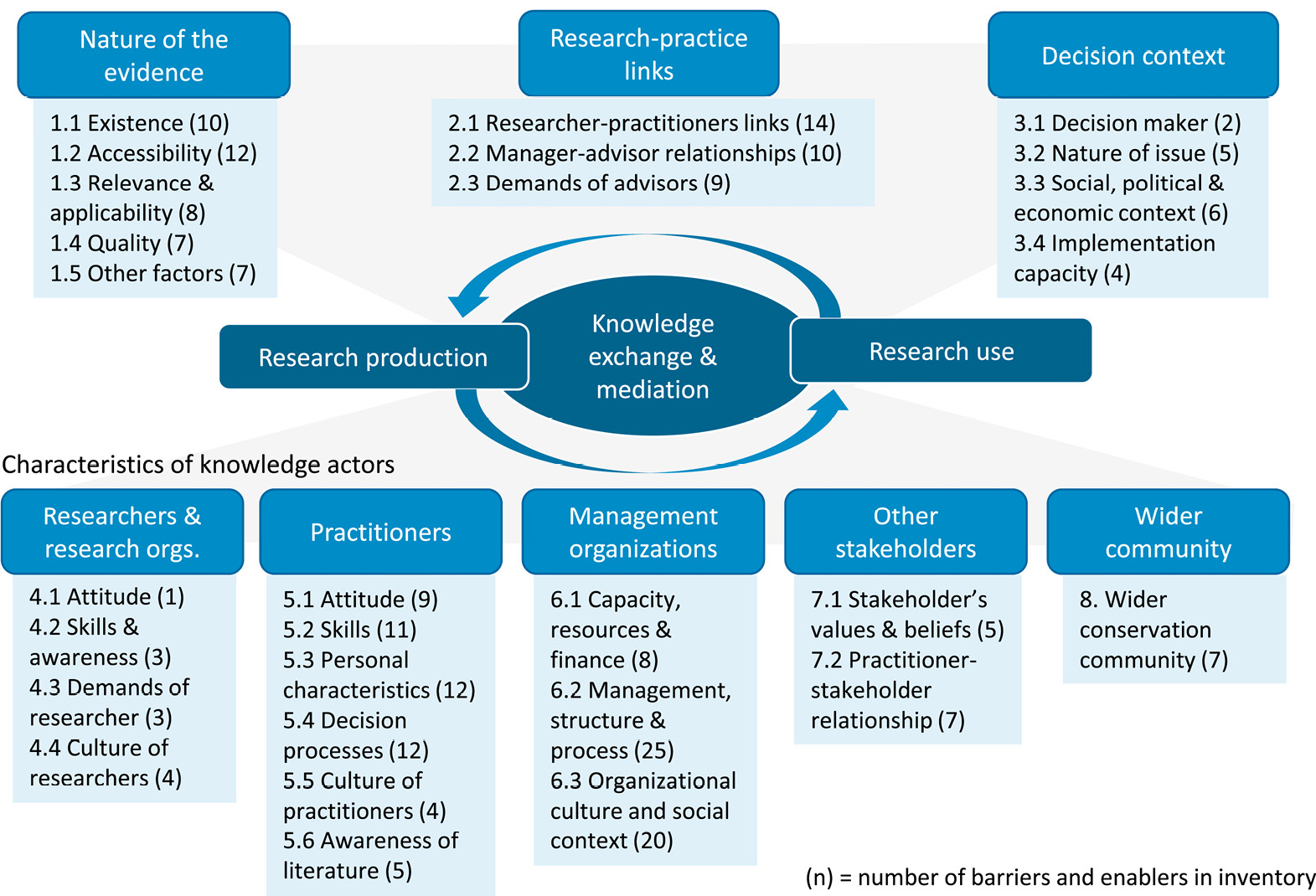

1.3 Why is Poor Decision Making So Common?

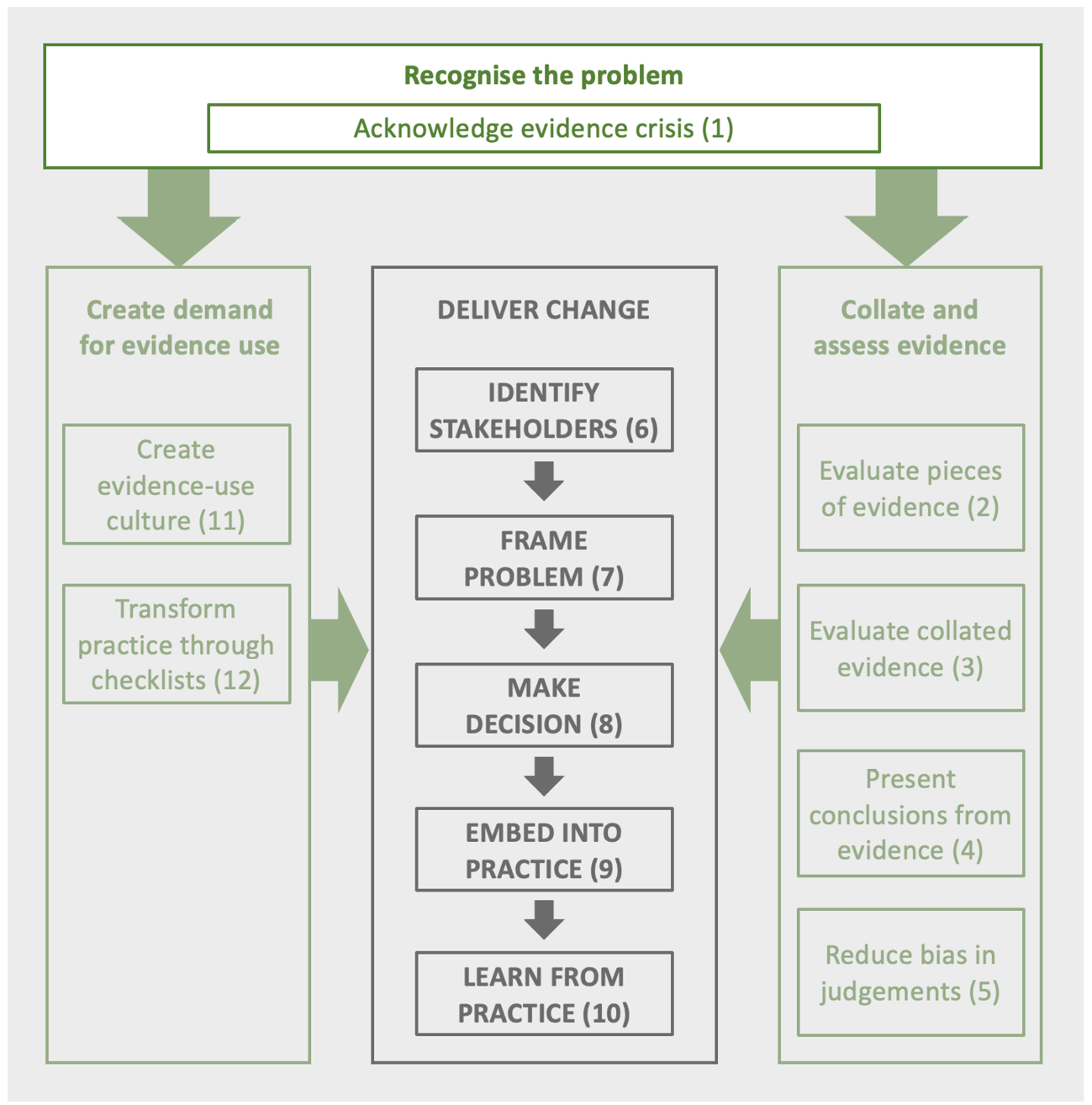

There are a host of practical reasons that hinder the use of evidence in decision making. A lack of available evidence can be a significant issue, because (1) the subject is obscure or has not yet been researched, so relevant information is lacking, (2) the key information is behind a paywall, or (3) the evidence has not been compiled for the particular issue, making it difficult to find or use. However, there is also an extensive range of further challenges to using evidence. Walsh et al. (2019) collated a comprehensive inventory of 230 factors that facilitate or limit the use of scientific evidence in conservation management decisions (Figure 1.1).

Figure 1.1 Taxonomy of 230 barriers and enablers to using scientific evidence in conservation management and planning decisions. (Source: Walsh et al., 2019, CC-BY-4.0)

However, alongside these many understandable reasons, there is also the widespread failure to use evidence even when it is easily available and practical to do so. There seem to be four main reasons for such poor decision making.

Conflict of interest

Conflicts of interest include cases of corruption for financial or political gain. For example, the oil industry was aware of climate change yet undermined the crucial science (Oreskes and Conway, 2010). More commonly, personal values, interests and preferences (such as favouring a particular project or individual, or disliking a particular outcome) can influence decisions; such motivational bias can lead to poor decisions.

System 1 thinking

The Nobel Prize-winning psychologist Daniel Kahneman, in his book Thinking, Fast and Slow (2011), distinguished between two processes of thinking. System 1 is ‘fast, instinctive and emotional’ and well suited to the majority of quick, daily decisions. System 2 is ‘slower, more deliberative and more logical’ and suited to the occasional serious challenge. Relying on System 1 thinking for complex decisions, instead of slow, careful, analytical System 2 thinking, can lead to poor decisions.

Overconfidence effect

The overconfidence effect is unwarranted confidence in one’s own ability. It can be illustrated by three phenomena: (1) people routinely overestimate their actual performance (e.g. the number of answers they got right); (2) people routinely overestimate their performance relative to others (e.g. most believing they are in the top 10%); (3) when asked to give a range within which the answer lies, people give unjustifiably narrow ranges, so the correct answer regularly lies outside the stated range (Moore and Healy, 2008). These phenomena have been shown in repeated studies, including among people identified as experts (Burgman, 2015).
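The third phenomenon is typically measured by asking for ranges at a stated confidence level and counting how often the true value falls inside them. A minimal sketch, assuming 90% intervals; the questions, answers and names are hypothetical and are not taken from Moore and Healy (2008). A well-calibrated respondent’s 90% intervals would contain the true value about 90% of the time, so a much lower hit rate signals overconfidence.

```python
from dataclasses import dataclass


@dataclass
class IntervalEstimate:
    """One respondent's stated range for a question, plus the true answer."""
    low: float
    high: float
    truth: float

    def contains_truth(self) -> bool:
        return self.low <= self.truth <= self.high


def hit_rate(estimates: list[IntervalEstimate]) -> float:
    """Proportion of stated intervals that contain the true value."""
    return sum(e.contains_truth() for e in estimates) / len(estimates)


# Hypothetical 90% intervals from a single respondent
answers = [
    IntervalEstimate(low=100, high=150, truth=170),
    IntervalEstimate(low=20, high=30, truth=25),
    IntervalEstimate(low=1_000, high=1_200, truth=900),
    IntervalEstimate(low=5, high=8, truth=12),
    IntervalEstimate(low=60, high=70, truth=65),
]
stated_confidence = 0.9
observed = hit_rate(answers)
print(f"stated {stated_confidence:.0%}, observed {observed:.0%}")  # stated 90%, observed 40%
```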

Evidence complacency

Almost 20 years ago, Sutherland et al. (2004) identified the serious challenges facing conservation practitioners wishing to read the scientific literature. These included journal paywalls, the lack of effective search engines and the difficulty of extracting messages from scientific papers, resulting in practitioners making limited use of the available science. Since then, more powerful search engines, open access papers and the development of evidence-based conservation have reduced these barriers, yet some of the challenges shown in Figure 1.1 remain. Although some conservation organisations produce and use excellent science, Sutherland and Wordley (2017) suggest that a culture of ‘evidence complacency’ remains in many areas of policy and practice. They used this term to describe a way of working in which evidence, despite being available, is not sought or used to make decisions, and the impact of actions is not tested.

As illustrated by the persistence of mistaken guidance to place babies on their fronts to sleep (see Section 1.4), ideas can become standard practice without consideration of the evidence or without a process of testing and evaluation. Another example is bat bridges (structures intended to encourage bats to fly higher and so reduce the risk of vehicle collisions), which were shown to be ineffective (Berthinussen and Altringham, 2012) yet continued to be installed at high financial cost. Such failures to test ideas or search the literature waste money and effort, and also undermine confidence in expertise. Innovation is important, but novel ideas require testing before widespread adoption.

This book describes processes that can be adopted to stop conflicts of interest, System 1 thinking, overconfidence and evidence complacency from undermining effective decision-making to the detriment of society.

1.4 The Evidence Revolution

We define evidence as ‘relevant information used to assess one or more assumptions related to a question of interest’ (modified from Salafsky et al., 2019). This includes published studies as well as reports, observations, citizen science and local knowledge.

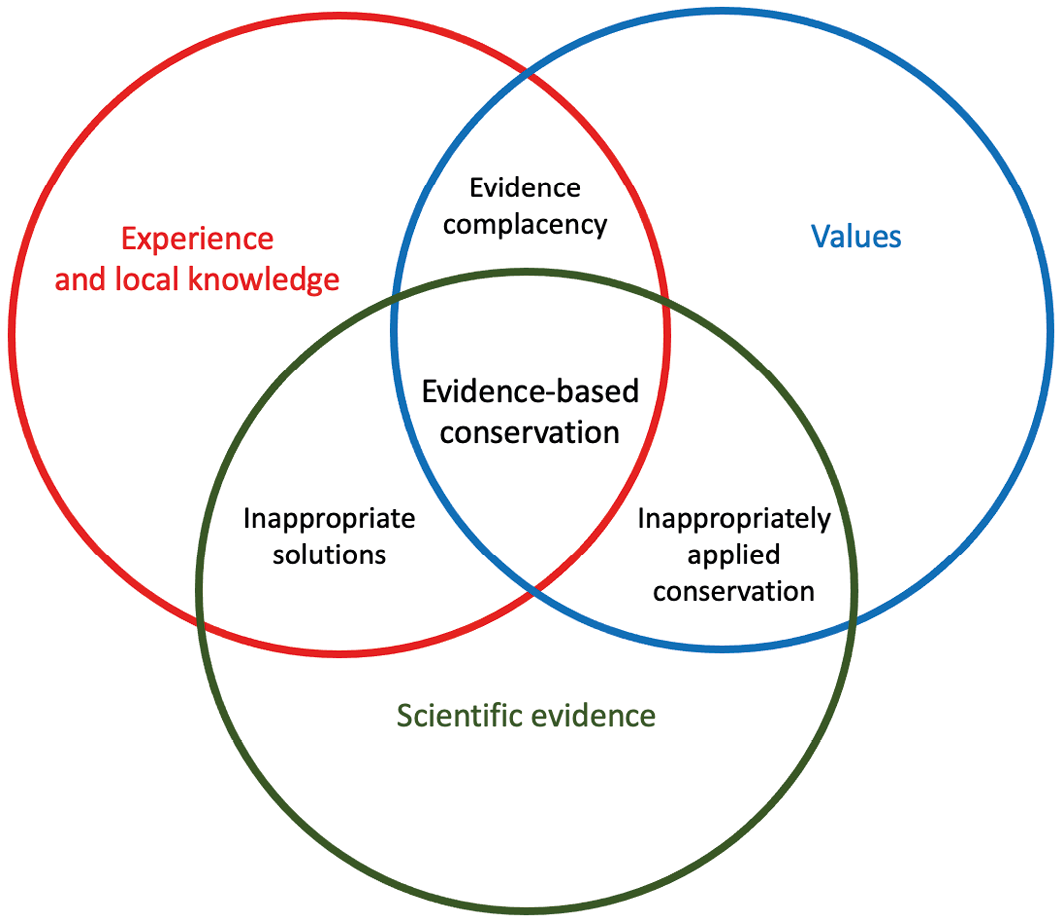

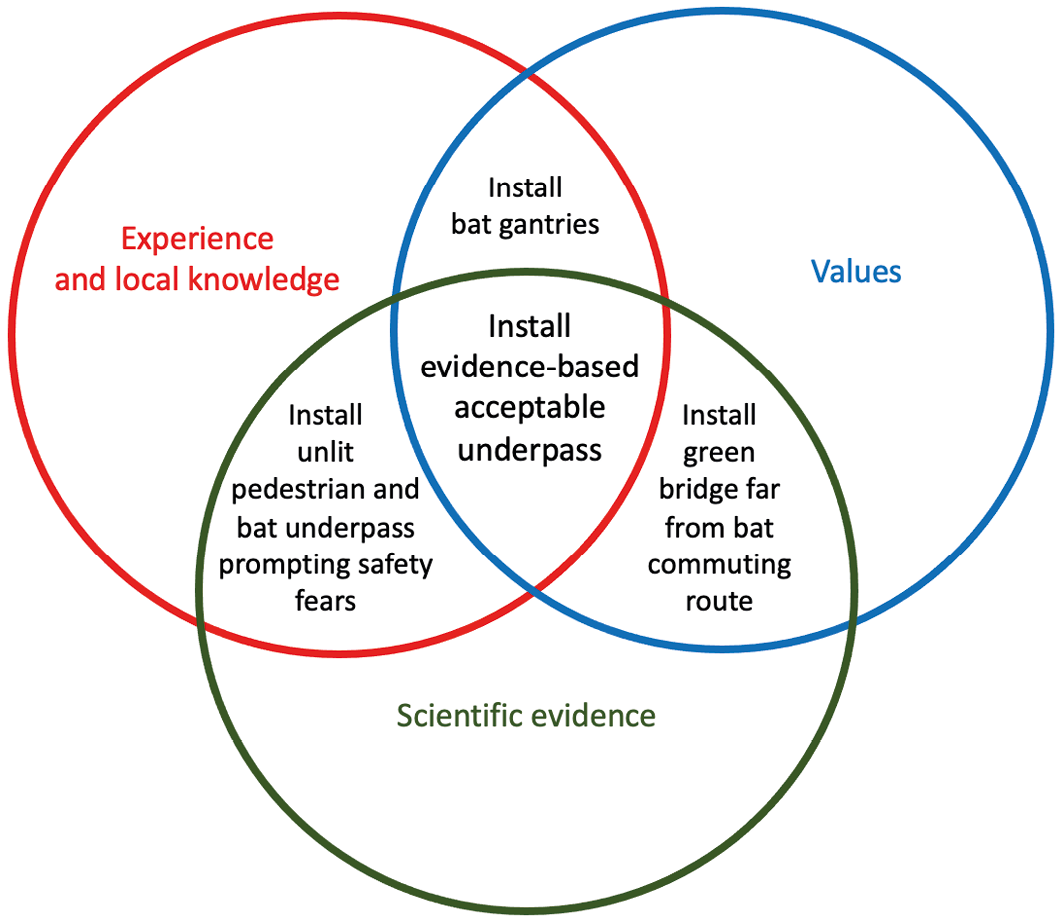

Sackett et al. (1996) described evidence-based clinical decision making as combining research evidence with clinical expertise, alongside consideration of the preferences of the patient. Clinical expertise is the proficiency and judgement that individual clinicians acquire through clinical experience and clinical practice (Satterfield et al., 2009). Figure 1.2 similarly shows how scientific evidence, values, and experience and local knowledge can be combined and how a combination of all three is usually essential.

Figure 1.2 The role of evidence in evidence-based conservation, where values incorporate ethical, social, political and economic concerns. Top: how the components interact. Bottom: an example of how this might be applied to the problem of bats being killed when flying across a road (Source: author, with thanks to Claire Wordley).

The idea of systematically using evidence has a long history. Box 1.2 outlines some components of this history of evidence use in medicine and conservation.

Medicine provides the classic example of policy and practice being transformed by evidence. Seventeen million parents owned and followed a copy of Spock’s (1946) book, The Common Sense Book of Baby and Child Care. One key issue was whether to place babies on their front or back whilst sleeping. Spock recommended placing the baby on its front, for the common-sense reason of reducing the risk of choking following vomiting; front sleeping was routinely recommended in books up to 1988 (Gilbert et al., 2005). Gilbert and colleagues’ 2005 analysis showed that by 1970 the published data indicated a statistically significant increase in the risk of sudden infant death syndrome for front-sleeping babies. They concluded that, had the evidence been reviewed and acted upon in 1970, over 10,000 infant deaths might have been prevented in the UK, with many more globally. Surely few things are more important than preventing unnecessary infant deaths, yet the research was not converted into practice. A series of evidence reviews have similarly shown where common-sense practice and the evidence diverged (enemas for women in labour [Cuervo et al., 1999], bed rest after heart attacks [Sackett et al., 1996], and giving corticosteroids to women about to have a premature baby to help the baby breathe [Liggins and Howie, 1972]). Medicine has since been transformed, with multiple mechanisms now in place to reduce the chance of practising actions known to be ineffective or damaging.

But is evidence-based practice actually more effective? Emparanza et al. (2015) took advantage of the creation of an evidence-based practice unit in a hospital in Spain in 2003. They compared the outcomes of patients before and after the unit was created, and with those in the rest of the hospital, where practice remained unchanged. Patients in the evidence-based unit had a significantly lower risk of death (6.27% vs 7.75%) and a shorter length of stay (6.01 vs 8.46 days) than those receiving standard practice. These are relative reductions of 19% in deaths and 29% in length of stay.
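The 19% and 29% figures are relative reductions, not differences in percentage points: the absolute difference in mortality is 7.75 - 6.27 = 1.48 percentage points. A minimal sketch reproducing the relative figures from the values reported above; the function name is illustrative.

```python
def relative_reduction(treated: float, control: float) -> float:
    """Reduction of the treated value, expressed as a fraction of the control value."""
    return (control - treated) / control


# Values reported by Emparanza et al. (2015)
mortality = relative_reduction(treated=6.27, control=7.75)  # % of patients who died
stay = relative_reduction(treated=6.01, control=8.46)       # length of stay in days

print(f"Mortality: {mortality:.0%} lower")    # Mortality: 19% lower
print(f"Length of stay: {stay:.0%} shorter")  # Length of stay: 29% shorter
```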

Other fields show how improvements in decision making can be made. Aviation has an impressive safety record: the total number of annual fatalities has fallen five- to tenfold since the 1970s (Aviation Safety Network database https://aviation-safety.net/database) despite enormous increases in the number of passengers carried over that time (Gössling and Humpe, 2020). Each aircraft disaster is followed by review, reflection and, if necessary, changes in practice. As an early example, after a series of B-17 crashes in 1942, Alphonso Chapanis interviewed pilots who had survived such crashes. He identified that pilots were confusing the adjacent, similar-looking landing gear and wing flap levers, sometimes with disastrous consequences (Syed, 2015). Adding a wheel-shaped symbol to the landing gear lever and a wedge-shaped symbol to the wing flap lever overcame the problem, and this became routine.

As another example, the manager of the low-budget Oakland Athletics baseball team favoured statistical analysis over the opinions of scouts when selecting players. Following the team’s 20-game winning streak, this evidence-based approach has become universal across baseball teams (Lewis, 2003).

Whilst the conservation community could review previous problematic programmes, such as the Common Agricultural Policy, the Indian tree-planting project or the various unsuccessful mangrove planting schemes (Box 1.1), and incorporate the lessons into future plans, this feedback loop does not form a routine part of current processes, leading to the perpetuation of mistakes. Transformations similar to that in medicine are possible (and crucial) in other fields. As described in Chapters 2 and 3, evidence collation for conservation is being delivered at scale, and a number of conservation organisations have restructured themselves around the use of evidence in delivering action (Chapter 11).

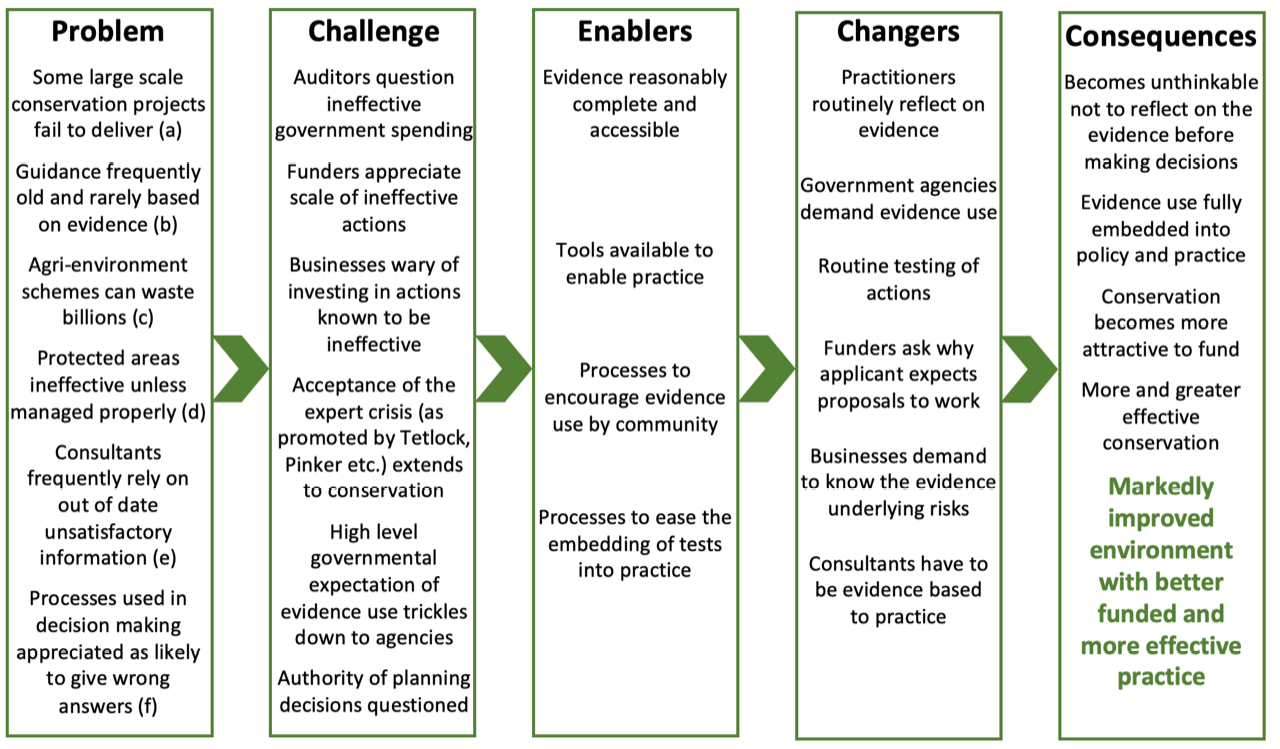

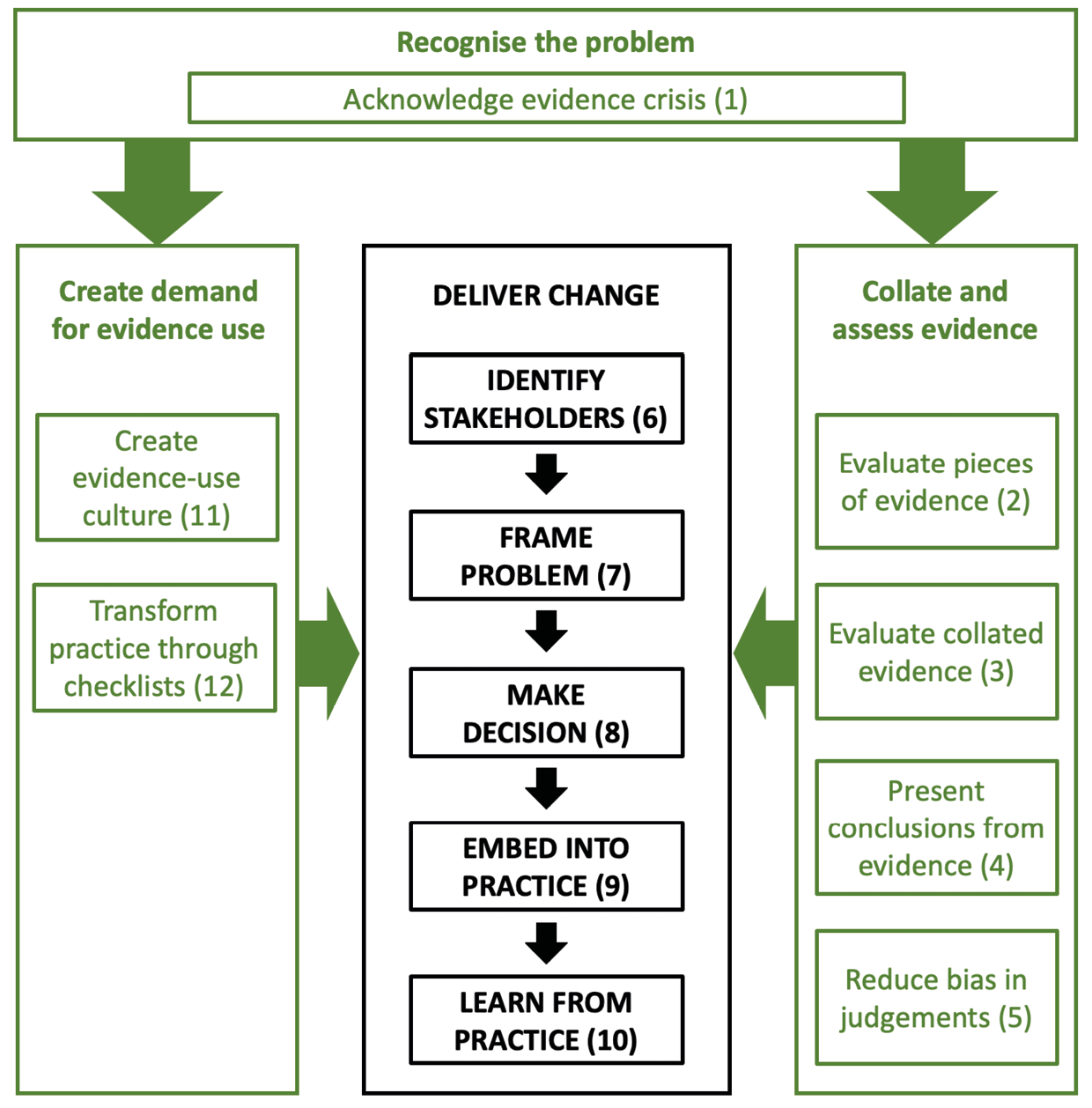

The need for evidence is being increasingly recognised. Figure 1.3 is an attempt to bring together the challenges and options for change. The idea is that crises, such as evidence failures, drive demands for change. This book describes how to deliver tools and approaches that may change how practitioners, policy makers, businesses, and funders work, leading to an improved world.

Figure 1.3 How the various evidence crises are likely to create demands for change that the enablers described in this book could help deliver, resulting in a series of improvements and a markedly better planet. References: (a) Coleman et al. (2021); (b) Downey et al. (2022); (c) Dicks et al. (2014); Pe’er et al. (2020); (d) European Court of Auditors (2020); Giakoumi et al. (2018); Wauchope et al. (2022); (e) Hunter et al. (2021); Laurance (2022); (f) Burgman (2015); Kahneman et al. (2021); Kahneman (2011); Pinker (2021); Tetlock (2009). (Source: author)

1.5 The Case for Adopting Evidence Use

The main argument for increasing the use of evidence is that it will improve practice, resulting in better outcomes and reduced costs. It is also likely that improving effectiveness will attract or secure further funding (Box 1.3).

1.5.1 The potential gains from transforming conservation

This chapter outlines what seems to be an overwhelming case for taking evidence use much more seriously. This includes billions of euros, dollars and pounds spent on practices known to be ineffective, numerous studies showing that evidence is rarely used, and studies showing that guidance is typically out of date and poorly evidence-based. Table 1.1 lists the studies, reviewed in Section 1.2, that test the gains from improving efficiency, showing a clear opportunity for improvement by following good practice.

Subsequent chapters will show similar, equally serious problems in other stages of the decision-making process. For example: it is rare to start by considering the full range of possible options; experts are usually used in ways that extensive research shows are likely to enhance bias and produce wrong answers; decision-making rarely follows processes known to be effective; costs are usually not presented in a way that makes them comparable; other sources of knowledge are typically used haphazardly; and there is rarely effective learning, including from failures.

Table 1.1 Summary of studies looking at inefficiencies or potential gains in investment from using evidence.

| Action | Percentage change in effectiveness | Reference |
|---|---|---|
| Evidence-based practice unit in a Spanish hospital, compared with standard practice | 19% reduction in deaths; 29% reduction in hospital stays | Emparanza et al. (2015) |
| Marine protected areas | 29% not positively influencing fish populations | Gill et al. (2017) |
| Agri-environment schemes | Compared to controls, 6% of studies showed decreases in species richness or abundance, 17% showed increases for some species and decreases for others, and 23% showed no change at all in response to agri-environment schemes (46% in total); 54% showed increases. There has been no increase in the effectiveness of measures over time. | Kleijn and Sutherland (2003); Batáry et al. (2015) |
| Experimental tests of the effectiveness of 10 conservation measures for protecting birds of prey | Carrying out only the effective measures could achieve the same outcomes for 22% less expense | Santangeli and Sutherland (2017) |
| Estimated effectiveness of orangutan (Pongo spp.) conservation measures in relation to investment (US$1.2 billion) | Some actions (habitat protection; patrolling activities) 300–400% more cost-effective than others (habitat restoration, rescue and rehabilitation, and translocation) | Santika et al. (2022) |
| Review of the papers in Conservation Evidence Journal that test the effectiveness of actions | Of the applied interventions tested, 31% could be considered unsuccessful | Spooner et al. (2015) |
| Protected areas (waterbird populations) | 27% of all populations positively impacted by protected areas; 21% negatively impacted; 48% no detectable impact | Wauchope et al. (2022) |

1.6 The Inefficiency Paradox

The paradox described in this chapter is that society could relatively easily be more effective and save considerable amounts of money, but fails to do so. Identifying solutions to this paradox is central to this book. It might therefore seem that the key chapters are those describing evidence assessment, expert elicitation or structured decision-making. In fact, they are the chapters describing how to achieve the cultural shift in which evidence becomes embedded in processes and organisational cultures.

Funders could achieve greater impact, governments could deliver more (or spend less), organisations could be more ambitious, consultants could be more effective, and businesses could reduce harm and increase benefits. Some pioneer organisations are taking evidence use seriously (see Chapter 11): they will lead the way in the necessary revolution.

1.7 Transforming Decision Making

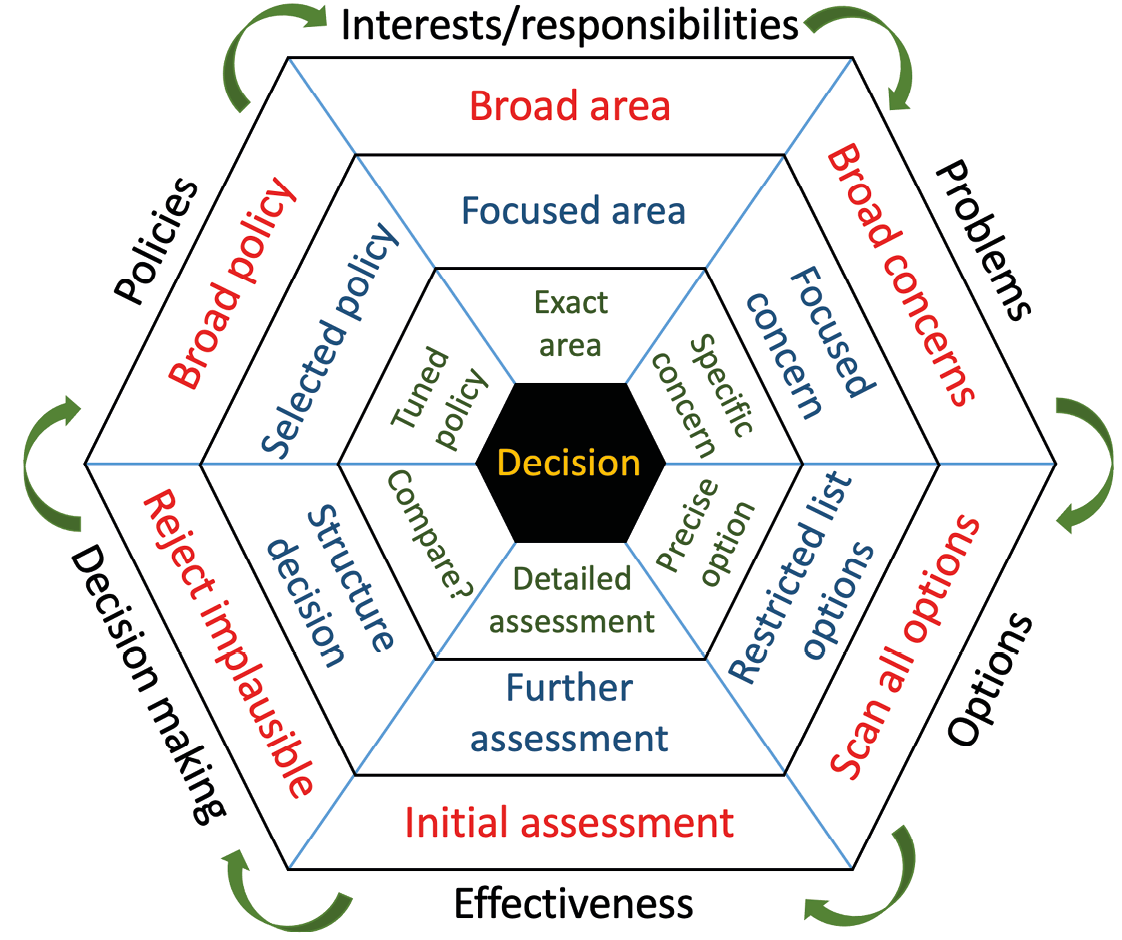

It is conventional to illustrate decision making as a policy cycle with a logical flow of steps from identifying problems to determining the policy; it is, however, generally accepted that policy making rarely, if ever, proceeds this way (Owens, 2015). As shown in Figure 1.4, in practice there is rarely a single assessment of each stage (i.e. as if going once round the outer loop). Instead, decisions may start at any of the stages, and as the decision is made each stage becomes more focused, spiralling inwards towards a final decision. Evidence is embedded in many of these stages.

Figure 1.4 The Policy Hexagon. The logic is identifying responsibilities, identifying problems, finding options, considering effectiveness, and making a decision leading to the implementation of a policy or practice. (Source: author)

Within this framework, the decision-making process is complex and does not always start at the same stage. For example, it may start with considering a responsibility, such as what to do to protect a threatened species on your land; with considering a threat and the range of options; or sometimes with someone promoting a solution, which raises the need to consider whether it is appropriate. Table 1.2 shows how thinking usually progresses from the general to the specific as the decision becomes articulated.

Table 1.2 How the components of decision making shown in Figure 1.4 become more precise as thinking moves inwards around the hexagon towards making a decision.

The approaches described above seem to apply equally to other areas of practice. Table 1.3 lists a set of ten questions for identifying the extent of good practice.

Table 1.3 Suggested questions for determining the extent of good practice.

*or, ‘how do they deal with the issue in the UK?’ if in Japan

1.8 Structure of the Book

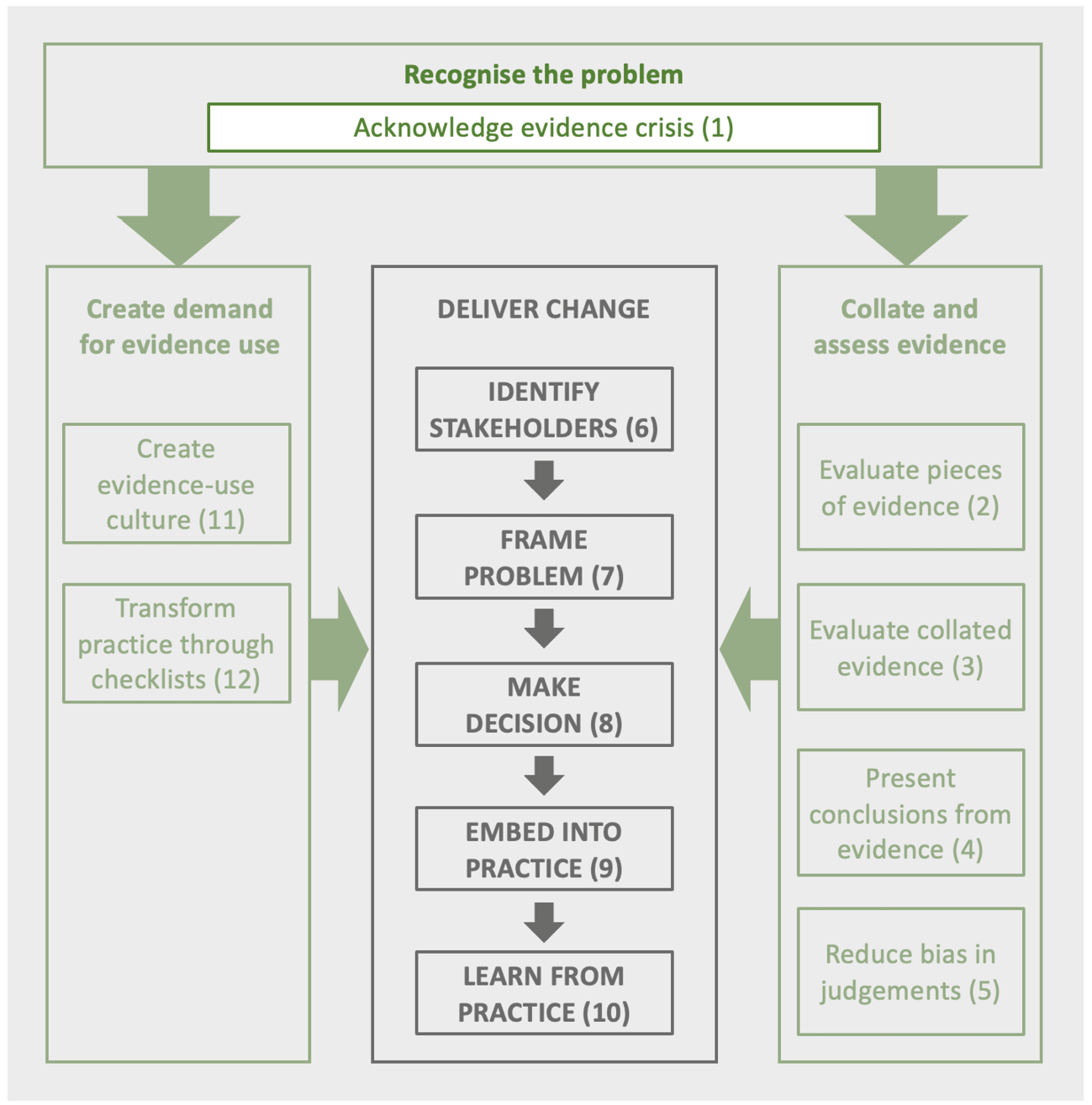

This book brings together the leading experts in the different elements of the process of improving decision making in conservation. The logic of the book follows two elements (presenting evidence and transforming culture) that feed into the decision-making process (Figure 1.5). The idea of using information and experts to make judgements is, of course, routine and unsurprising. The difference sought here is greater rigour, transparency and repeatability.

Figure 1.5 How the book sections and chapters (numbered) link together. The recognition of the evidence crisis leads to both a demand for evidence use (left) and processes for collating and assessing evidence and reducing bias in judgements (right). The central decision-making process running from identifying who should be involved to learning from the decision is driven by the demand for evidence and fed by evidence and judgements. (Source: author)

References

Amano, T., Székely, T., Sandel, B., et al. 2018. Successful conservation of global waterbird populations depends on effective governance. Nature 553: 199–202, https://doi.org/10.1038/nature25139.

Batáry, P., Dicks, L.V., Kleijn, D., et al. 2015. The role of agri-environment schemes in conservation and environmental management. Conservation Biology 29: 1006–16, https://doi.org/10.1111/cobi.12536.

Bayliss, H.R., Wilcox, A., Stewart, G.B., et al. 2011. Does research information meet the needs of stakeholders? Exploring evidence selection in the global management of invasive species. Evidence and Policy 8: 37–56, https://doi.org/10.1332/174426412X620128.

Berthinussen, A. and Altringham, J. 2012. Do bat gantries and underpasses help bats cross roads safely? PLoS ONE 7: e38775, https://doi.org/10.1371/journal.pone.0038775.

Bolam, F.C., Mair, L., Angelico, M., et al. 2021. How many bird and mammal extinctions has recent conservation action prevented? Conservation Letters 14: e12762, https://doi.org/10.1111/conl.12762.

Bown, S.R. 2003. Scurvy: How a Surgeon, a Mariner, and a Gentleman Solved the Greatest Medical Mystery of the Age of Sail (New York: Thomas Dunne Books, St. Martin’s Press).

Broom, D.M. 2021. A method for assessing sustainability, with beef production as an example. Biological Reviews 96: 1836–53, https://doi.org/10.1111/brv.12726.

Brown, B., Fadillah, R., Nurdin, Y., et al. 2014. Case study: Community based ecological mangrove rehabilitation (CBEMR) in Indonesia. SAPIENS 7(2), http://journals.openedition.org/sapiens/1589.

Burgman, M.A. 2015. Trusting Judgements: How to Get the Best out of Experts (Cambridge: Cambridge University Press), https://doi.org/10.1017/CBO9781316282472.

Cochrane, A.L. 1972. Effectiveness and Efficiency: Random Reflections on Health Services (Nuffield Trust), https://www.nuffieldtrust.org.uk/research/effectiveness-and-efficiency-random-reflections-on-health-services.

Coleman, E.A., Schultz, B., Ramprasad, V., et al. 2021. Limited effects of tree planting on forest canopy cover and rural livelihoods in Northern India. Nature Sustainability 4: 997–1004, https://doi.org/10.1038/s41893-021-00761-z.

Cuervo, L.G., Rodriguez, M.N. and Delgado, M.B. 1999. Enemas during labour (Cochrane review). Cochrane Database of Systematic Reviews 4: Article Number CD000330, https://doi.org/10.1002/14651858.CD000330.

Cvitanovic, C., Fulton, C.J., Wilson, S.K., et al. 2014. Utility of primary scientific literature to environmental managers: An international case study on coral-dominated marine protected areas. Ocean and Coastal Management 102: 72–78, https://doi.org/10.1016/j.ocecoaman.2014.09.003.

Dicks, L.V., Hodge, I., Randall, N., et al. 2014. A transparent process for ‘evidence‐informed’ policy making. Conservation Letters 7: 119–25, https://doi.org/10.1111/conl.12046.

Downey, H., Bretagnolle, V., Brick, C., et al. 2022. Principles for the production of evidence-based guidance for conservation actions. Conservation Science and Practice 4: e12663, https://doi.org/10.1111/csp2.12663.

Emparanza, J.I., Cabello, J.B. and Burls, A. 2015. Does evidence-based practice improve patient outcomes? Journal of Evaluation in Clinical Practice 21: 1059–65, https://doi.org/10.1111/jep.12460.

European Court of Auditors. 2020. Special Report 26/2020. Marine Environment: EU Protection is Wide but not Deep (Publications Office of the European Union), https://op.europa.eu/webpub/eca/special-reports/marine-environment-26-2020/en/.

Garrone, M., Emmers, D., Olper, A., et al. 2019. Jobs and agricultural policy: Impact of the common agricultural policy on EU agricultural employment. Food Policy 87: 101744, https://doi.org/10.1016/j.foodpol.2019.101744.

Giakoumi, S., McGowan, J., Mills, M., et al. 2018. Revisiting ‘success’ and ‘failure’ of marine protected areas: A conservation scientist perspective. Frontiers in Marine Science 5: Article 223, https://doi.org/10.3389/fmars.2018.00223.

Gilbert, R., Salanti, G., Harden, M., et al. 2005. Infant sleeping position and the sudden infant death syndrome: systematic review of observational studies and historical review of recommendations from 1940 to 2002. International Journal of Epidemiology 34: 874–87, https://doi.org/10.1093/ije/dyi088.

Gill, D., Mascia, M., Ahmadia, G., et al. 2017. Capacity shortfalls hinder the performance of marine protected areas globally. Nature 543: 665–69, https://doi.org/10.1038/nature21708.

Gössling, S. and Humpe, A. 2020. The global scale, distribution and growth of aviation: Implications for climate change. Global Environmental Change 65: 102194, https://doi.org/10.1016/j.gloenvcha.2020.102194.

Gray, J. 2000. The common agricultural policy and the re-invention of the rural in the European Community. Sociologia Ruralis 40: 30–52, https://doi.org/10.1111/1467-9523.00130.

Guyatt, G., Cairns, J., Churchill, D., et al. 1992. Evidence-based medicine: A new approach to teaching the practice of medicine. JAMA 268: 2420–25, https://doi.org/10.1001/jama.1992.03490170092032.

Hunter, S.B., zu Ermgassen, S.O.S.E., Downey, H., et al. 2021. Evidence shortfalls in the recommendations and guidance underpinning ecological mitigation for infrastructure developments. Ecological Solutions and Evidence 2: e12089, https://doi.org/10.1002/2688-8319.12089.

Kahneman, D. 2011. Thinking, Fast and Slow (New York: Macmillan).

Kahneman, D., Sibony, O. and Sunstein, C.R. 2021. Noise: A Flaw in Human Judgement (London: William Collins).

Kleijn, D., Berendse, F., Smit, R., et al. 2001. Agri-environment schemes do not effectively protect biodiversity in Dutch agricultural landscapes. Nature 413: 723–25, https://doi.org/10.1038/35099540.

Kleijn, D. and Sutherland, W.J. 2003. How effective are European agri-environment schemes in conserving and promoting biodiversity? Journal of Applied Ecology 40: 947–69, https://doi.org/10.1111/j.1365-2664.2003.00868.x.

Kodikara, K.A.S., Mukherjee, N., Jayatissa, L.P., et al. 2017. Have mangrove restoration projects worked? An in-depth study in Sri Lanka. Restoration Ecology 25: 705–16, https://doi.org/10.1111/rec.12492.

Laurance, W.F. 2022. Why environmental impact assessments often fail. Therya 13: 67–72, https://doi.org/10.12933/therya-22-1181.

Lee, S.Y., Hamilton, S., Barbier, E.B., et al. 2019. Better restoration policies are needed to conserve mangrove ecosystems. Nature Ecology and Evolution 3: 870–72, https://doi.org/10.1038/s41559-019-0861-y.

Lewis, M. 2003. Moneyball: The Art of Winning an Unfair Game (New York: W.W. Norton and Company).

Liggins, G.C. and Howie, R.N. 1972. A controlled trial of antepartum glucocorticoid treatment for prevention of the respiratory distress syndrome in premature infants. Pediatrics 50: 515–25, https://doi.org/10.1542/peds.50.4.515.

Lind, J. 1753. A Treatise of the Scurvy. In Three Parts. Containing an Inquiry into the Nature, Causes, and Cure, of that Disease; Together with A Critical and Chronological View of what has been Published on the Subject, First edition (Edinburgh: A. Kincaid and A. Donaldson).

Milne, I. 2012. Who was James Lind, and what exactly did he achieve? The James Lind Library Bulletin: Commentaries on the history of treatment evaluation 105: 503–08, https://www.jameslindlibrary.org/articles/who-was-james-lind-and-what-exactly-did-he-achieve/.

Moore, D.A. and Healy, P.J. 2008. The trouble with overconfidence. Psychological Review 115: 502–17, https://doi.org/10.1037/0033-295X.115.2.502.

Oreskes, N. and Conway, E.M. 2010. Merchants of Doubt: How a Handful of Scientists Obscured the Truth on Issues from Tobacco Smoke to Global Warming (New York: Bloomsbury Press).

Owens, S. 2015. Knowledge, Policy, and Expertise: The UK Royal Commission on Environmental Pollution 1970–2011 (Oxford: Oxford University Press), https://doi.org/10.1093/acprof:oso/9780198294658.001.0001.

Pe’er, G., Bonn, A., Bruelheide, H., et al. 2020. Action needed for the EU Common Agricultural Policy to address sustainability challenges. People and Nature 2: 305–16, https://doi.org/10.1002/pan3.10080.

Pinker, S. 2021. Rationality: What it is, Why it Seems Scarce, Why it Matters (London: Allen Lane).

Pullin, A.S. and Knight, T.M. 2001. Effectiveness in conservation practice: Pointers from medicine and public health. Conservation Biology 15: 50–54, https://doi.org/10.1111/j.1523-1739.2001.99499.x.

Pullin, A.S., Knight, T.M., Stone, D.A., et al. 2004. Do conservation managers use scientific evidence to support their decision making? Biological Conservation 119: 245–52, https://doi.org/10.1016/j.biocon.2003.11.007.

Rosanna, G. 2003. Al-Haytham the man of experience. First steps in the science of vision. Journal of the International Society for the History of Islamic Medicine 2: 53–55, https://www.ishim.net/ishimj/4/10.pdf.

Sackett, D.L., Rosenberg, W.M. and Gray, J.A.M. 1996. Evidence based medicine: What it is and what it isn’t. BMJ 312: 71–72, https://doi.org/10.1136/bmj.312.7023.71.

Salafsky, N., Boshoven, J., Burivalova, Z., et al. 2019. Defining and using evidence in conservation practice. Conservation Science and Practice 1: e27, https://doi.org/10.1111/csp2.27.

Santangeli, A. 2013. Assessing the Effectiveness of Different Approaches to Species Conservation (PhD thesis, Helsinki, Finland: University of Helsinki), https://helda.helsinki.fi/bitstream/handle/10138/38675/santangeli_dissertation.pdf?sequence=1&isAllowed=y.

Santangeli, A. and Sutherland, W.J. 2017. The financial return from measuring impact. Conservation Letters 10: 354–60, https://doi.org/10.1111/conl.12284.

Santika, T., Sherman, J., Voigt, M., et al. 2022. Effectiveness of 20 years of conservation investments in protecting orangutans. Current Biology 32: 1754–63, https://doi.org/10.1016/j.cub.2022.02.051.

Satterfield, J.M., Spring, B., Brownson, R.C., et al. 2009. Toward a transdisciplinary model of evidence-based practice. The Milbank Quarterly 87: 368–90, https://doi.org/10.1111/j.1468-0009.2009.00561.x.

Spock, B. 1946. The Common Sense Book of Baby and Child Care (New York: Duell, Sloan, and Pearce).

Spooner, F., Smith, R.K. and Sutherland, W.J. 2015. Trends, biases and effectiveness in reported conservation interventions. Conservation Evidence 12: 2–7, https://conservationevidencejournal.com/reference/pdf/5494.

Sutherland, W.J. 2000. The Conservation Handbook: Research, Management and Policy. (Oxford: Blackwell Scientific), https://doi.org/10.1002/9780470999356.

Sutherland, W.J., Pullin, A.S., Dolman, P.M., et al. 2004. The need for evidence-based conservation. Trends in Ecology and Evolution 19: 305–08, https://doi.org/10.1016/j.tree.2004.03.018.

Sutherland, W.J. and Wordley, C.F.R. 2017. Evidence complacency hampers conservation. Nature Ecology and Evolution 1: 1215–16, https://doi.org/10.1038/s41559-017-0244-1.

Syed, M. 2015. Black Box Thinking (New York: Penguin Random House).

Tetlock, P. 2009. Expert Political Judgement: How Good Is It? How Can We Know? (Princeton, New Jersey: Princeton University Press), https://doi.org/10.1515/9781400830312.

Walsh, J.C., Dicks, L.V., Raymond, C.M., et al. 2019. A typology of barriers and enablers of scientific evidence use in conservation practice. Journal of Environmental Management 250: 109481, https://doi.org/10.1016/j.jenvman.2019.109481.

Walsh, J.C., Dicks, L.V. and Sutherland, W.J. 2015. The effect of scientific evidence on conservation practitioners’ management decisions. Conservation Biology 29: 88–98, https://doi.org/10.1111/cobi.12370.

Wauchope, H.S., Jones, J.P.G., Geldmann, J., et al. 2022. Protected areas have a mixed impact on waterbirds, but management helps. Nature 605: 103–07, https://doi.org/10.1038/s41586-022-04617-0.

Young, K.D. and Van Aarde, R.J. 2011. Science and elephant management decisions in South Africa. Biological Conservation 144: 876–85, https://doi.org/10.1016/j.biocon.2010.11.023.