11. Creating a Culture of Evidence Use

© 2022 Chapter Authors, CC BY-NC 4.0 https://doi.org/10.11647/OBP.0321.11

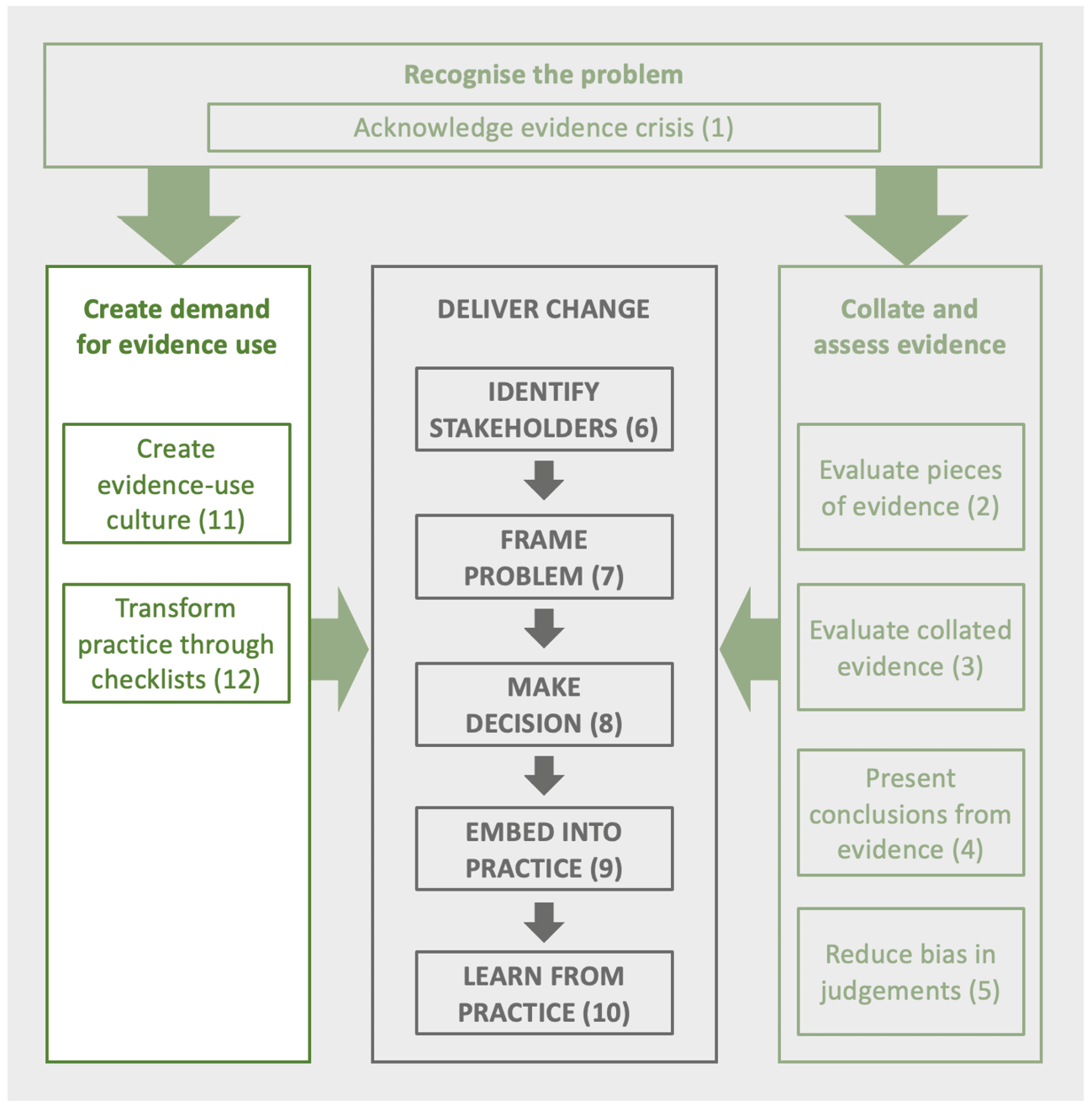

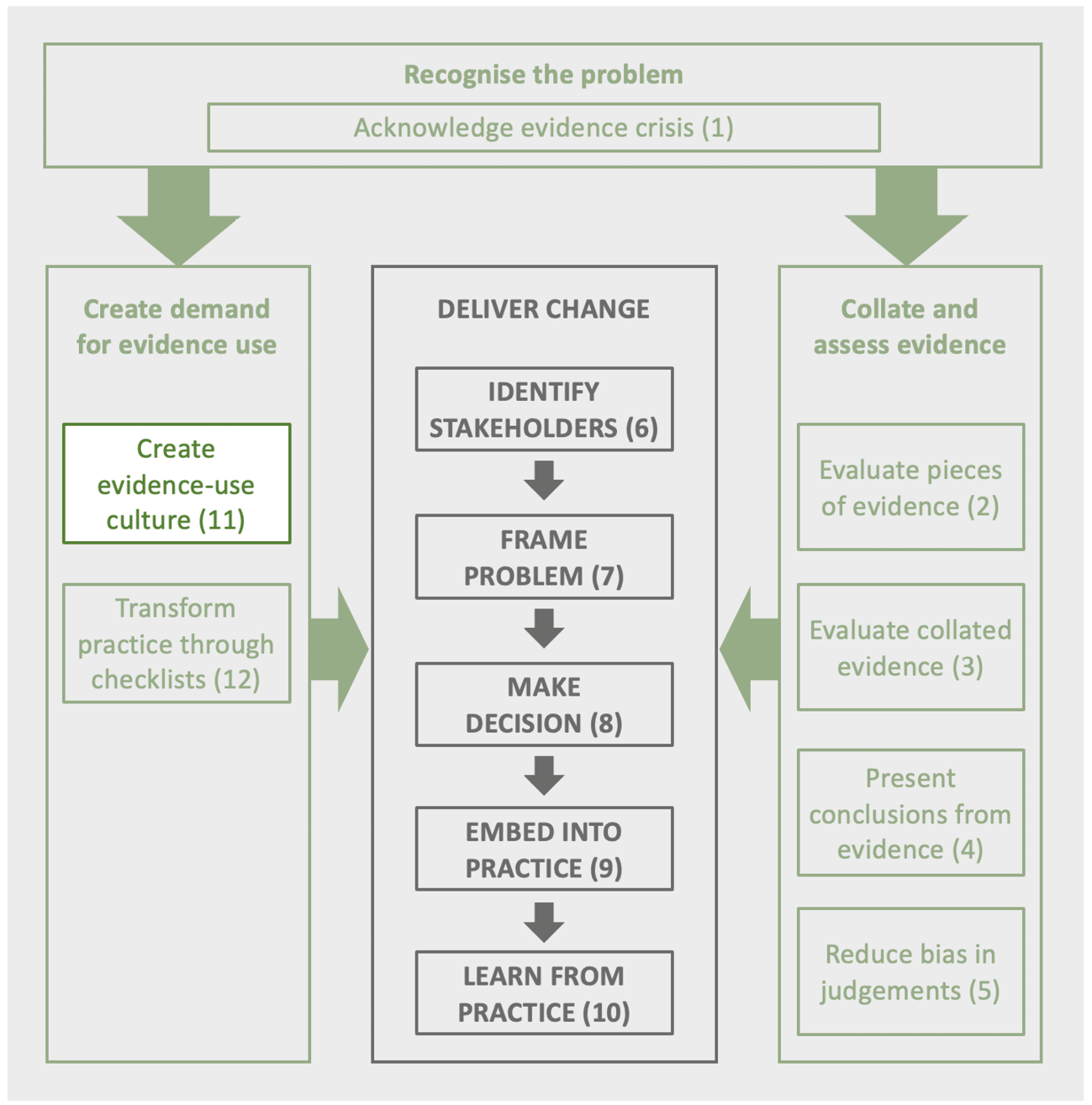

Evidence is a prerequisite for effective conservation decisions, yet its use is not ubiquitous. This can lead to wasted resources and inadequate conservation decisions. Creating a culture of evidence use within the conservation and environmental management communities is key to transforming conservation. There is a range of ways in which organisations can change so that evidence use becomes routinely adopted as part of institutional processes. Auditing existing use is a useful first stage, followed by creating an evidence-use plan. A wide range of possible actions can encourage evidence use and ensure that the resources needed are available. Seven case studies show how very different organisations, from funders to businesses to conservation organisations, have reworked their processes so that evidence has become fundamental to their effective practice.

1 Bat Conservation International, 1012 14th Street NW, Suite 905, Washington, D.C. 20005, USA

2 Department of Ecology and Evolutionary Biology, University of California Santa Cruz, 130 McAllister Way, Santa Cruz, CA 95060, USA

3 Canadian Centre for Evidence-Based Conservation, Department of Biology and Institute of Environmental and Interdisciplinary Science, Carleton University, Ottawa, ON, Canada, K1S 5B6

4 Birdlife International, The David Attenborough Building, Pembroke Street, Cambridge, UK

5 Woodland Trust, Kempton Way, Grantham, Lincolnshire, UK

6 US Fish and Wildlife Service, National Wildlife Refuge System, 2800 Cottage Way, Suite W-2606, Sacramento, CA, USA 95825

7 Beeflow, E Los Angeles Ave., Somis CA, USA 93066

8 The Whitley Fund for Nature, 110 Princedale Road London, UK

9 Ingleby Farms and Forests, Slotsgade 1A, 4600 Køge, Denmark

10 Small Mammal Conservation Organization (SMACON), Plot 8 Christina Imudia Street, Benin City, Nigeria

11 NatureScot, Silvan House, 231 Corstorphine Road, Edinburgh, UK

12 Kent Wildlife Trust, Tyland Barn, Chatham Road, Sandling, Maidstone, UK; current address: Butterfly Conservation, Manor Yard, East Lulworth, Wareham, Dorset, UK

13 Pacific Rim Conservation, PO Box 61827, Honolulu, HI, USA 96839

11.1 Why Changing Cultures is Critical

Much of this book is devoted to detailing methods that could transform practice and increase effectiveness. However, creating a culture of evidence use in conservation practice has to overcome existing barriers to shift behaviour, priorities, and norms among potential evidence users and overcome the common disconnect between conservation scientists and the practitioners on the ground.

Shifting the field of conservation practice towards a de facto norm of evidence use requires an incisive and frank analysis of how conservation organisations operate, and how they can adapt and improve. It requires turning our analytic attention inward, being willing to admit failure, and ultimately adopting a mindset that results in adaptive practice. Changing attitudes and work practices can be challenging; most of us lack the necessary training required to help foster such changes.

As discussed in Chapter 1, shifting to evidence use as the norm has been successful in other fields, including medicine (Shortell et al., 2001), and is becoming increasingly common in other areas such as education (Slavin, 2002). Medical practice has standard processes for converting evidence into practice and has embedded evidence use into educational programmes (Ilic and Maloney, 2014). That revolution happened relatively quickly, within a few decades (Guyatt et al., 1992). In medical practice, the cost of failure is human lives. The cost of failure in conservation practice is similarly high, with biodiversity and sometimes human lives at stake (Díaz et al., 2006), although the repercussions of conservation failure are not as immediate and are therefore often discounted. However, we can learn from fields that have adopted evidence use as the cultural norm as well as from professional fields that have achieved cultural change.

Evidence use can be complicated, so there is a need for increasing conservation practitioners’ familiarity and skills with evidence use as well as providing further training. In this chapter, we explore some of the challenges and offer ideas for how organisations can lead the conservation movement by shifting norms toward evidence use. All the authors of this chapter work for organisations that have increasingly adopted evidence-based processes.

11.2 Auditing Current Evidence Use

When organisations commit to using evidence, a useful starting point is to consider how evidence is currently used by the organisation as well as how it is used and valued by their peer network. Organisations can start with a self-assessment by asking questions about how often evidence is considered in decision making, what training is currently provided or available to staff, and how often tests of actions are carried out. This self-assessment can happen as part of strategic planning, priority setting, annual goal-setting, or as a stand-alone initiative. Several resources exist to help organisations get started with self-assessments and auditing their evidence use, including this book and the checklists in Chapter 12. Other online resources, such as the Conservation Standards (www.conservationstandards.org), offer a range of resources for developing new practices or training staff.

An initial audit of current evidence use may uncover areas where evidence use is already underway, even if not explicitly recognised. For example, Bat Conservation International was already publishing scientific papers testing actions to reduce threats to bats, but this was not recognised as part of their commitment to evidence use until they incorporated it as part of their contract with Conservation Evidence (www.conservationevidence.com) to become ‘Evidence Champions’ (see Section 11.4). Formalising this evidence creation as part of their contract as an Evidence Champion provided new recognition within the organisation of the value of staff time generating scientific products, and helped showcase how these products serve to aid conservation practice rather than simply as academic outputs.

An initial audit should ideally identify areas to improve or create new practices toward achieving routine evidence use. There is no single formula for success since organisations will vary in their structure and existing practices. The first step is to recognise the limits of current practices and identify ways in which new practices can become organisational habits. Studies of successful habit formation (Clear, 2018; Wood, 2019) can provide useful insights. For example, Clear (2018) makes a case for breaking big goals into smaller steps. Organisations could thus not only set strategic goals around evidence use but also create specific incremental tasks. Even small changes and modest accomplishments build success and lay a foundation for organisational habit formation for evidence use, as discussed further in Section 11.4 on Creating Expectations and Opportunities for Evidence Use.

An initial audit of evidence use should end with: 1) an understanding of the current practices of evidence use within an organisation, 2) some strategic goals toward changing practices, and 3) some specific targets for adopting new practices, with plans for how to achieve such targets. If the audit reveals that the organisation has ample room for improvement (e.g. a low score on Checklist 11.1 below), it may be advisable to start with just one area of the organisation or try a pilot programme to build success and apply adaptive learning.

Checklist 11.1 can serve as a starting point for auditing current practice. It can also be used for periodic assessments to measure change over time or to compare different branches within an organisation, and can easily be adapted to organisational structure and priorities.

In addition to a general audit of current evidence use by an organisation, each project or area of work could be audited to ensure staff are following the best available evidence or guidance and, if not, that they provide justification or reasoning for their decisions. An example from the medical field is the practice of regular audits by the National Health Service England (https://www.england.nhs.uk/clinaudit). If a doctor offers healthcare advice that differs from what is recommended in the National Institute for Health and Care Excellence guidelines (www.nice.org.uk), then the doctor must justify their decision. These guidelines are not mandatory; however, their use is incentivised in part by the pressure of the audit to ensure reasonable justification for deviating from them.

Could a similar audit process be set up in conservation? Internal audits by organisations are valuable, but they may be inconsistent among organisations or may not be sufficiently self-critical. External audits that are part of project evaluations or reporting to funders, stakeholders, or collaborators may provide useful visibility and accountability. These external audits could include encouraging conservationists to explain how they reached their decision and what evidence they used. The Evidence-to-Decision tool (https://www.evidence2decisiontool.com/) could be adapted or used for this kind of audit (Christie et al., 2022). Many grant applications now require metrics of success, which could be used as a starting point for what evidence could be collected on a particular programme (Chapter 9). Similar audits could also take place on businesses/developers/consultants in their work to avoid, minimise, and offset biodiversity impacts, to ensure they are also based on the best available evidence.

11.3 Creating an Evidence-Use Plan

Once an organisation has the results of its initial audit of evidence use, it can begin to craft a plan for integrating evidence into its conservation practice. This will depend on how the group is organised. Is it a small team or part of a large organisation? Has leadership ensured that using evidence will be fundamental to the organisation’s business (top-down), or is this an effort where individuals and small teams are working to integrate evidence on a project-by-project basis (bottom-up)? It is worth taking the time to think through the desired achievements and how they will be delivered. At the scale of organisational change, it will be important to develop a plan that works within the existing structure. Does it first need leadership buy-in, or is leadership directing the change? Will developing pilot projects to demonstrate the benefits be important as a first step, or is it possible to implement it across all projects at the same time (Keller and Schaninger, 2019)?

For example, a Conservation Measures Partnership (CMP) team is working on a project to provide guidance for the adoption of Conservation Standards by organisations (CMP, 2021). Their approach involves breaking down the problem and identifying strategies and outcomes within the organisation. Successfully introducing new methods or practices for adoption by an organisation may require a plan outlining how the change will be implemented, including a timeline. Organisations and teams can then initiate changes by starting with high-priority decisions and evaluating the level of evidence needed (Sutherland et al., 2021). A decision process for prioritising time and effort is important as these practices become norms.

Box 11.1 lays out questions that can be used while developing the organisational plan or for individual projects. Not every project will create evidence or use the same level of evidence. At the start of a project, an assessment can be made to determine if evidence use is necessary for the project and the time and resources that can be dedicated to that part of the project. This can help to focus again on the highest priority evidence needs.

11.4 Creating Expectations and Opportunities for Evidence Use

Creating expectations of evidence use needs to happen within and among organisations to drive the cultural sea-change toward new norms. Organisations need ways to publicly share their values, commitments, and approaches, but also ways to create internal processes and set expectations within their organisational culture. Changing organisational practices is akin to building new (and better) habits. The science of habit formation suggests that the level of ‘friction’ associated with a habit can be a strong determinant of whether it gets adopted (Wood, 2019). Behavioural change revolves around three elements: a cue, a routine, and a reward. Frictionless habits are those where the cues are obvious and attractive, and adoption happens with ease (Clear, 2018). These ideas can be used to help organisations find ways to make practices around evidence use as ‘frictionless’ as possible so that evidence use becomes routine and ultimately habitual.

Creating an expectation of evidence use within organisations requires participation by all staff. Senior leaders must lead by setting expectations for using evidence as desirable and routine. Without leadership engagement there may be less incentive, and thus slower adoption, for middle management or junior staff to allocate time and attention to practices that incorporate evidence use. To set the expectations, supervisors and leaders should set strategic priorities but also create structures and incentives for staff time spent on activities related to evidence use. Leaders also need to provide training for all staff so the practice of evidence use is clearly defined and staff can meet expectations. Creating expectations without accompanying support to implement changes is unlikely to succeed.

Ideally, staff across all levels in an organisation know how to use evidence in decision making and value its use. However, this may take time and investment to achieve. Organisational leaders may need to help direct staff to use available tools, such as the Evidence-to-Decision tool (Section 9.10.3; Christie et al., 2022) or the Conservation Standards (CMP, 2020), to identify what evidence is needed for different activities and decisions. All staff can contribute to setting expectations by identifying processes and workflows where evidence is identified, gathered, stored, and made available to others in the organisation. Expectations are reinforced among staff when evidence is presented or mentioned during briefings or other types of information exchange within organisations (e.g. if junior staff are briefing senior staff on a programme). An indicator that can be used to determine if an evidence practice is routine is to ask this question: Does your manager routinely ask about the underlying evidence for proposed projects? If this is a standard question during project briefings and annual reviews, it can help set expectations and determine if evidence practice is becoming routine. Likewise, external reviews and exchanges between organisations can help organisations innovate and adapt. Checklists (Chapter 12) are useful tools for adopting new practices that can be embedded at different stages of workflows for reinforcing best practices.

Time often seems to be the most limited commodity in an organisation. Creating specific, regular, and recurring times for staff to complete tasks related to evidence use can therefore be effective. This can take the form of recurring meetings where staff review a checklist together, or of action items with deadlines assigned at different stages of a workflow so that evidence is evaluated before decisions are made. Here, the concept of habit-stacking may be helpful. With habit-stacking, an existing habit is used as the cue for a new habit to make it more obvious and easier to adopt. Organisations may want to identify the triggers for using new tools such as evidence-use checklists or the Evidence-to-Decision tool by attaching them to routine tasks, such as at the start of recurring check-in meetings, before a proposal is submitted, or during the review of reports.

When organisations conduct goal-setting or performance evaluations for staff, review forms and meetings can include questions about work activities involving evidence use. Incorporating questions about evidence practice in such reviews can reinforce organisational values and make it explicit to staff that time spent on evidence use is valued and reviewed. It also provides an opportunity for feedback and for managers to learn whether strategies to change practices are working. Feedback may also reveal whether sufficient time and resources have been allocated to achieve desired goals. Likewise, organisational practices and values around evidence use should be shared during the onboarding process for new employees. Specific practices and terminology may not be clear to new employees, and offering training materials and sharing expectations at the outset of employment may accelerate adoption and organisational progress. Expectations for evidence use can also be made explicit in the hiring process. Evidence use can be mentioned in job descriptions, and questions on evidence use can be incorporated into job interviews and the selection of candidates. How to structure this will vary depending on the type of position being offered and its core duties, but stating expectations of evidence use is one way to signal an organisation’s commitment and values.

Committing publicly to evidence use is an important part of creating professional norms of evidence use (Sutherland and Wordley, 2017). The Evidence Champion programme run by Conservation Evidence recognises organisations that have adopted practices to help deliver evidence-based approaches. To earn recognition as an Evidence Champion, organisations must commit to at least one of a range of practices related to evidence use in conservation. Evidence Champions can then use the badge or logo on websites or branded materials to signal their commitment. Public statements of commitment to evidence use through logos, mission statements, or other branded outreach make explicit that an organisation takes evidence seriously.

Beyond formal processes and institutions involved in building capacity for evidence use or engaging in evidence application, there are also informal institutions that can be effective. Of particular note is the growing movement focused on developing ‘communities of practice’. Communities of practice tend to be bottom-up informal collaboratives where a wide range of individuals with common interests support each other through sharing of practical experience (Lave and Wenger, 1991). A community of practice is already evident in the environmental evidence realm (see Cooke et al., 2017), which is particularly promising and bodes well for the continued development of the capacity for conducting evidence synthesis and incorporating it into decision-making processes. The Miradi Share platform, available from the Conservation Measures Partnership, is an example of a community of practice that helps develop evidence-use approaches in conservation (https://www.miradishare.org).

11.5 Providing the Capacity to Deliver Evidence Use

There are now numerous tools available to help organisations develop better practices around evidence use, but creating capacity will require investments in staff time and expertise to establish and reinforce such practices. Creating or identifying staff positions with core responsibilities and duties centred around evidence use is ideal. For example, the United States government requires major departments and bureaus to have a named position, Evaluation Officer, with responsibility for an evidence-building plan (or ‘learning agenda’). Not all organisations will be able to create a new position specifically dedicated to evidence use, so finding ways to build evidence responsibility into existing roles is critical. As described above, there are many ways to create and reinforce both expectations and opportunities by adapting existing workflows within organisations.

Whether a new position is needed may depend on existing expertise within an organisation and the ability to shift workloads to accommodate new duties. Ultimately, the capacity needed within organisations includes staff members who have the time and expertise to design projects to use and create evidence, evaluate progress and identify success or failure, lead training, collaborate with and mentor co-workers, and answer questions. Organisations can use the Strategic Evidence Assessment Framework (Sutherland et al., 2021) to allocate available capacity most effectively. As evidence use becomes the adopted norm, hopefully, there will be a rise in organisations creating posts with responsibility for evidence use. Positions with evidence in the title have recently become much more common (see Chapter 13 for a list).

Ultimately, evidence use requires evidence to be available. Therefore, addressing the lack of availability of evidence is an important part of providing the capacity to deliver evidence use. Positions with a primary focus to create or contribute evidence (e.g. scientists) are essential to effective conservation delivery. Routine checks of what evidence is available and the quality of that evidence (using the Conservation Evidence database or other tools) and having plans for how to disseminate or share results of research that tests the efficacy of actions is key. Coordination among staff who are trained in scientific study design, with staff responsible for carrying out conservation projects, can help assure that conservation practice is adaptive and effective.

11.6 Training, Capacity Building, and Certification

Building a community of practice around evidence use requires creating and using training and capacity-building opportunities. The Conservation Measures Partnership has resources available for training and offers training courses (www.conservationstandards.org). The IUCN and others offer various online training modules, such as those available through the Conservation Training website (https://www.conservationtraining.org). The Evidence in Conservation Teaching Initiative (Downey et al., 2021; https://www.britishecologicalsociety.org/applied-ecology-resources/about-aer/additional-resources/evidence-in-conservation-teaching) provides a range of open-access teaching material about evidence use, including lectures in nine different languages. There is a need for more resources and opportunities for training at all levels, including integration with university programmes as well as professional training courses. Foundations of Success (https://fosonline.org) offers courses on using the Conservation Standards that can be tailored to individual organisations. Training courses could also lead to certification, which reinforces visibility and recognition of the importance of evidence use. The Conservation Evidence programme offers its Evidence Champion certification, which is based on commitments developed for each organisation.

On a larger scale, the US Cooperative Extension Service shows that it is possible to create a model for long-term and substantial government investment in transferring knowledge from scientific research to practitioners (Franz and Townson, 2008). This community-based education entails providing effort and resources toward educating, training, and motivating practitioners (Warner and Christenson, 2019).

There are myriad opportunities for providing training and certifications through professional or academic societies to bolster the conservation evidence movement. Like many themes in this chapter, the structure and nature of such training may depend on the target audiences and organisations involved, given the diversity of organisations involved in conservation practice around the world. Knowledge sharing is critical, as global approaches to conservation are developed, and historic and current inequities to access knowledge must also be acknowledged. Too often, certifications and training are costly and therefore inaccessible to many. Creating open-access tools and training (and making them accessible in multiple languages) are necessary to advance equitable and just approaches to conservation.

11.7 Learning from Failure

Failure in conservation can be defined as ‘a lack of success in meeting stated outcomes and objectives’ (Catalano et al., 2019). In practice, failure and success are usually not binary categories; it is often more useful to place projects along a spectrum ranging from 100% failure to 100% success.

In business, learning from failure is recognised as part of innovating toward success. However, most people avoid the discomfort of acknowledging and discussing failure, which then limits opportunities for learning and improving. This point is illustrated by quotes from aerospace scientist and former Indian president A. P. J. Abdul Kalam, ‘if you fail, never give up because F.A.I.L. means “First Attempt In Learning”’, and Bill Gates, ‘it’s fine to celebrate success but more important to heed the lessons of failure’. Chambers et al. (2022) express the concern that embracing, or even celebrating, failure may mean unsuccessful projects can be reframed as successes; they emphasise the need to ensure the lessons lead to change.

In some cases, the failure that occurs is a result of experimentally testing actions, which is considered ‘intelligent failure’ because it advances the knowledge frontier (Edmondson, 2011). Some other failures may be due to events that could not have reasonably been predicted, such as the outbreak of a novel disease. This section focuses on the remaining majority of failures where an action seemed as if it should have worked but did not, like pilots crashing fully functioning planes by confusing levers, as described in Chapter 1, or the various examples of failures to engage with communities described in Chapter 6.

How failure is best framed may vary with the project stage. During planning — where the aim is typically to identify factors that could negatively influence the project’s results, assess their potential impact and develop appropriate mitigation strategies — potential failures are considered as ‘risks’. During implementation, the terms ‘challenges’ or ‘issues’ might be more appropriate to ensure the gathering of information that can inform practice. After project completion, where the aim is to document learning to inform future practice, the term ‘lessons learnt’ may be most effective.

11.7.1 Why does failure occur?

Conditions are ripe for intelligent failure in conservation. Firstly, conservation action takes place in natural systems with high complexity and uncertainty. Secondly, many conservation projects involve actions whose effectiveness is uncertain. Thirdly, many conservation projects depend on a range of individuals and so may fail due to challenges in execution or ineffective processes.

When a plane crashes, it is obvious that failure has occurred and it is often relatively straightforward to determine how it happened and why the problem developed. For many conservation efforts, establishing these elements is considerably more challenging, because perceptions differ between individuals and because ecological systems are complex and variable. Some may consider a project a success because it met all its short-term objectives, such as delivering training courses, while others might consider the same project a failure because it did not contribute to a wider long-term goal, for example because the species continued to decline at the same rate. Similarly, some stakeholders may consider a project an overall success because only a single component of a wider initiative failed to meet its aims, while others may perceive the entire initiative as a failure because the component that failed was the only one they considered important.

A group of conservation practitioners developed a taxonomy of ‘root causes’ of failure in conservation projects, as listed in Table 11.1 (Dickson et al., 2022). This taxonomy can be used to help identify root causes of failure, summarise projects in a standard manner, collate experiences on common issues, and help address causes.

In practice, individuals may have different perceptions of the root causes. For example, one might believe the main reason a project failed was the unexpected bad weather that caused a key piece of equipment to fail, another might attribute failure to inadequate planning in selecting weather-proof equipment, while another might state the whole project was overdependent on technology.

Table 11.1 A taxonomy of reasons for project failure. (Source: from Dickson et al., 2022)

11.7.2 Learning from failure

Because failure is generally associated with blame and is typically emotionally unpleasant, active leadership is needed to create an organisational culture that values failure as a learning opportunity, to devise processes that provide safe ways to discuss failure without blame (Edmondson, 2011), and to encourage innovation and experimentation.

The key step is to consider four questions. What was expected to happen? What actually happened? What went well and why? What can be improved and how? The learning process will often centre around gathering and analysing individuals’ perceptions of failure, both in relation to whether something is considered a failure and in relation to how and why it occurred. These perceptions may differ considerably between individuals and stakeholder groups depending on their role, knowledge, attitudes or underlying motivations. Understanding divergent views is often key to diagnosing the problem.

Improvement then occurs as a result of reflecting on the cause of the problem, considering what could have been done differently, and then considering lessons from other projects and studies. Testing different possible options (see Chapter 10), such as restructuring how some teams operate or providing additional training, would help create more of an evidence base to improve delivery.

This process can be adopted when a project is underway by reflecting on the outcome or by examining individual cases of failure. The process may be an informal consideration or a formal process of review. Table 11.2 below outlines a range of methods that can be applied at various stages of the project cycle.

Workflow practices, such as Scrum or Agile workflows, are built on ideas of transparency, inspection, and adaptation (Schwaber and Sutherland, 2020). Work is conducted in defined sprints, with each sprint including a retrospective that allows the team to reflect and individuals to evaluate what did and did not work, and what measures could be taken to improve in the next sprint. Even if not all work in conservation fits the sprint workflow, routine assessment and transparent discussion of failure and incremental progress are valuable in creating an organisational culture that adopts evidence use (Catalano et al., 2021). The Objectives and Key Results (OKR) framework can also be used as a way of tracking success in this middle ground by collaboratively setting goals and identifying key measurable results to track (Panchadsaram, 2020).

At all stages, trying to understand potential underlying motivations, agendas and relationships between those involved is particularly useful. Specific categorisations of failure (or success) are sometimes adopted to defend a specific viewpoint or further a particular agenda. In such cases, emphasising supportive criteria, aligning with particular allies, or incorporating a story into a wider narrative is given greater weight than an objective assessment of whether, how, and why the failure occurred.

Seeking evidence for the how and the why, using a common framework for describing problems, testing options, collating lessons, developing a process for learning, and sharing experiences will help ensure that efforts to learn from failure in conservation help drive similar improvements to those seen in other areas of practice.

Table 11.2 Summary of learning from failure methods.

| Method | Description | Useful for | Links/guidance |
|---|---|---|---|
| Pause & Reflect Session | Exercise where participants are asked to review the following statements: | Analysing progress on a continual basis, for example, as a means of periodically reviewing previously identified risks, and potential new ones, and deciding on whether any adaptations need to be made. | USAID: Facilitating Pause & Reflect (https://usaidlearninglab.org/resources/facilitating-pause-reflect) |
| After-Action Review | Addressing similar questions to Pause & Reflect but carried out after a project or wider initiative has finished, or in response to a specific incident. | Assessing and analysing root reasons/root causes of failure concerning efforts that have finished, or after a specific incident/case of failure has occurred. | USAID: After Action Review Factsheet (https://usaidlearninglab.org/resources/after-action-review-aar-guidance) |
| Risk Assessment | Prior assessment of potential risks and underlying assumptions. | Assessing, before work begins, assumptions, potential risks/causes of failure and developing potential mitigation strategies. The resulting information then forms a useful basis for ongoing review and assessment of progress and likelihood of failure and success. | Ecosystem risk assessment science for ecosystem restoration (https://www.iucn.org/news/ecosystem-management/202112/using-ecosystem-risk-assessment-science-ecosystem-restoration) |
| Pre-mortem | A similar exercise to a risk assessment where the emphasis is specifically on identifying potential reasons/root causes of failure. | Useful supplement to a wider risk assessment. Gathers input in an interactive, participatory way that may work better with some audiences than a more formal risk assessment. | Performing a project pre-mortem |

11.8 Case Studies: Organisations who Shifted to Embrace Evidence Use

A wide range of organisations, from NGOs to governments, have shifted their working so that evidence use is increasingly embedded in practice. The following case studies show the diversity of routes adopted by eight, very different, organisations to achieve a culture of evidence use.

11.8.1 Bat Conservation International

Bat Conservation International (BCI) is a non-profit conservation organisation dedicated to ending bat extinctions worldwide. Founded 40 years ago, BCI has a long-standing history of working for bat conservation globally. In 2020, BCI launched a new 5-year strategic plan that focuses on programmes that deliver conservation outcomes (www.batcon.org). The strategic plan identified a portfolio of work focused on four core missions: implementing endangered species interventions, protecting and restoring habitats, conducting priority research to develop scalable solutions, and inspiring through experience (BCI, 2020).

The strategic planning process was a multi-year effort that recalibrated BCI’s approach to focus more explicitly on strategies and activities that could achieve measurable outcomes (Salafsky et al., 2002). To start the strategic planning process, the organisation invested in a training course led by Nick Salafsky at Foundations of Success to teach staff the Open Standards for the Practice of Conservation (https://conservationstandards.org). This helped identify projects and activities with theories of change that could result in desired outcomes, as described in Chapter 7 (CMP, 2020). The course also helped BCI develop internal processes for evaluating projects, which included attention to assessing evidence. Perhaps more important than the course content itself, the course participation allowed staff to engage in shared learning and explore together whether existing work was evidence-based or not. Participating staff formed cross-functional teams that mixed across organisational hierarchy and departments, which helped reset organisational culture toward a more collaborative and growth-mindset community of practice.

A challenge for bat conservation practice is a paucity of evidence to support actions (Frick et al., 2020; Berthinussen et al., 2021). In the first edition of the synopsis of conservation evidence for bats published in 2014, there were only 78 actions identified and most of those had no evidence or unknown effectiveness (Berthinussen et al., 2014). In the latest edition of the synopsis (Berthinussen et al., 2021), there are now 200 actions identified. Yet, of those 200 actions, 60% (n = 119/200) have no evidence and another 22% (n = 44/200) are ranked with unknown effectiveness due to a limited number of studies (in most cases, there is a single study; www.conservationevidence.com). In sum, 81% of currently identified actions for bat conservation have no evidence or unknown effectiveness based on the evaluation standards set by Conservation Evidence. This lack of evidence limits the toolbox for implementing evidence-based strategies and indicates the need for integration of research to test strategies and report on efficacy.
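As a quick check of the arithmetic behind these figures, the short sketch below recomputes the shares from the counts given in the text (119 and 44 out of 200 actions). The variable and function names are illustrative only. The unrounded values are 59.5%, 22.0%, and 81.5%, which the text reports as 60%, 22%, and 81%.

```python
# Counts reported in the text for the latest bat synopsis
# (Berthinussen et al., 2021). Names are illustrative assumptions.
total_actions = 200
no_evidence = 119
unknown_effectiveness = 44


def percent(count: int, total: int) -> float:
    """Return count as a percentage of total."""
    return 100 * count / total


share_no_evidence = percent(no_evidence, total_actions)
share_unknown = percent(unknown_effectiveness, total_actions)
share_combined = percent(no_evidence + unknown_effectiveness, total_actions)

print(f"No evidence: {share_no_evidence:.1f}%")
print(f"Unknown effectiveness: {share_unknown:.1f}%")
print(f"No evidence or unknown effectiveness: {share_combined:.1f}%")
```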

One of the ways that BCI has responded to the need to create evidence is to commit to publishing results in scientific outlets on research testing actions, which is formally part of their Evidence Champion agreement with Conservation Evidence. In addition, BCI actively supports collaboration between its science and conservation departments and seeks opportunities for cross-functional teams and engagement. The commitment to do both conservation science and practice has proven successful. In the past five years, the number of staff with PhDs increased from 2 to 11. Organisational leaders also socialise the value of scientific products to advance the mission, and set expectations that work should be conducted in ways that can lead to scientific products, so that results are shared to advance conservation practice broadly. The agreement with Conservation Evidence to become Evidence Champions served to formalise a cultural shift that was already taking place within the organisation. In 2022, the organisation created a new Director of Conservation Evidence position and hired someone to fill it.

The effort to integrate scientific practice into delivering conservation takes consistent attention and diligence. While growth at BCI has resulted in increased staff and number of projects, it also creates challenges to maintain consistency in processes. Even before the COVID-19 pandemic, BCI functioned as a distributed organisation with remotely located staff. Collaboration and team-based work happen almost entirely in a virtual space. Creating opportunities and time for cross-functional processes can be difficult as team sizes increase, especially in virtual environments. In reality, there are some gaps between organisational aspirations toward standardised evaluation of evidence use for projects and how all projects are actually developed. Some projects happen opportunistically or organically and, because most staff carry heavy workloads, sometimes due diligence toward standardised evaluations of evidence is skipped or overlooked. The key to continued success seems to be consistent leadership, to value and socialise the process and the need for evidence use, and to reflect those values in work management strategies. Just as adaptive management is itself an iterative learning process, incorporating evidence use into organisational culture is an iterative and adaptive cycle.

11.8.2 Fisheries and Oceans Canada

Fisheries and Oceans Canada (DFO) is a federal government agency in Canada responsible for the protection and management of aquatic ecosystems and aquatic biodiversity to maintain ecosystem services (see https://www.dfo-mpo.gc.ca/index-eng.html). DFO is regarded as a ‘science-based’ organisation and has its own science unit to support management and decision-making. At present, there is no standard approach to evidence use within the Canadian federal government aside from general statements in Ministerial Mandate Letters about how evidence should inform and guide decisions. DFO has one of the most long-standing and formalised approaches to evidence use via a science advice process called the Canadian Science Advisory Secretariat (CSAS), which was founded in 1996/97. CSAS is the mechanism by which DFO provides peer-reviewed science advice used by DFO and made available to the public. Efforts focus on ensuring that CSAS outputs yield advice that is credible, relevant and legitimate and therefore provides the best possible advice to the Minister (who holds the ultimate decision-making authority), managers, rightsholders, stakeholders, and the public through peer review that is evidence-based, objective, impartial and respectful (CSAS, 2011). Evidence of various forms is synthesised and vetted by internal and external experts (spanning knowledge generators to evidence users from relevant sectors including NGOs, industry, rightsholders, etc.). There is no standard means of synthesis but it can range from a narrative review to a full systematic review with meta-analysis, often supplemented with expert advice.

Revisions to the fisheries protection provisions (largely about fish habitat) of the Fisheries Act, and its ongoing implementation, provide a good example of how CSAS is effective in making the consideration of evidence part of decision making. Early efforts included the development of a science framework (Rice et al., 2015) and, after a change in government and refinements to the Act, there began a series of CSAS exercises focused on different topics needed to inform its implementation. One particular topic explored in CSAS was the effectiveness of different off-setting strategies for fish habitat creation/restoration for substrate spawning fish. A full systematic review was commissioned that revealed that the evidence base was large but the evidence was generally of poor quality (Taylor et al., 2019). A second review was conducted that relaxed the criteria for inclusion (i.e. including studies that lacked replication or comparators) to assess the lower-quality evidence (Rytwinski et al., 2019). The CSAS was convened and at the workshop both evidence syntheses were discussed alongside expert input from habitat managers and scientists. Given that decisions will be made about habitat restoration with or without evidence, it was apparent that any information that nudged the practitioner into being able to make a ‘better’ decision would be beneficial. This approach highlighted the reality that evidence quality will vary and different synthesis methods can yield different outcomes, but, with an appropriate understanding of biases and limitations, all evidence has the potential to enable better decisions (CSAS, 2020).

The CSAS process is not perfect, especially as it relates to decisions for stock-specific fisheries management, which lack the expediency and transparency desired (see Archibald et al., 2021). However, for files that are less time sensitive, the process seems to be useful for equipping decision makers, managers, and practitioners with science-based management advice. Social science research showed that DFO managers placed the highest credibility on products generated by the CSAS process, showing that evidence is both valued and used (Young et al., 2016). Young et al. (2016) found that the CSAS process was regarded as a validator of knowledge for government employees given its emphasis on the critical evaluation and synthesis of evidence, thereby yielding institutionally-endorsed knowledge. CSAS has embedded a culture of evidence synthesis and use within DFO and has also led to improvements in how science is conducted to ensure that it is done in a manner that contributes meaningfully to the evidence base through generating high quality science that can be used in evidence synthesis (CSAS, 2020). As the Canadian government moves towards understanding the evidence synthesis and use ecosystem in federal agencies, it is likely that more formal processes, such as CSAS, will be adopted by other agencies with science-based portfolios.

11.8.3 Ingleby Farms

Ingleby Farms owns 101,000 hectares of farmland and forest across nine countries and specialises in the production of high-quality food through sustainable agriculture and environmental improvement by farming with nature, not against it. It is a Conservation Evidence Champion and, as part of this commitment, is continuously reviewing how to integrate evidence use into actions and decisions.

Ingleby uses monitoring, especially bird surveys (conducted by local ornithologists) and earthworm surveys (total count in 20 x 20 cm cube), to broadly determine overall ecosystem health and detect positive and negative changes. This is used to identify areas of concern and opportunities, and forms the basis for assessing the success of measures.

For each farm, Ingleby has identified features of significance for designation as Privately Protected Areas (PPAs). Priorities are identified through the Significant Species database (Table 11.3), which classifies species according to global priorities alongside their occurrence on the farms. The other categories of features of significance are cultural (historic or important sites for local traditions, such as communal grazing) or recreational, such as fishing or picnicking. Globally, Ingleby has 2,769 hectares of formally protected land, all with management plans.

Table 11.3 Identifying significant species within Ingleby Farms using IUCN Red List, National lists and the status on farms.

| Conservation status | Resident/breeding | Visitor/migrant/possibly breeding | Occasionally seen |
|---|---|---|---|
| Critical | Top priority | High priority | Medium priority |
| Endangered | High priority | High priority | Low priority |
| Nationally critical | High priority | Medium priority | Low priority |
| Vulnerable | Medium priority | Low priority | Monitor |
| Threatened | Low priority | Low priority | Monitor |
| Near threatened | Low priority | Monitor | Monitor |
| Gradual decline | Monitor | Monitor | Monitor |
| Rare | Monitor | Monitor | Monitor |
| Special concern | Monitor | Monitor | Monitor |
| Least concern | Monitor | Monitor | Monitor |
| No special status | Monitor | Monitor | Monitor |
| Not listed | Monitor | Monitor | Monitor |
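The matrix in Table 11.3 can be read as a simple lookup from conservation status and on-farm occurrence to a priority level. The sketch below transcribes the table into a Python dictionary purely for illustration; the names `OCCURRENCE`, `PRIORITY`, and `species_priority` are assumptions and do not describe how Ingleby's Significant Species database is actually implemented.

```python
# Priority matrix transcribed from Table 11.3. The data layout and all names
# here are illustrative assumptions, not Ingleby's actual database design.
OCCURRENCE = (
    "resident/breeding",
    "visitor/migrant/possibly breeding",
    "occasionally seen",
)

PRIORITY = {
    "critical": ("Top priority", "High priority", "Medium priority"),
    "endangered": ("High priority", "High priority", "Low priority"),
    "nationally critical": ("High priority", "Medium priority", "Low priority"),
    "vulnerable": ("Medium priority", "Low priority", "Monitor"),
    "threatened": ("Low priority", "Low priority", "Monitor"),
    "near threatened": ("Low priority", "Monitor", "Monitor"),
}

# Statuses that the table assigns "Monitor" in every occurrence column.
for status in ("gradual decline", "rare", "special concern",
               "least concern", "no special status", "not listed"):
    PRIORITY[status] = ("Monitor", "Monitor", "Monitor")


def species_priority(status: str, occurrence: str) -> str:
    """Look up the priority for a status/occurrence pair.

    Raises KeyError or ValueError for values not present in Table 11.3,
    so that a misspelt status is not silently treated as "Monitor".
    """
    row = PRIORITY[status.strip().lower()]
    column = OCCURRENCE.index(occurrence.strip().lower())
    return row[column]


print(species_priority("Critical", "Resident/breeding"))
print(species_priority("Vulnerable", "Occasionally seen"))
```

Raising an error for unrecognised values, rather than defaulting to "Monitor", is a design choice made here so that data-entry mistakes surface early.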

Ingleby has used the Evidence Assessment Hierarchy (Sutherland et al., 2021) to identify an appropriate and realistic evidence strategy. This strategy is to assess the evidence for actions when faced with a problem or when updating farm management plans. With the time available, the plan is that, unless the decision was obvious or trivial, issues will be assessed by checking likely overall effectiveness (e.g. the effectiveness criteria of Conservation Evidence). If that appears contradictory to plans, or the issue is more critical, then this action will be considered in greater depth.

A few subjects require detailed assessment of evidence due to serious problems (such as the fly, Enallodiplosis discordis, affecting the highly important tropical dry forest in Peru) or global responsibilities for species, such as the golden sun moth (Victoria), Peruvian plantcutter (Peru) or rufous flycatcher (Peru). In these cases, Ingleby works with the farm managers to consider the challenges (including those shown by monitoring) and options, and uses the Evidence-to-Decision tool (Section 9.10.3; Christie et al., 2022) to systematically examine the issue, evaluate evidence, and make an informed decision. The evidence is balanced with local experience, knowledge, and values to assist the making of a decision. Ingleby’s agreed strategy for using evidence and conducting tests on-farm comprises:

- Adopt effective actions, focusing on what is known to work from documented evidence or experience.

- Routinely test where the likely gain in knowledge exceeds the cost of testing. These will be small individual actions rather than complex projects, such as different crop varieties or different planting options.

- Look for occasional (ideally annually) opportunities for simple, but well-designed, tests to contribute to global knowledge.

- Increase experimental rigour where possible and appropriate (controls, replication, randomisation).

- Use annual bird and earthworm surveys on each farm to gauge overall progress and identify challenges.

Thus, when considering a problem, Ingleby identifies a range of options by consulting industry best practices, the available evidence (e.g. using the Conservation Evidence database), and what has worked in practice in the past. If there is uncertainty then experimental tests may be created. In Romania there was a decision to restore an area of meadow, but then debate as to whether to just leave it alone or spread meadow hay from an adjacent farm. This resulted in a simple split-plot experiment (Figure 11.1) currently underway. Another experiment recently created in New Zealand is to add bird perches in an attempt to increase natural tree regeneration.

Figure 11.1 Monitoring a test of adding hay (right) against a control (left) of no hay added. (Source: Tom McPherson, CC-BY-4.0)

Ingleby ensures the results from all trials and tests are made available to all within Ingleby, creating a database for collating and sharing the observed outcomes of all trials (production and conservation) on their intranet. Trials are added to the database as they commence to ensure all trial outcomes are documented, reducing the risk of documenting only the successes. Major projects are also communicated via Ingleby news and through social media.

Ingleby is also committed to supporting peer-reviewed environmental science and research, allowing interested parties to conduct unimpeded research on Ingleby’s farms and publish their findings, regardless of results; the research must be published open access.

11.8.4 Kent Wildlife Trust

Kent Wildlife Trust is based in the county of Kent, in South East England. The Trust manages around 80 nature reserves, and works to develop and deliver nature-based solutions to enable the restoration of biodiversity and bioabundance, and enhance the carbon sequestration potential and resilience of landscapes. It engages with a wide range of stakeholders, from politicians and business leaders to landowners and local communities, to undertake advocacy work, advise, or work in partnership to deliver conservation outcomes and reconnect people with nature.

The development of an evidence culture at Kent Wildlife Trust was catalysed in 2015 with the creation of a new role of Conservation Evidence Ecologist, tasked with creating, coordinating and delivering a programme of monitoring and evidence to support the Trust’s work. This was born out of a realisation that, while the organisation had always done monitoring, it had not always done so consistently, using standardised methods, or with methods appropriate to assessing target outcomes. Evidence did not inform adaptive management, interventions were used without consulting evidence for their effectiveness, and there was no culture of, or commitment to, testing effectiveness or publishing.

The new incumbent in the role of Conservation Evidence Ecologist attended a conference in 2017 at which Conservation Evidence was presenting, and recognised the significant opportunity provided by the summaries of evidence collated in its database and in becoming an Evidence Champion. The senior leadership of the Trust were persuaded of the benefits of pursuing a path towards becoming an Evidence Champion, though there was a challenge in resourcing the work needed, which delayed the ambition to progress. This was overcome when funding was secured from the National Lottery Heritage Fund as part of a project grant to resource the work required.

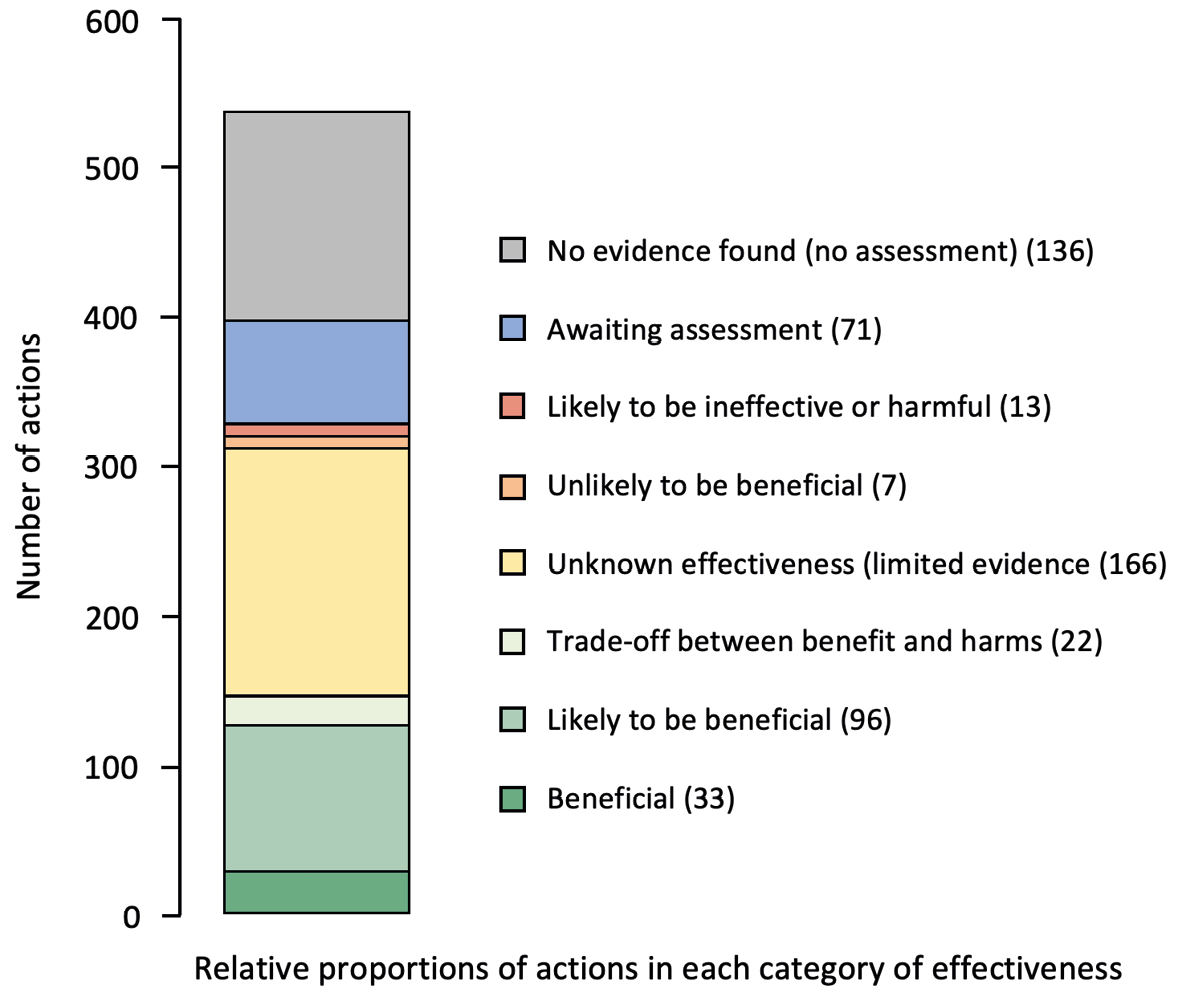

In support of becoming an Evidence Champion, a review was conducted in 2019 of 544 interventions that Kent Wildlife Trust carried out as part of its land management activities, for which Conservation Evidence had summarised the evidence. Each of these was assessed using the ‘effectiveness categories’ in the database to determine the likelihood of effectiveness based on the collated evidence. Close attention was paid to interventions that had been assessed as likely to be ineffective or harmful, unlikely to be beneficial, or as having a trade-off between benefits and harms. It was enlightening to discover how frequently interventions had been assessed in these categories (Figure 11.2). Interventions were then reviewed and a position on the continued use of each was determined, including a commitment to reviewing the use of ineffective interventions and regularly reviewing new evidence. The review of the 544 interventions took approximately one month for two staff members to carry out and was conducted by a Project Officer, supported by a volunteer, and overseen by the evidence lead.
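A review of this kind amounts to tallying interventions by effectiveness category and flagging those in the categories that warrant closer attention. The following is a minimal sketch under stated assumptions: the intervention records are invented placeholders (not Kent Wildlife Trust data), the category labels follow the wording used in this chapter, and the names `FLAGGED` and `summarise` are hypothetical.

```python
from collections import Counter

# Categories singled out in the text for close attention.
FLAGGED = {
    "Likely to be ineffective or harmful",
    "Unlikely to be beneficial",
    "Trade-off between benefits and harms",
}

# Hypothetical (intervention, category) records for illustration only.
interventions = [
    ("Intervention A", "Beneficial"),
    ("Intervention B", "Likely to be beneficial"),
    ("Intervention C", "Unknown effectiveness"),
    ("Intervention D", "Unlikely to be beneficial"),
    ("Intervention E", "Trade-off between benefits and harms"),
    ("Intervention F", "Likely to be ineffective or harmful"),
    ("Intervention G", "Likely to be beneficial"),
]


def summarise(records):
    """Count interventions per category and list those needing review."""
    counts = Counter(category for _, category in records)
    needs_review = [name for name, category in records if category in FLAGGED]
    return counts, needs_review


counts, needs_review = summarise(interventions)
for category, n in counts.most_common():
    print(f"{category}: {n}")
print("Review continued use of:", ", ".join(needs_review))
```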

Through a partnership agreement with Conservation Evidence, Kent Wildlife Trust has committed to periodically reviewing evidence for the interventions it uses, identifying opportunities to test the effectiveness of interventions and to publish results, contributing to the development of best practice in evidence use, and to producing an organisational conservation evidence strategy. At the time of writing, three tests of interventions are underway (bracken control, vegetated shingle restoration, and an experimental test of different wilding grazing regimes). Kent Wildlife Trust benefits from being able to publicly demonstrate an evidence-based approach with mechanisms to improve effectiveness that help to maximise project outcomes and lead the way in showing how organisations could adopt evidence-based practice. The Conservation Evidence function is now fully embedded and consists of a multidisciplinary team of ecologists, GIS specialists and data scientists overseen by a Conservation Evidence Manager, working to ensure that evidence informs adaptive management across all strands of the Trust’s work.

Figure 11.2 The number of interventions carried out by Kent Wildlife Trust classified by categories of effectiveness as then summarised by Conservation Evidence. (Source: Paul Tinsley-Marshall, CC-BY-4.0)

11.8.5 Pacific Rim Conservation

Pacific Rim Conservation (PRC) is a small US-based non-profit organisation whose mission is to maintain and restore native bird diversity, populations, and ecosystems in Hawaii and the Pacific Region. Pacific Rim Conservation was founded in 2006 out of a need for research-based management on native species, particularly birds, throughout Hawaii and the Pacific. Island species, particularly those in Hawaii, are some of the most imperilled on earth and, with so few individuals of some species, research was sorely needed to inform and deliver management actions. Pacific Rim Conservation works together with local communities, government agencies, and other conservation organisations to achieve their goals through direct conservation action. The organisation conducts research to understand avian biology, as well as changes and benefits to the ecosystem, in order to inform future conservation actions. It has published over 135 peer-reviewed papers on these outcomes.

For the first nine years of its existence, PRC operated as a small business before converting to a 501(c)(3) non-profit organisation. That same year, PRC undertook strategic planning to better define their goals and to set direct, measurable outcomes for assessing whether they were achieving them. The result was a roadmap for how to design and implement projects in a step-wise, evidence-based manner. At a high level, this process entails:

- Conducting prioritisation and structured decision making to select projects on the front end ensuring that the species and ecosystems most at risk are those benefiting from conservation actions.

- Once a project has been selected, establishing species and project-specific benchmarks that are tied to timelines.

- Post-action (at each project phase) and post-mortem (if wildlife mortality is experienced during a project) debrief through a combination of meetings and anonymous surveys of both staff and immediate stakeholders.

- Upon completion of a project or field season, immediate post-season dissemination of information to stakeholders and land managers through direct presentations and annual project reports.

- Publication of the final results in the peer-reviewed literature.

Due to the organisation’s small size (11 or fewer employees) and existing structure, they do not employ a single person responsible for evidence use, but have instead created a culture of evidence that is incorporated into every project and which ensures each staff member is accountable for collecting evidence. What follows is an example of how each of these steps has been put into practice with the organisation’s largest project.

The current flagship initiative of PRC is the No Net Loss initiative (https://www.islandarks.org), which has the goal of creating new habitat for seabirds on high islands in the US Tropical Pacific, matching acre for acre the habitat currently being lost to sea level rise on low-lying atolls. They accomplish this by creating ‘mainland islands’ through predator exclusion fencing on high islands, and then translocating or attracting birds into the area to create new breeding colonies safe from both predators and projected sea level rise. The first step of this project was to select species that were most vulnerable to sea level rise through a prioritisation exercise, and then to select the appropriate source and recipient colonies to move them to. To prioritise species, PRC compiled a list of all the breeding seabirds in the region, scored each species for 10 criteria that reflected their extinction risk and vulnerability to climate change and invasive predators, and then summed the scores of all criteria to obtain an overall score and ranked the species in terms of overall conservation need. The top 10 candidates then had species profiles compiled to determine if they were suitable for translocation.

A similar process was used to select source and recipient colonies. Factors that were considered in assessing suitability as a source or restoration site included elevation, presence of predators, ability to exclude or eradicate predators, and several other anthropogenic risk factors. Pacific Rim Conservation also considered a colony to be a suitable source if it was: 1) at risk of inundation from sea level rise and storm surge such that the long-term persistence of the colony is in jeopardy; 2) subject to predation by invasive species that has not been effectively managed and would be difficult to manage; and 3) large enough to sustain removal of the desired number of individuals for several years. They considered a site to be suitable for restoration if: 1) it was not at risk of inundation; 2) predators and other anthropogenic threats were absent, had been eliminated or effectively managed, or could be effectively managed on a long term scale; 3) there were no serious logistical constraints that could limit the ability to safely move birds to them in a timely manner, and sufficient facilities to carry out the action or the facilities could be reasonably constructed without damaging the integrity of the site. The result of these three prioritisation activities was a science-based repeatable approach that maximised potential success and minimised risk from an outcomes, safety and financial standpoint. The results of this exercise were then published in the peer-reviewed literature so that other organisations could use the exercise to potentially prioritise other actions (Young and VanderWerf, 2022).
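The species prioritisation step described above (score each species against a set of criteria, sum the scores, rank, and take the top candidates) can be sketched as follows. This is an illustration only: the species names, criteria names, and scores are invented, only three criteria are shown rather than the 10 used in the actual exercise, and the assumption that a higher score means greater conservation need is ours. See Young and VanderWerf (2022) for the method as applied.

```python
# Hypothetical scores per species and criterion. The real exercise used
# 10 criteria; three invented ones are shown to keep the sketch short.
# Assumption: a higher score indicates greater conservation need.
scores = {
    "Species A": {"extinction_risk": 3, "climate_vulnerability": 2, "predator_vulnerability": 1},
    "Species B": {"extinction_risk": 1, "climate_vulnerability": 1, "predator_vulnerability": 1},
    "Species C": {"extinction_risk": 3, "climate_vulnerability": 3, "predator_vulnerability": 2},
    "Species D": {"extinction_risk": 2, "climate_vulnerability": 2, "predator_vulnerability": 1},
}


def rank_species(species_scores, top_n=10):
    """Sum criterion scores per species and return the top_n, highest first."""
    totals = {
        species: sum(criteria.values())
        for species, criteria in species_scores.items()
    }
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


for species, total in rank_species(scores, top_n=2):
    print(species, total)
```

An unweighted sum treats every criterion as equally important; whether criteria should be weighted is a separate decision that this sketch does not address.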

Once the project parameters (species and sites) had been selected, project-specific benchmarks were set along with associated timelines. Since this project involved both restoration of habitat (i.e. building predator-free reserves) and the translocation of species, there were multiple categories used. The overall project benchmarks were:

- Habitat outcomes:

  - The number of sites established

  - The total number of acres of predator-free habitat on high islands that have been created

  - The number of native plants out-planted

  - The number or acreage of invasive plants removed

- Translocation outcomes:

  - % of chicks that survive capture and transfer to release site

  - Body size of fledged chicks

  - % of chicks that fledge from the new colony

  - % of translocated chicks that return to the release site (by age four)

  - Number of birds fledged from other colonies that visit the translocation site

  - Number of birds fledged from other sites that recruit to the new colony

  - Reproductive performance of birds breeding in the new colony

- The number of people reached:

The project has been ongoing since 2015, and has involved four species across three sites. To date, all habitat-specific goals have been met, and two of the four species have met their final long-term outcomes, with the other two having met the stage-specific outcomes (i.e. not enough time has passed to achieve the long-term objectives). While there have been stage- and time-specific failures (usually mortality events in the case of translocations, or predator breaches in the case of the enclosures), none of these were considered overall project failures.

What has been key to using evidence-based conservation within PRC is not only prioritising projects and setting stage-specific benchmarks, but conducting regular evaluations with staff and project partners. This is typically done after each major project stage (e.g. completion of a predator fence, or completion of a translocation event for the year), and then at the end of each season. Feedback is gathered on the process as well as the metrics being used, and adjustments are made, if needed. Considerable effort is put into keeping stakeholders informed by presenting data at the end of each season both in written format (e.g. annual reports) and visually through presentations. Efforts are made to present both the challenges and successes in order to ensure a holistic picture is being put forward. Finally, at the end of each project, at least one, and often multiple, peer-reviewed publications are produced on the project to ensure the greater conservation community may benefit.

The system employed by PRC is one that started top down during a critical inflexion point in the organisation and has been incorporated into every staff position and project plan so much so that it is not treated as a stand-alone organisational unit. As such, it continues to have seamless integration into the existing work and culture of the organisation, ensuring its long-term persistence.

11.8.6 US Fish and Wildlife Service National Wildlife Refuge System

The US Fish and Wildlife Service’s National Wildlife Refuge System (NWRS) manages a network of 560 protected areas across the United States. Founded in 1903, NWRS has a long history of conservation of species and ecosystems. In 2010 the NWRS invested in an Inventory and Monitoring (I&M) programme designed to address information needs and evaluate the effectiveness of conservation strategies implemented on NWRS lands.

The NWRS is making progress toward supporting a culture of evidence use. Currently, the NWRS is engaged in a process to focus monitoring data collection on specific species or ecosystems at each refuge, develop SMART (specific, measurable, achievable, relevant, and time-bound) objectives for each species or ecosystem, and identify which indicators will be used to evaluate those objectives. In the NWRS Pacific Southwest Region, the I&M team used the Conservation Standards (CMP, 2020) to set up the structure for connecting conservation targets, conservation strategies, and indicators. This has been designed as an iterative process in which refuge staff, in most cases, use professional judgement to set conservation targets and evaluation criteria. As data for the indicators are collected, or existing data are analysed, these initial target values will be re-evaluated and updated. Developing objectives, having data with which to evaluate them, and being able to update targets when moving from professional judgement to an evidence base together provide a flexible structure that can be scaled to the staff time and funding available. A subset of refuges identified strategies, and the outcomes of those strategies, that can be used to increase learning and to better understand how the organisation’s conservation strategies affect its conservation targets.

In addition to developing methods and processes for integrating evidence into decisions, there is a need for easy methods for capturing the data, results, and lessons learned. As part of the process of developing evidence, the I&M team has been developing tools, data standards, and visualisations of the data used to set targets, as well as the lessons learned. The change from only publishing information in summary reports to capturing information in standardised data structures has increased the I&M team’s ability to look across all the refuge conservation targets to identify common challenges, what evidence is available for different strategies, and where to focus efforts.
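To make the idea of a standardised data structure concrete, the sketch below shows one way a conservation target, a strategy, and an indicator whose target value is revised over time might be recorded. It is illustrative only and does not describe the I&M team's actual tools or data standards. All class names, field names, and example values are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date

# Hypothetical labels for how a target value was arrived at.
PROFESSIONAL_JUDGEMENT = "professional judgement"
EVIDENCE = "evidence"


@dataclass
class TargetValue:
    """One target value for an indicator, with the basis on which it was set."""
    value: float
    basis: str
    set_on: date
    note: str = ""


@dataclass
class Indicator:
    """An indicator that keeps every target value ever set, oldest first."""
    name: str
    unit: str
    history: list = field(default_factory=list)

    @property
    def current(self):
        # The most recently set target value.
        return self.history[-1]

    def revise(self, value, basis, set_on, note=""):
        # Append rather than overwrite, so earlier targets stay visible.
        if basis not in (PROFESSIONAL_JUDGEMENT, EVIDENCE):
            raise ValueError(f"unknown basis: {basis!r}")
        self.history.append(TargetValue(value, basis, set_on, note))


@dataclass
class Objective:
    """Links a conservation target and a strategy to the indicators used to evaluate them."""
    conservation_target: str
    strategy: str
    statement: str
    indicators: list = field(default_factory=list)


# Invented example values, for illustration only.
cover = Indicator(name="Native plant cover", unit="%")
cover.revise(60.0, PROFESSIONAL_JUDGEMENT, date(2021, 1, 15), "initial staff estimate")
cover.revise(55.0, EVIDENCE, date(2023, 1, 15), "revised after monitoring data were analysed")

objective = Objective(
    conservation_target="Example wetland habitat",
    strategy="Example invasive plant removal",
    statement="Reach the target native plant cover within five years",
    indicators=[cover],
)

print(cover.current.value, cover.current.basis)
```

Keeping the full history of target values, rather than only the latest one, records the shift from professional judgement to an evidence base for each indicator.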

Trying to change the culture in a large organisation responsible for managing a wide variety of ecosystems, with varying levels of funding and staffing, takes time. Nevertheless, the NWRS is starting to see a change in how some individual staff approach projects to increase their learning and ensure that others can build on the knowledge they have gained. These changes depend on additional support for staff to learn how to use and develop evidence, and on leadership supporting and asking for evidence. Now that the change is visible among early adopters, the NWRS is taking the next steps to scale it up into an organisation-wide approach.

11.8.7 Whitley Fund for Nature

The Whitley Fund for Nature (WFN) is a fundraising and grant-giving nature conservation charity offering recognition, training, and grants to support the work of proven grassroots conservation leaders across the Global South. Since its founding in 1993 as a UK charity, WFN has channelled £20 million to 200 conservation leaders in 80 countries, primarily across Asia, Africa, and South America.

WFN’s grants programmes offer laddered support to those spearheading local solutions to the global biodiversity and climate crises. Through its flagship Whitley Awards Ceremony and Continuation Funding programme, WFN supports work rooted in science and community involvement that benefits wildlife, landscapes, and people. Award recipients gain funding, skills, and increased visibility, resulting in a higher international profile, media attention, additional investment opportunities, and improved access to decision makers.

WFN supports a culture of evidence use and continues to fine-tune its application process to take evidence into account. WFN started working with Conservation Evidence several years ago because, as a funder, the organisation recognises the importance of sharing knowledge, learning from failure, and not trying to reinvent the wheel. As a grant-maker, WFN also wants to encourage openness among conservationists (including the sharing of both positive and negative results), avoid unnecessary duplication, and support the scale-up of effective environmental solutions. WFN therefore also recognises the value of adopting innovative approaches and sharing these results.

The use of Conservation Evidence was integrated into WFN’s application screening process, at first by staff checking the What Works in Conservation literature themselves and then, for efficiency, by building it into the application guidelines. WFN currently recommends that all applicants check the Conservation Evidence website for examples of conservation interventions and their effectiveness as captured in the literature. This helps to inform project design and monitoring.

For shortlisted applications to the Whitley Awards and Continuation Funding programme, WFN requires candidates to provide evidence of success by asking Principal Investigators what makes them confident the proposed activities will succeed and be effective in achieving the desired project outcomes. Applicants must provide evidence that their proposed methods will be effective, drawing on their experience to date, peer-reviewed publications, grey literature (non-published data), or relevant examples from other projects.

In this way, WFN is mindful that most published evidence originates from the Global North and is found behind paywalls, acting as a barrier to many, and thereby recognises the need to take valuable unpublished evidence and experience into account. This type of evidence is more accessible to NGOs and practitioners in the Global South, where conservation is underfunded yet much needed, and where effective work is being delivered by committed teams.

WFN asks shortlisted applicants to complete a form (see Table 11.4), focusing on their objectives and assumptions rather than providing a long list of every planned intervention or activity. This exercise aims to encourage applicants to scrutinise their project design, and base actions on evidence to increase the chance of successful outcomes. The main aspiration of this approach is to ensure that applicants have gone through a rigorous process as part of their decision making, even if the formal scientific evidence is sparse.

The WFN grant application process is, of course, an iterative learning process. It continues to evolve, as WFN takes on board feedback from grantees and practitioners on the ground.

Table 11.4 The Whitley Fund for Nature evidence summary table used for shortlisted projects.

Intervention: Removing ghost fishing gear

| Evidence source (a) | Type of evidence (b) | Direction and strength of results (c) | Relevance (Low/Medium/High) (d) | Evidence quality (L/M/H) (e) | Overall confidence (L/M/H) (f) |
| --- | --- | --- | --- | --- | --- |
| Personal experience | Observation | 150 kg discarded nets removed from a small area of benthic habitat. Tube sponges quickly established. | High | Medium | High |
| Peer-reviewed publication: Melli et al. (2017) assessing marine debris in a Site of Community Importance in the north-western Adriatic Sea | Observation | Litter-fauna interactions were high, with most of the debris (65.7%) entangling or covering benthic organisms. | High | Medium | Medium |
| Synthesis: no evidence | none | | High | High | Medium |

a E.g. Peer-reviewed publication, expert opinion, grey literature report, personal experience

b E.g. synthesis, experimental, observational, anecdotal, theoretical/modelling.

c Was the result strongly positive, weakly positive, mixed, or no effect?

d How relevant is the evidence in terms of geography, taxa or habitat? Does the evidence relate directly to the intervention effectiveness?

e Depends on the type of evidence, but also sample size and experimental design.

f From a combination of the direction/strength of results, relevance and quality across all evidence types.
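For applicants or funders who keep such summaries electronically, the table could be held as a simple structured record. The sketch below is one possible representation, not a format that WFN prescribes. The class and field names are hypothetical, and only the first two rows of Table 11.4 are reproduced. The overall confidence is stored as judged by the applicant rather than calculated, because footnote f describes a judgement across all evidence types rather than a fixed formula.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Rating(Enum):
    """The Low/Medium/High scale used in the last three columns of the table."""
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"


@dataclass(frozen=True)
class EvidenceRow:
    """One row of the evidence summary table (field names are hypothetical)."""
    source: str
    evidence_type: str
    result: str
    relevance: Rating
    quality: Rating
    overall_confidence: Rating  # recorded as judged, not computed


@dataclass
class EvidenceSummary:
    intervention: str
    rows: list

    def confidence_counts(self):
        # Tally how many evidence sources fall under each confidence rating.
        return Counter(row.overall_confidence for row in self.rows)


summary = EvidenceSummary(
    intervention="Removing ghost fishing gear",
    rows=[
        EvidenceRow(
            source="Personal experience",
            evidence_type="Observation",
            result="150 kg discarded nets removed from a small area of "
                   "benthic habitat. Tube sponges quickly established.",
            relevance=Rating.HIGH,
            quality=Rating.MEDIUM,
            overall_confidence=Rating.HIGH,
        ),
        EvidenceRow(
            source="Peer-reviewed publication: Melli et al. (2017)",
            evidence_type="Observation",
            result="Litter-fauna interactions were high, with most of the "
                   "debris (65.7%) entangling or covering benthic organisms.",
            relevance=Rating.HIGH,
            quality=Rating.MEDIUM,
            overall_confidence=Rating.MEDIUM,
        ),
    ],
)

for rating, count in summary.confidence_counts().items():
    print(rating.value, count)
```

Using a fixed rating scale makes it harder to enter values outside Low, Medium, and High, which keeps summaries comparable across applications.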

Among WFN’s network of Whitley Award winners across the globe, several have become Evidence Champions, acting as ambassadors for Conservation Evidence and putting the approach of evidence use into practice. WFN’s vision is that, by taking evidence into account and using it to assess applications as a standard part of its review process, it will help practitioners improve the likelihood of success and the delivery of effective conservation outcomes that can be brought to scale. This mode of application assessment is already seen in the way many funders (including WFN) request a budget or Theory of Change. Including evidence is expected not only to aid the recovery of the natural world, but also to attract increased funding to the environment sector.

11.8.8 Woodland Trust

The Woodland Trust is a UK charitable organisation committed to tackling the climate and nature emergencies through trees and woods. Being ‘evidence-led’ is of critical importance for informing where and how to prioritise and deploy limited resources in a cost-effective way to achieve maximum impact. Evidence is key to understanding the challenges the natural world is facing, and where the organisation’s expertise, advocacy, and practical delivery can best be applied to make impactful and meaningful change in the short and long term. By harnessing the power of evidence to inform and underpin its interactions and communications with a wide range of audiences, from decision makers, politicians, scientists, woodland managers, and landowners to its own supporters, the Woodland Trust can act and speak with confidence and credibility.

The transformation to an effective culture of using evidence has required clarity on what being evidence-led means for the different functions of the organisation and how it can help the Trust target its limited resources for greatest value and impact. This transformation was given a real boost with the creation of a dedicated conservation team over 15 years ago, and in 2018 by greater focus and investment in a Conservation Evidence and Outcomes team. This team of scientists has a range of specific skills and experience and is able to lead the development of improved engagement with science and research through a growing evidence toolkit and enhanced communications across the organisation and externally, including webinars with external guests, quarterly newsletters, and regular site visits with the organisation’s delivery teams. There has also been further development of systems, processes, and training with this shift.

This has led to an improved understanding of why and how the power of evidence should be harnessed, with evidence now becoming embedded within all functions of the organisation and clearly visible in its organisational strategy. Utility and application of evidence are being enabled through the synthesis of evidence into review papers and briefings, through funding and commissioning research to fill key evidence gaps, through the development of evidence-based best practice guides for the creation and restoration of trees and woods, and through collecting and harnessing data to understand where and how action for change should be delivered. The Woodland Trust published the first ever State of the UK’s Native Woods and Trees report in April 2021 (Reid et al., 2021). This comprehensive review of the evidence continues to shape the Trust’s policy-influencing goals. It has improved the Trust’s reputation as a trusted leader with policy makers and landowners, identified evidence gaps, and significantly informed its own strategic direction, increasing the organisation’s ambitions for the protection and restoration of existing woods and trees. Woodland Trust evidence reviews, practical guides, reports, and citizen science datasets are all freely shared for others to use. For example, evidence reviews are published in Applied Ecology Resources, where the Woodland Trust is a silver member.

Meanwhile, the culture of, and commitment to, using evidence within the Woodland Trust continues to grow with new initiatives such as a monitoring, evaluation and learning framework, currently in development using the open conservation standards, and expert conservation training for practitioners and landowners. This training allows them to develop skills and capability in line with evidence-based guidance. Integrating evidence is the key to success. As Evidence Champions with the Conservation Evidence initiative, the Woodland Trust continues to build important collaborations and relationships with partners, to bring together and share knowledge, tools, and evidence to galvanise action, and to enhance the quality and longevity of conservation outcomes on the ground.

References

Archibald, D.W., McIver, R. and Rangeley, R. 2021. Untimely publications: Delayed Canadian fisheries science advice limits transparency of decision-making. Marine Policy 132: 104690, https://doi.org/10.1016/j.marpol.2021.104690.

Bat Conservation International (BCI). 2020. Our Mission to End Bat Extinctions Worldwide: Strategic Plan 2020–2025 (Austin, TX: Bat Conservation International), https://www.batcon.org/wp-content/uploads/2020/10/BCI_Strategic_Plan_2020_2025_web.pdf.

Berthinussen, A., Richardson, O.C. and Altringham, J.D. 2014. Bat Conservation: Global Evidence for the Effects of Interventions (Exeter, UK: Pelagic Publishing Ltd.).

Berthinussen, A., Richardson, O.C. and Altringham, J.D. 2021. Bat Conservation: Global Evidence for the Effects of Interventions (2021 Edition) (Cambridge: University of Cambridge), https://www.conservationevidence.com/synopsis/pdf/32.

Catalano, A.S., Jimmieson, N.L. and Knight, A.T. 2021. Building better teams by identifying conservation professionals willing to learn from failure. Biological Conservation 256: 109069, https://doi.org/10.1016/j.biocon.2021.109069.

Catalano, A.S., Lyons-White, J., Mills, M.M., et al. 2019. Learning from published project failures in conservation. Biological Conservation 238: 108223, https://doi.org/10.1016/j.biocon.2019.108223.

Chambers, J.M., Massarella, K. and Fletcher, R. 2022. The right to fail? Problematizing failure discourse in international conservation. World Development 150: 105723, https://doi.org/10.1016/j.worlddev.2021.105723.

Christie, A.P., Downey, H., Frick, W.F., et al. 2022. A practical conservation tool to combine diverse types of evidence for transparent evidence‐based decision‐making. Conservation Science and Practice 4: e579, https://doi.org/10.1111/csp2.579.