9. Creating Evidence-Based Policy and Practice

© 2022 Chapter Authors, CC BY-NC 4.0 https://doi.org/10.11647/OBP.0321.09

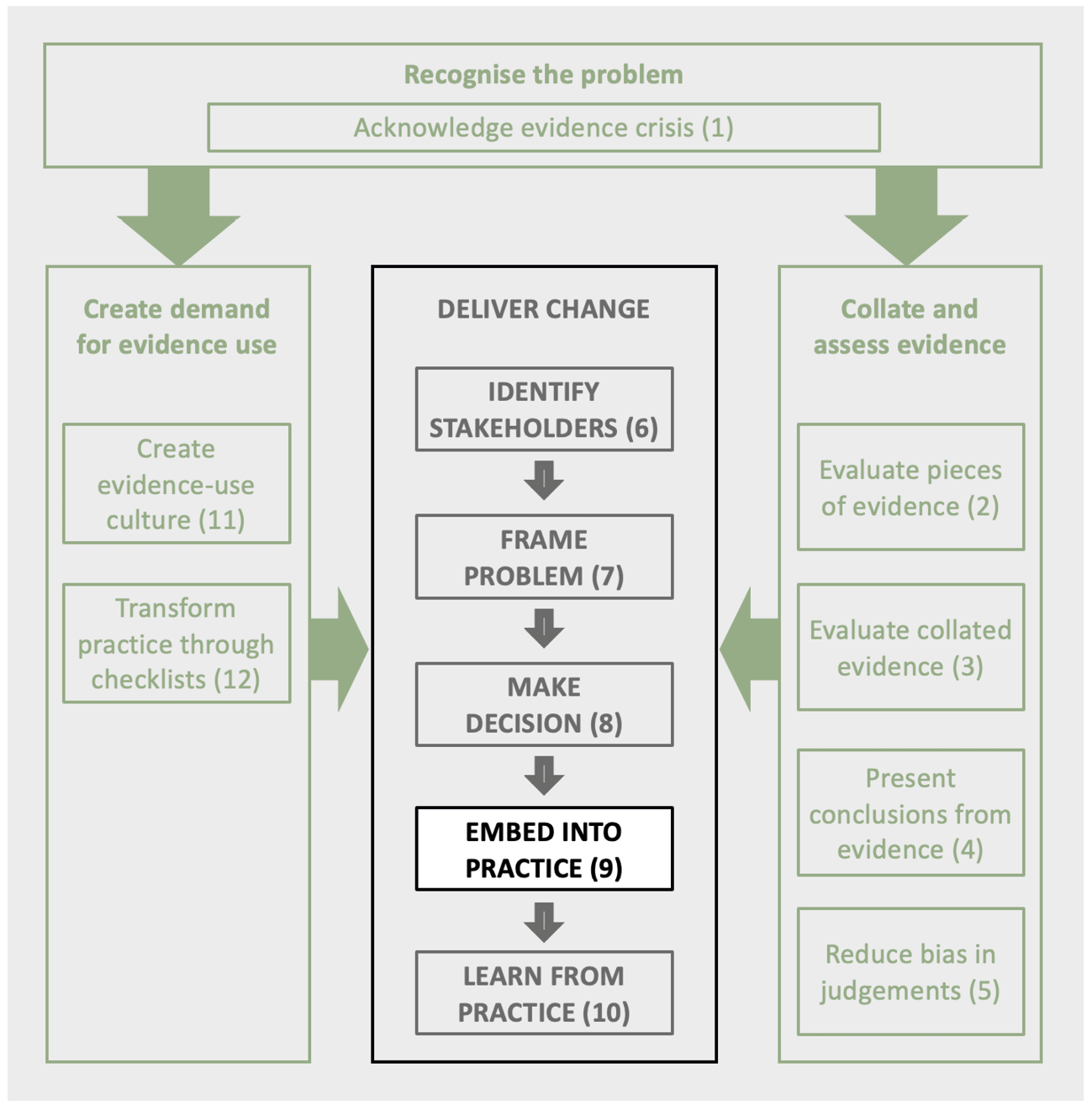

Transforming conservation depends on evidence being embedded within decision-making processes. This chapter presents general principles for embedding evidence into a wide range of approaches and processes. These include creating habitat or species action plans, guidance documents, funding applications, policy, business environmental strategies and management plans, as well as deciding what to fund, what to report or how to construct models. How well evidence is used in these processes can be evaluated using tools such as capability maturity models, learning agendas and evidence-use indices.

1 Woodland Trust, Kempton Way, Grantham, Lincolnshire, UK

2 Foundations of Success Europe, 7211 AA Eefde, The Netherlands

3 Houses of Parliament, Parliament Square, London SW1A 0PW, UK

4 Biosecurity Research Initiative at St Catharine’s College (BioRISC), St Catharine’s College, Cambridge, UK

5 School of Natural Sciences, Trinity College, Dublin, Ireland

6 University of Cambridge Institute for Sustainability Leadership, The Entopia Building, 1 Regent Street, Cambridge CB2 1GG, UK

7 Department of Zoology, University of Cambridge, The David Attenborough Building, Pembroke Street, Cambridge, UK

8 UN Environment World Conservation Monitoring Centre, 219 Huntingdon Rd, Cambridge, UK

9 Partner, Conservation Measures Partnership (CMP), Montreal, Canada

10 Conservation Science Group, Department of Zoology, University of Cambridge, The David Attenborough Building, Pembroke Street, Cambridge, UK

11 United States Fish and Wildlife Service International Affairs, 5275 Leesburg Pike, Falls Church, VA 22041, USA

12 NatureScot, Great Glen House, Leachkin Road, Inverness, UK

13 Endangered Landscapes Programme, Cambridge Conservation Initiative, The David Attenborough Building, Pembroke Street, Cambridge, UK

14 International Institute for Environment and Development, 235 High Holborn, London, UK

15 Science Media Centre, 183 Euston Road, London, UK

16 Forestry England, 620 Bristol Business Park, Coldharbour Lane, Bristol, UK

* The findings and conclusions in this chapter are those of the author and do not necessarily represent the views of the US Fish and Wildlife Service.

9.1 How Embedding Evidence Improves Processes

A common barrier to effective, evidence-based decision making is that many processes and systems do not appropriately integrate the available relevant evidence. Such processes include drafting policy recommendations, producing written guidance, writing management plans and devising organisational policies.

Adopting evidence-based processes has many benefits, including:

- More effective decisions and actions. Transparently reviewing the evidence enables an assessment of the likely efficacy of the proposal.

- Retention of institutional knowledge. When staff leave an organisation, information and institutional memory may also be lost. Embedding processes that capture the information that is used to guide decision making minimises the risk of knowledge erosion or loss.

- Self-reflection and self-challenge. Creating time to pause and reflect on approaches can help break the cycle of ‘doing things the way they have always been done’ and identify where new approaches might be more effective.

- Filling knowledge gaps. Identifying key gaps in the evidence base may encourage further research to fill them.

- Skills building. Including the consideration of evidence in decision-making processes will often provide staff with new skills. As staff build these skills, evidence-based processes will naturally become part of everyday thinking and help effect cultural change (Chapter 11).

- Improved consistency and accountability. This is often useful when decisions need to be justified, for example to funders. Consistent, transparent processes make decisions much easier to justify and provide a means of improving future decisions.

- Efficient actions and savings. By enabling more effective actions to be put in place, evidence use can reduce the resources required to reach given goals, thus saving money.

- Risk reduction. Fewer unsatisfactory outcomes, which might otherwise lead to bad publicity or reputational damage.

- Improved morale. Staff and volunteers can be motivated by having the knowledge that similar interventions have been successful in the past.

There are, however, major barriers faced by conservation practitioners and policy makers when using evidence. Walsh et al. (2019) highlighted the particular importance of organisational structure, decision-making processes and culture, together with practitioner attitudes and relationships between scientists and practitioners. Additional specific barriers include: assuming that guidelines and advice are based on science; poor databases or dysfunctional information management systems; limited access to relevant evidence; confusing decision-making processes; deficient or absent planning processes to ensure that evidence is used; and no process for publishing or documenting outcomes. These can all result in poor decision making, missed windows of opportunity through insufficient preparation, poor investments, and the loss of information and institutional memory when staff leave (Possingham et al., 2012; Wilson et al., 2009). An effective way of ensuring that evidence is at least considered in decision making is to embed evidence review within organisational and governance processes. Fortunately, many such processes exist, as outlined in this chapter. Adopting evidence in these processes can help deliver a shift towards organisational-level evidence-based practice and create a culture of evidence use (see Chapter 11).

Processes in practice

Processes that automatically embed evidence into decisions need to be simple and to operate smoothly so that they are affordable and can be sustained in the long term. Systems that add significant workload without obvious benefit are likely to reduce motivation for evidence-based practice. Of course, learning and adopting these processes will take some initial investment, but the long-term result will be more robust, reliable and effective end-products that are quicker and more efficient to update. This in turn will motivate staff and volunteers, and increase the confidence of funders and project partners.

9.2 General Principles for Embedding Evidence into Processes

9.2.1 When, what and how much evidence?

Any process that embeds evidence needs to answer some general questions.

When is evidence needed?

Not all decisions need to be evidence-checked (Keeney, 2004; Hemming et al., 2022; Sutherland et al., 2021). As explained in Section 8.2, it may not be worthwhile to seek evidence for minor interventions, where the evidence is well known, or where the outcome is obvious.

Evidence is worth checking further where the outcome is uncertain and the decision is important because of its consequences, the scale of investment or other sensitivities (e.g. public concern about erecting fences or increasing carnivore populations).

As discussed in Chapter 7, this can be thought about in terms of the assumptions or claims made when designing a project (e.g. in a Theory of Change approach). Some of these claims will be critical to the success of the project, while others may be inconsequential. Evidence checking should focus on claims that are critical for success and whose evidence base is uncertain.
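The focusing rule above can be expressed as a simple filter. The sketch below is illustrative only: the `Claim` type, its two yes/no flags and the example claims for a hypothetical large-herbivore reintroduction are assumptions, and in practice criticality and uncertainty would emerge from discussing the project's Theory of Change rather than from fixed booleans.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    """A hypothetical assumption made when designing a project."""
    text: str
    critical: bool   # would the project fail if this claim turned out to be wrong?
    uncertain: bool  # is the evidence base for this claim unclear?


def claims_needing_evidence(claims: list[Claim]) -> list[Claim]:
    """Return only the claims that are both critical and uncertain.

    Inconsequential claims, and critical claims whose evidence base is
    already well established, are left out of further evidence checking.
    """
    return [c for c in claims if c.critical and c.uncertain]


if __name__ == "__main__":
    # Illustrative claims for a hypothetical large-herbivore reintroduction.
    project = [
        Claim("Released animals will establish a breeding population", True, True),
        Claim("The release site is large enough for the founding herd", True, False),
        Claim("Visitor numbers to the site will rise", False, True),
    ]
    for claim in claims_needing_evidence(project):
        print(claim.text)
```

Run as written, only the first claim is printed: it is the one claim that is both critical to success and uncertain, so it is where evidence gathering effort would go.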

What evidence is needed?

As described in Chapter 2, the evidence base for a conservation decision may come from published literature (books or journals), manuals and reports (grey literature), previous personal experience, or be received through word of mouth from colleagues, peers, expert advisors, or local knowledge. Evidence may be on a mixture of subjects including the status of biodiversity, threats, stakeholder values, costs, and effectiveness of action. The two most important characteristics of each piece of evidence are its reliability and its relevance; both need consideration when assessing how much weight to put on a particular piece of evidence in the decision-making process (see Chapter 2). The audience will also determine the type and relevance of evidence; decision makers in environmental agencies may be interested in robust biological data, but such data may be meaningless to decision makers in, for example, ministries of finance or planning, who will want to understand issues such as numbers of jobs created, returns on investment or contributions to GDP. It is therefore critical to understand the political economy of decision making in order to understand the type of evidence that may help influence policy and practice (Bass et al., 2021).

How much evidence?

The level of detail of evidence needed for each decision will depend on the scale and importance of the decision, as well as on the reliability and relevance of the evidence. For example, a planned action to create nesting platforms at a lake to encourage breeding ospreys may require a few sentences to describe the relevant evidence, summarising previous studies on platform usage and breeding success. In comparison, a costly proposal to reintroduce a large-herbivore population to a new site is likely to warrant a detailed description of the status and population trends of the species, the suitability of the habitat, and the probability of success of the proposed translocation (including the source and number of animals, the method of translocation and the release approach). In such cases, it may be pertinent to include evidence about the context, importance, and feasibility of a proposed action. This could include information about acceptability, cost, logistical practicality, and the availability of necessary equipment and expertise.

How to present evidence

Box 9.1 outlines general principles for collating and presenting evidence, based on Downey et al. (2022). Following these principles should become easier as information becomes more accessible, evidence synthesis becomes more common, and the culture changes described in Chapter 11 result in increased training in evidence use. These principles underpin all the approaches described in subsequent sections.

When presenting evidence, referencing should be clear and consistent throughout the document, including in the methodology. If a recommendation is based upon expert opinion this should be stated. Chapters 2–4 describe how to assess and summarise evidence.

In some cases, it is inappropriate to give references, for example in leaflets or popular documents. In these cases, the evidence base can be alluded to by an appropriate choice of phrasing (Table 9.1). However, even if references are not included in a document, it is important that they are available should stakeholders wish to see them. Doing so increases trust and helps reduce the research needed for subsequent projects. With an increasing move to online information, these references could be included on project websites, with a related QR code or link on the leaflet.

Table 9.1 Examples of wording to describe different levels of evidence support when omitting evidence sources (modified from Downey et al., 2022)

9.3 Evaluating Evidence Use

9.3.1 Evidence-based capability maturity models

Organisations can gradually increase their capacity and their commitment toward evidence-based decision making. Capability Maturity Models (CMM) can help organisations initiate discussions on where they are now, where they want to be, and how to get there. CMMs usually depict 4–5 levels of maturity for a given discipline, outlining the characteristics applying at each level. The levels describe an evolutionary improvement from ad hoc, immature processes to disciplined, high-quality, effective processes (Stewart, 2016). Table 9.2 shows a CMM developed to help organisations improve their evidence-based practices. This can be used at various scales, but is most effective for organisations, programmes or operational regions, where it can encourage discussion about ways of improving practice (Stewart, 2016).

Table 9.2 Evidence Use Capability Maturity Model (Adapted from Stewart, 2016)

Currently, many organisations would sit at level one. Moving to the higher levels may require an increase in capacity, training or investments in processes alongside commitments to routinely use the sorts of processes described in this chapter.

9.3.2 Learning agendas

Learning agendas, also known as evidence-building plans, are a tool for organisations to coordinate and elevate the use of evidence in policy, budget preparation and decision making. In developing a learning agenda, organisations identify and prioritise the questions that, once answered, are likely to have the biggest positive impact on organisational functioning, performance, value creation and impact generation. Learning agendas can thus be defined as a set of prioritised research questions and activities that guide an organisation’s evidence-building and decision-making practices (Nightingale et al., 2018).

Learning agendas in government

In the United States, government agencies are increasingly expected to develop and use learning agendas to identify priority questions and engage with the public and other key stakeholders on evidence. Guidance and requirements for learning agendas were developed following the 2018 law, the Foundations for Evidence-Based Policymaking Act (abbreviated as the Evidence Act), which advances data and evidence-building functions in the US government and builds on more than a decade of legislative actions to strengthen federal evidence-building (GAO, 2019). Learning agendas are described by the US Office of Management and Budget (OMB) as the ‘driving force for several of the activities required by and resulting from the Evidence Act,’ including the development of annual evaluation plans and assessments of an agency’s ability and infrastructure to carry out evidence-building activities (OMB, 2019). Box 9.2 describes a process for agenda development, intended as a cycle for continuous learning. Additional resources and US agency guidance include the Evidence Act Toolkit: A Guide to Developing Your Agency’s Learning Agenda (GSA OES, 2020), the OMB memo Evidence-Based Policymaking: Learning Agendas and Annual Evaluation Plans (OMB, 2020), and www.evaluation.gov.

9.3.3 Evidence-use index

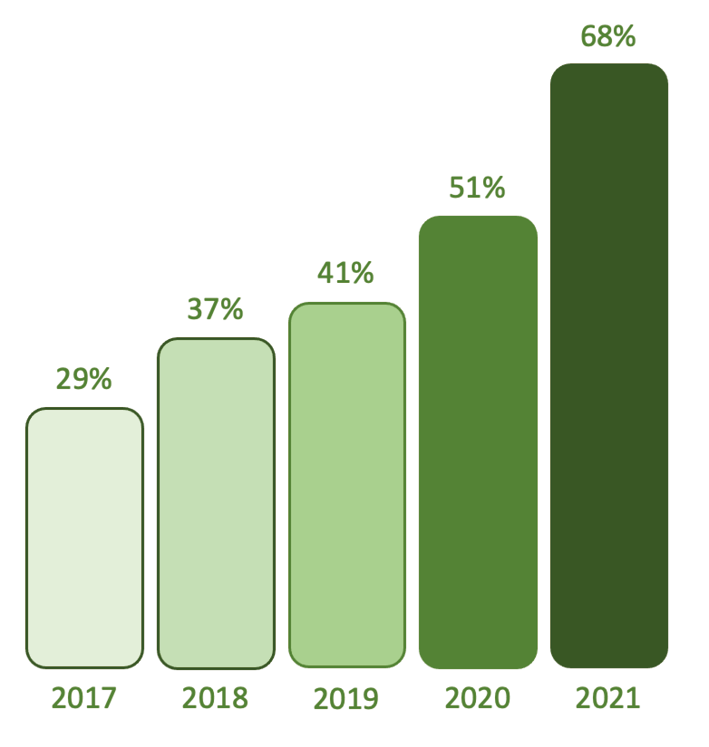

To meet the expectations and requirements established under the Evidence Act and related policies, two US agencies are routinely incorporating evidence within programme implementation. The international conservation programmes of the US Fish and Wildlife Service have proposed reporting an annual index of the percentage of financial awards implementing at least one action for which there is evidence of effectiveness. In this context, an action with evidence of effectiveness will be defined as a conservation intervention that has been categorised as Effective/Beneficial or Likely to be Effective/Beneficial by an independent public repository of evidence (e.g. Conservation Evidence www.conservationevidence.com), or similarly assessed by systematic review (e.g. CEEDER database environmentalevidence.org/ceeder/about-ceeder, or the Evidence for Nature and People Data Portal https://www.natureandpeopleevidence.org/#/).
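The index described above is a simple proportion. A minimal Python sketch, assuming each award is recorded as a list of the actions it implements and that each action's effectiveness category has already been looked up in an evidence repository; the data structures, award IDs, action names and the "Unknown effectiveness" label in the example are hypothetical.

```python
# Effectiveness categories that count as "evidence of effectiveness",
# using the category wording from the definition above.
QUALIFYING = {"Effective/Beneficial", "Likely to be Effective/Beneficial"}


def evidence_use_index(awards: dict[str, list[str]],
                       categories: dict[str, str]) -> float:
    """Percentage of awards implementing at least one action with evidence of effectiveness.

    awards: award ID -> conservation actions the award implements.
    categories: action -> effectiveness category from an evidence repository.
    Actions missing from `categories` are treated as lacking evidence.
    """
    if not awards:
        raise ValueError("no awards to assess")
    qualifying_awards = sum(
        1
        for actions in awards.values()
        if any(categories.get(action) in QUALIFYING for action in actions)
    )
    return 100 * qualifying_awards / len(awards)


if __name__ == "__main__":
    # Hypothetical lookup and awards, for illustration only.
    categories = {
        "Install nest boxes": "Likely to be Effective/Beneficial",
        "Control invasive predators": "Effective/Beneficial",
        "Erect information signs": "Unknown effectiveness",
    }
    awards = {
        "A1": ["Install nest boxes"],
        "A2": ["Erect information signs"],
        "A3": ["Erect information signs", "Control invasive predators"],
        "A4": ["Train rangers"],  # not in the lookup, so no evidence recorded
    }
    print(evidence_use_index(awards, categories))  # 50.0
```

This version counts awards rather than money. The AmeriCorps figure is instead expressed as a share of competitive grant funding, which would require weighting each award by its value.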

Another US agency, AmeriCorps, has been tracking a similar metric since 2017. Through a programme that provides strategic grants to organisations that engage in service to address local and national challenges, AmeriCorps has increasingly invested in what works by supporting programmes with moderate to strong levels of evidence. From a baseline of 29% in 2017, the percentage has increased each year and, by 2021, 68% of competitive grant funding was invested in programmes where interventions were underpinned by evidence (Figure 9.1). AmeriCorps uses evidence to allocate programme resources effectively, such that over time, ‘more grant dollars were awarded to applicants with strong and moderate levels of evidence… for proposed interventions, and fewer grant dollars were awarded to applicants with little to no evidence of effectiveness’ (CNCS, 2020).

Figure 9.1 The percentage of AmeriCorps State and National (ASN) competitive funding awarded to projects with moderate or strong levels of evidence of effectiveness. (Source: authors using data from CNCS, 2020)

These are innovative approaches using indices for measuring evidence use within organisations. Such metrics of evidence use can help drive improvements across an organisation and are likely to encourage further experiments and collation of evidence.

9.4 Evidence-Based Species and Habitat Management Plans

A management plan is a fundamental tool to translate objectives into practice. The process of writing a management plan allows management options and targets to be explored and defined, and the resultant plan guides practical interventions. A management plan is also an important record of intentions, which can be referred back to in the future and retained through staff successions to ensure continuity in management. It may also be a requirement of funding. Using evidence appropriately throughout the creation of the management plan helps substantiate the rationale for management decisions and ensures that the plan is robust, well informed and has the best chance of delivering its objectives.

The following sections describe key components in the process of creating an evidence-based management plan.

9.5 Evidence-Based Guidance

Guidance provides a practical means of obtaining synthesised advice on which interventions are effective, and how to implement them, often without the need to undertake time-consuming literature searches (Brancalion et al., 2020). Studies have shown that practitioners often assume that guidance is based on the scientific literature (Walsh et al., 2019), but this may not be the case.

A recent review of UK and Irish conservation guidance documents revealed a range of problems. These included a lack of referencing to make explicit what evidence had been used; insufficient evidence to support the rationale for recommendations; outdated guidance (often over ten years old); a lack of clear methodology and explanation of how recommendations were derived; and an absence of consideration of uncertainty in the key evidence (Downey et al., 2022).

To improve the process, Downey et al. (2022) provide a detailed explanation of how guidance creation can become more evidence-based. The main idea is to adopt the principles shown in Box 9.1 so that the justification of all main claims is transparent and uses the types of phrasing suggested in Table 9.1. This would allow the reader to know what underpins the statement and where the advice is more speculative. One approach is to provide a user-friendly version, alongside a link to a more in-depth report that details the methodology and provides the underlying evidence (e.g. Cruickshanks, 2018).

As an example of how evidence can be collated and converted into guidance, the European Commission sought to support EU Member States in conserving farmland birds and wider biodiversity. Conservation of farmland birds is a Birds Directive obligation that is essential to the success of the EU Green Deal and the EU Biodiversity and Farm to Fork Strategies. The Birds@Farmland Initiative was launched in 2020 to co-develop evidence-based conservation schemes for farmland birds and their habitat with experts, agricultural and environmental authorities, farmers, NGOs and other relevant stakeholders. Development of the schemes followed a standardised approach, and Member States were invited to consider these schemes for their National Strategic Plans under the EU’s Common Agricultural Policy (CAP), instruments that allow ambitious Member States to improve the environmental performance of the CAP (Pe’er et al., 2019).

Twenty-two conservation schemes were developed in ten Member States (Austria, Bulgaria, Czech Republic, Finland, France, Germany, Hungary, Italy, Portugal, and Spain), with each scheme focusing on one of ten specific agricultural systems or one of fifteen specific flagship farmland bird species (https://bit.ly/farmlandbirds). The schemes outlined the measures that could be adopted, their technical and financial implications, and their likely ecological and social consequences. The availability of collated evidence was essential for the effective co-development of this guidance. As shown in Table 9.3, where evidence was not readily available, literature searches and expert opinion helped fill the gaps.

Table 9.3 Evidence used during different stages of creating agricultural schemes to benefit biodiversity including whether the necessary information is already collated and easily available (YES, PARTLY, NO) and how evidence gaps were filled.

9.6 Evidence-Based Policy

The advantages of basing policy on evidence may seem obvious, but evidence is not always used, and when it is, its use can be selective. Evidence that supports a popular policy may be stretched beyond its scope, while contrary evidence can be ignored or even suppressed (e.g. Hutchings, 2022). This is because policy-making is inherently political rather than scientific or technical (Bass et al., 2021). There is, however, increasing pressure from society, through traditional and social media and citizen activism, and from politicians themselves, for policies to be grounded in sound science (reviewed in Parkhurst, 2017). Whilst scientific evidence usually informs policy, it does not necessarily determine policy development (Gluckman et al., 2021).

One of the major advantages of using evidence, from a policy point of view, is that any intervention that evidence suggests has worked in the past will be more appealing to risk-averse policy makers than untried approaches. Furthermore, solid evidence for a problem or intervention provides justification should decisions be questioned or if the policy is ineffective.

Chapter 1 describes the policy hexagon, in which evidence is refined as it is incorporated into decision making.

Suggestions for enhancing the uptake of evidence in policy creation include the following:

- Identify the broad change sought (e.g. conserving a species or site).

- Identify the specific change sought (e.g. species listed as protected, planning decision changed, modification of agri-environment scheme).

- Take advantage of any policy windows (Rose et al., 2020) resulting from an identified problem, public concern, a pledge to act or legislative horizon scanning to identify forthcoming legislation (Sutherland et al., 2022).

- Legislation and regulation typically follow a consistent series of papers and decisions. Understand the process, including which reports or papers underpin each stage, and how to embed evidence in these. For example, in the UK Parliament, ‘Green Papers’ set out thinking and options in order to seek the views of interested parties, ‘White Papers’ present proposals for legislative changes, and a ‘Bill’ is the proposed legislation.

- Determine the key opinion formers, both inside and outside the policy-making institution, as well as who makes decisions.

- Understand stakeholder motivations, relationships and power dynamics, the ‘political economy’ of decision making, in order to improve understanding of when, how, and by whom information is used (Hou-Jones et al., 2021). For example, policy makers need to show change within the timescale of their mandate.

- Get into the decision maker’s mindset. What is politically advantageous? What will be seen to be popular? What is an appropriate response to current concerns? What will be seen to appeal to a higher self and not appear selfish? What is likely to be financially beneficial to society?

- Identify where evidence can make a difference such as identifying problems, elaborating on the consequences of issues or showing the effectiveness, or not, of possible actions.

- Change typically requires a mix of publicity to drive a concern, lobbying through credible organisations and attracting political support.

- Ensure language used fits local cultural norms (Barnes and Parkhurst, 2014; Rose, 2014).

- Build on successes. Evidence of successful interventions may also reinforce policy makers’ appetite for continued investment.

At a global level, key drivers of conservation policy include the Convention on Biological Diversity (CBD), the Intergovernmental Science-Policy Platform on Biodiversity and Ecosystem Services (IPBES) and the International Council for the Exploration of the Sea (ICES). Each of these produces reports that are heavily grounded in evidence and transparent, with methods published in multiple languages and sources of data clearly referenced. The assessments are often thousands of pages long. Although such assessments aim to provide authority, Sutherland (2013) pointed out that they can undermine the venture if they contain inaccuracies or are unclear about the reliability and relevance of the underlying evidence sources. One approach is to break the assessment down into more focused reports on the key issues and carefully assess the evidence for each, using some of the processes described in earlier chapters on evidence assessment, use of experts and decision making.

9.7 Evidence-Based Business Decisions

The success of any business is determined by the effectiveness of the strategy it follows. The strategy sets out the business’s vision and objectives. Management plans and policies can be set to support the delivery of these objectives and inform business decision making. An appropriate evidence base can inform these plans and help them deliver the stated objectives. This is the case in standard business operations, but also when considering environmental and climate-related impacts and actions.

Biodiversity is rising on the business agenda (WEF, 2022). Although many companies are still not fully addressing the biodiversity impacts of their operations, it is becoming increasingly common for businesses to have high-level sustainability strategies that incorporate biodiversity impacts and actions (Addison et al., 2019; de Silva et al., 2019). These strategies may lay out what a company’s impacts on biodiversity are, and what actions it is taking to mitigate those impacts and restore biodiversity values. This can include impacts through direct operations, supply chains and investments. Through its guidance, methods and tools, the Science Based Targets Network (SBTN) aims to help companies determine what they can do today to begin aligning with science (or evidence) to ensure they are doing their part for an equitable, net-zero, nature-positive future.

A company may also have specific projects or activities that require a detailed assessment of impacts (e.g. through the environmental impact assessment process), and the creation of management plans for biodiversity. For example, a company may have a management plan to enhance biodiversity in an urban office site, a programme focused on taking action to reduce the impact of upstream supply chains on biodiversity, or a management plan to minimise and mitigate the impact of new infrastructure on biodiversity. Such strategies and plans will vary depending on the sector. Companies can also integrate biodiversity considerations into wider environmental programmes such as nature-based solutions for climate change, or developing new investment opportunities that have positive impacts on biodiversity and climate. With growing ambitions and commitments around net zero and nature restoration, businesses are looking for approaches that will support them in delivering on their objectives.

However, there are some barriers to evidence use in this sector (CISL, 2022): much of the relevant science is still developing, as are the methodologies and metrics. Executive management often feels there is insufficient data, information and evidence to make decisions. There is not always sufficient internal knowledge of biodiversity, which can slow acceptance. This is amplified by the fact that alternative, environmentally beneficial approaches can be perceived as complex, costly, uncertain, or unable to deliver compared to traditional solutions.

Drivers for evidence use

There are a number of possible drivers for businesses to embed evidence in their environmental strategies and programmes. However, these drivers are not shared across all sectors, all value chains within a sector, or all functional areas and leaders within a business.

- Effective action — A clear evidence base can support a business in determining the appropriate steps to meet environmental goals (for example, reducing carbon emissions, or creating resilient supply chains that secure future supplies of raw materials). A company may want to reduce its impact on biodiversity, and an evidence base can be used to identify the most effective and efficient practice or approach to achieve this.

- Lowered risk and realised opportunities — Action to mitigate biodiversity impacts in some sectors is driven by the operational, financial and reputational risks associated with negative impacts on nature. By increasing the effectiveness of proposed mitigation actions, evidence use can help lower these risks. Other sectors may be driven by operational opportunities associated with biodiversity restoration (e.g. improving pollination of agricultural crops, improving water quality), and using evidence can help realise these opportunities.

- Providing leadership, and raising the bar — Some companies will want to lead and raise the bar within their sectors. This could be something as ambitious as wanting to transform a sector such that it delivers sustainability opportunities and biodiversity benefits across a landscape. Evidence, decision tools, and sensible use of experts are needed to undertake this transformation. For example, in agribusiness it is often not clear what interventions can be undertaken at the farm and landscape level to successfully reduce the risks associated with nature degradation in order to deliver a more sustainable supply chain.

Using evidence in business decisions

White et al. (2022a) set out principles for incorporating evidence into business biodiversity strategies, which we briefly summarise below. These principles can help ensure that business-biodiversity actions to mitigate impacts and restore biodiversity are based on evidence. They can also be used by consultants working with businesses to ensure that recommendations are based on the best available information.

- Collating evidence of status, impacts, and actions — Relevant evidence, from a range of sources (see Chapter 2), should be collated and reviewed to inform proposed actions. This should include information on the status of biodiversity, the impacts of business activities (including through direct operations, upstream and downstream supply chains, and investments), and the effectiveness, costs, acceptability, and feasibility of the proposed action.

- Prioritising action based on evidence — The collated evidence should be used to decide upon and prioritise actions as part of strategies and environmental programmes, to ensure they are likely to be effective at delivering action to avoid and minimise impacts, and restore biodiversity. The rationale behind taking (or not taking) an action should be documented.

- Transparency — Information compiled on the status of biodiversity, negative impacts of business activities, the evidence base underlying mitigation and restoration actions, and the observed impact of positive actions should be transparently reported.

- Monitoring — Where feasible, actions taken should be monitored to assess their impact, and changes made where unexpected or ineffective outcomes are occurring. This is particularly important where the evidence base behind an action may be limited or if a business may carry out similar projects in the future

- Embedding evidence — Evidence use can be embedded across the organisation. This should involve engaging colleagues from across the business in evidence use; a consistent evidence base can offer clarity and help streamline decision-making, for example on new investments or new products. Doing so can help remove barriers to action, such as scarcity of data and knowledge on particular subjects, difficulty in identifying the concrete benefits and financial implications of biodiversity actions, and the complexity of working with internal and external partners.

Businesses move at a fast pace and often want to make decisions swiftly. There is a tension between wanting to make the right decision and needing to make a decision within a given timeframe. An 80–20 rule can be applied, whereby a decision proceeds once roughly 80% of the information needed to inform it is available: it is not perfect, but it is near enough to enable a business to move forward. Expanding the evidence bases from which businesses can draw would be advantageous and could give them confidence that they are making decisions based on scientifically rigorous information.

9.8 Evidence-Based Writing and Journalism

Science is a rich source of stories on a wide variety of public-interest subjects, from climate change and energy to electronic cigarettes and vaccines. Over time, a body of evidence builds up to establish the facts. Journalism, however, often reports individual pieces of evidence in isolation and without clarity about the uncertainty of the results. This is especially true for controversial subjects. Much journalism today is written to shock and to hold readers’ interest for as long as possible. Whilst this can lead to entertaining headlines, many articles published every day do not use or communicate evidence correctly, and the resulting misinformation can have serious consequences.

There is an important distinction between evidence-based writing and conventional writing. Accuracy is important in all kinds of journalism, but in many science stories it can be particularly misleading, and even harmful, if reporting is based on what people said or published without considering the quality and the weight of the evidence. Failing to consider the quality of the evidence in the infamous Wakefield ‘MMR and autism’ study, and the weight of evidence on climate change, did immense harm to public understanding of two of the defining issues of our times.

A key element of evidence-based journalism is making sources as clear as possible. If material comes from a journal paper or report, provide enough detail to make it easy to find (such as the authors and journal name, the DOI, or a hyperlink if online).

There are a number of things journalists should do when reporting the science behind a story.

When researching a study:

- Consider the strength of the evidence, as described in Chapters 2 and 3. Is this a peer-reviewed paper in a reputable journal, a report from a recognised scientific body or a conference abstract with no data available for scrutiny? Not all science has equal weight.

- Take care with preprints. Preprints are scientific papers that have been posted online before external peer review, meaning they have not yet been scrutinised by the wider scientific community. Whilst many of these may be excellent, others will never make it into the published body of scientific literature. Journalists should take even more care with conference abstracts, which usually do not even have data available. If such work is used as a source for a story, it should be made clear that it has not been peer-reviewed or published.

- Look at the author list, especially if the claims strike you as hyperbolic. A group of authors with a track record of solid science may carry more weight than one or two individuals with a history of campaigning on the subject.

- Try to seek the opinions of other scientists actively researching the same field. If new findings attract serious scientific concerns, these need exploring. Other researchers can also offer clues about the trustworthiness of an author or journal and help establish where the weight of evidence lies.

- Pay attention to the study design and consider whether the statistical analysis is appropriate, whether the sample size was sufficient, whether the trial was properly blinded and randomised, whether there are any major limitations, and whether the discussion extrapolates beyond the data. Not all of these will be relevant to every study, but they are the kinds of things other scientists should be able to spot. Report these in the article where possible. Sutherland et al. (2013) provide a list of twenty tips for interpreting scientific claims that covers many common misinterpretations.

- Correlation does not equal causation. Be especially careful of this when reporting observational studies, as they generally cannot establish cause. Such studies are very common and are useful for generating scientific hypotheses or suggesting where future research should be directed, but they often prove nothing and can be misleading.

When reporting a study:

- Try to frame findings in the context of other evidence. It is important to investigate the current scientific consensus on a subject; results that challenge or overturn the received wisdom should be handled with care, especially if the findings could change or reinforce public beliefs or behaviour on an important subject. Extraordinary claims need extraordinary evidence!

- Always try to give a sense of the stage of the research (are these early provisional findings, or are they the final conclusions of a long, major trial?).

- In all cases, represent the overall findings of a study rather than focusing on extreme values, using ‘up to’ figures, or cherry-picking data. It is important to distinguish clearly between the actual findings of a study and interpretation or speculation.

- Headlines should not mislead the reader about a story’s contents, and quotation marks should be used only for direct quotes.

- Be clear about any uncertainties, because no scientific paper can ever answer all the questions. Take note of whether the authors themselves have been candid about the uncertainties and limitations in their own paper. Make clear the distinction between findings, interpretation and extrapolation.

9.9 Evidence-Based Funding

Our ability to meet conservation targets is highly dependent on how biodiversity conservation is funded. Because of this, funders have the potential to drive major decisions in conservation. However, it is surprisingly difficult to gather information on how much is spent on conservation projects and where the money comes from. The data are scarce, and those that are available are often incomplete (Waldron et al., 2013; White et al., 2022b). In addition, it can be difficult to assess whether funding has been given primarily for a biodiversity-related project or for a project that benefits biodiversity in addition to its primary goal, such as development (Miller, 2014). It was recently estimated that, globally, we spend $100–180bn a year on nature conservation, while previous studies suggest that funding needs to be an order of magnitude greater (McCarthy, 2012) and better distributed (Waldron et al., 2013) to meet targets. Understanding the success of investing in biodiversity conservation has been limited by the lack of evidence showing how investments in interventions measurably affect biodiversity (Possingham and Gerber, 2017). Given that funds are limited, ensuring that the available funding goes to the right places is vital. For this to be possible, we need to look at how funding is awarded and what approaches may help to improve this process.

Evidence-based grant-giving could be the gateway to effective, evidence-based conservation decision making. Under an evidence-based system, funds would be awarded to projects and programmes judged on the evidence of their effectiveness, potential effectiveness, or ability to fill a knowledge gap. This approach would be cost-effective, reduce risk, and promote positive societal change; it is therefore in the interest of the giver, the grantees, and society as a whole.

The ability to move to an evidence-based funding approach may be limited by the capacity of the funding organisation. Whilst some organisations may be able to spend money and time investigating the evidence behind projects themselves, many will not. Having applicants complete this process, supported by free and easy-to-use resources (e.g. Conservation Evidence, Applied Ecology Resources) and guidance (provided in this chapter), significantly reduces this challenge. This simply requires grant givers to ensure that their grantees show their use of evidence, either through a section on the application form or by demonstrating how they will monitor and incorporate evidence in all aspects of their project, from planning to the dissemination of results. As well as helping funders, this process should encourage grantees to scrutinise their own work and challenge their assumptions. In addition, if funders require grantees to report back their findings, whether or not the results are successful, a better record of where funding has been effective can be kept, preventing future resources from being wasted on projects that are unlikely to work (Catalano et al., 2019).

How could this be done?

Whilst the solution seems simple and obvious, the implementation may not be straightforward. There are now many platforms available to freely access evidence on a variety of topics (conservationevidence.com/content/page/127). This makes it easier for grantees to search for and find evidence, if it exists, in their subject area.

Box 9.3 shows different ways that funders can ask grantees to provide evidence at different stages of their projects. These were developed by a group of conservation funders (Parks et al., 2022).

9.9.1 Assessing evidence-based funding applications

When assessing the evidence base provided by applicants, it is important to remain aware that reviewing evidence may be a new process for applicants and it is likely to be most useful if the process is supportive and collaborative rather than a strict judgement of the evidence base. It is particularly important to ensure the process is fair to projects working on species, ecosystems or regions where evidence is scarce.

The aim of asking applicants to describe the evidence base for their proposal is to aid the applicant, encourage transparent decision making, and improve the effectiveness of the final project. Therefore, it may, or may not, be appropriate for evidence use to be a criterion on the assessors’ score sheet; the main aspiration is to ensure that applicants have gone through a rigorous process as part of their decision-making, even if the formal scientific evidence is sparse.

Some key questions to consider when reviewing the application are as follows:

1. If the project proposal is delivered as described, would it be a good investment?

This question is asking about the main objectives of a proposal and whether they are a priority for funding.

- Is the target species/habitat/ecosystem a conservation priority?

- Are the proposed gains in the target species and/or habitats sufficient to warrant the funding requested (i.e. is it cost-effective)?

- Is there suitable monitoring in place to assess and demonstrate whether the project is meeting its proposed objectives?

- What is the legacy of the project? Will the benefits be lost as soon as funding ceases? Is the local community involved in a way that is more likely to ensure longevity?

Sometimes the objective may be easily justified, such as the protection of a globally threatened species in one of its few remaining sites. In other cases, evidence to support the local, regional or national importance of the target needs to be provided. Such evidence may include issues such as connectivity of habitats or populations or the delivery of public benefits, such as clean water or access to nature for local communities.

2. Is the proposal likely to succeed in achieving the described outcome?

This question relates to the likelihood that the proposal, and the actions described within it, will lead to the desired outcomes.

- Are the intended outcomes well defined?

- What actions have been proposed in order to achieve those outcomes?

- After reflecting on the available evidence, and its relevance, are the suggested actions likely to be effective for this project?

- Are the necessary resources available, for example water sources, saplings, animals for grazing, or individuals for reintroduction?

3. Is there capacity to deliver the project?

This question relates to the likelihood that the organisations, institutions, structures, and people involved in the proposal are in place to enable successful delivery.

- Are people with the skills needed available and willing to contribute to delivery of the project?

- Is there agreement, acceptance and involvement within the local community/across relevant stakeholders?

- Is the funding requested adequate for successful delivery of the proposal?

4. Is there capacity to learn?

- Does the proposal suggest processes and capacity are in place to monitor progress and to learn and adjust plans accordingly?

If applicants are able to demonstrate that they have considered the evidence base behind key decisions in planning the objectives and implementation of their proposal, this will provide assurance of transparent and carefully thought-out planning processes, increasing the chances of delivering a successful conservation project.

Table 9.4 gives examples of potential text for describing how the evidence has been checked.

Table 9.4 Example text describing a range of approaches for evidence checking.

9.9.2 Using Evidence to Learn from Funding Portfolios

Funders can play a vital role in collecting and providing evidence, and often have a unique opportunity because of the sheer volume of evidence available across their portfolios. The evidence may exist in the form of primary data from grantees’ field projects, published documents and literature from funded projects and programmes, or project impact and effectiveness reporting. Collecting and sharing this evidence can strengthen the evidence base on many topics, especially organisational ones, for which evidence is thin.

The MAVA Foundation has adopted a process to collect, assess, and share available evidence from its portfolio. The foundation has been funding conservation efforts for over 25 years and closes in 2022. With the closing date approaching, MAVA was keen to leave behind tangible conservation outcomes. The foundation also wanted to promote evidence‐based conservation and take responsibility for making its insights and learnings accessible to the broader conservation community. While collecting the evidence for selected learning topics, the foundation sought to bring together key actors in evidence-based conservation to compare and align their approaches and concepts, and ultimately to ensure broader uptake of a common evidence-based conservation practice across the discipline.

Building on a recent approach for defining and using evidence in conservation practice (Salafsky et al. 2019, 2022), MAVA and its partners Foundations of Success and Conservation Evidence adopted a five-step process to solicit learnings on a range of topics.

Step 1. Define the learning topic

Collecting evidence retrospectively from a large portfolio of grants requires a clear focus. The full portfolio of MAVA grants was grouped by conservation action, using the IUCN standard classification for conservation actions (IUCN 2022). A shortlist of actions with many grants was selected, and the final list was based on perceived relevance to the wider conservation community and on the project team’s interest in exploring those topics. This resulted in four topics: 1) flexible conservation funding; 2) partnerships and alliances; 3) capacity building; and 4) research and monitoring.
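The shortlisting in Step 1 is essentially a tally of grants per action category followed by a threshold. A minimal Python sketch, assuming a hypothetical list of grants already tagged with a conservation action category (the labels below are illustrative, not the official IUCN codes, and the threshold is arbitrary):

```python
from collections import Counter

# Hypothetical grant records: (grant_id, conservation action category).
# Category labels are illustrative only, not the official IUCN codes.
grants = [
    ("G001", "Capacity building"),
    ("G002", "Research and monitoring"),
    ("G003", "Capacity building"),
    ("G004", "Partnerships and alliances"),
    ("G005", "Land/water protection"),
    ("G006", "Research and monitoring"),
    ("G007", "Capacity building"),
    ("G008", "Partnerships and alliances"),
]


def shortlist_actions(records, min_grants):
    """Return (category, count) pairs for categories with at least
    `min_grants` grants, from most to fewest grants; ties keep the order
    in which categories were first seen."""
    counts = Counter(action for _, action in records)
    return [(action, n) for action, n in counts.most_common() if n >= min_grants]


shortlist = shortlist_actions(grants, min_grants=2)
for action, n in shortlist:
    print(f"{action}: {n} grants")
```

The final choice among shortlisted actions (relevance to the wider community and the team’s interests) was a judgement call, so it is deliberately left out of the code.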

Step 2. Design learning questions

A theory of change (see sections 5, 4.7.5 and 7.6.5) for each topic captured the pathway from conservation intervention to desired outcome. This helped to formulate specific learning questions and associated assumptions that could be tested with evidence.

Step 3. Collect evidence

Evidence to test assumptions came from a range of sources, including MAVA grant proposals and reports, questionnaires distributed to grantees, systematic searches of the Conservation Evidence database, and exploratory searches of the wider literature.

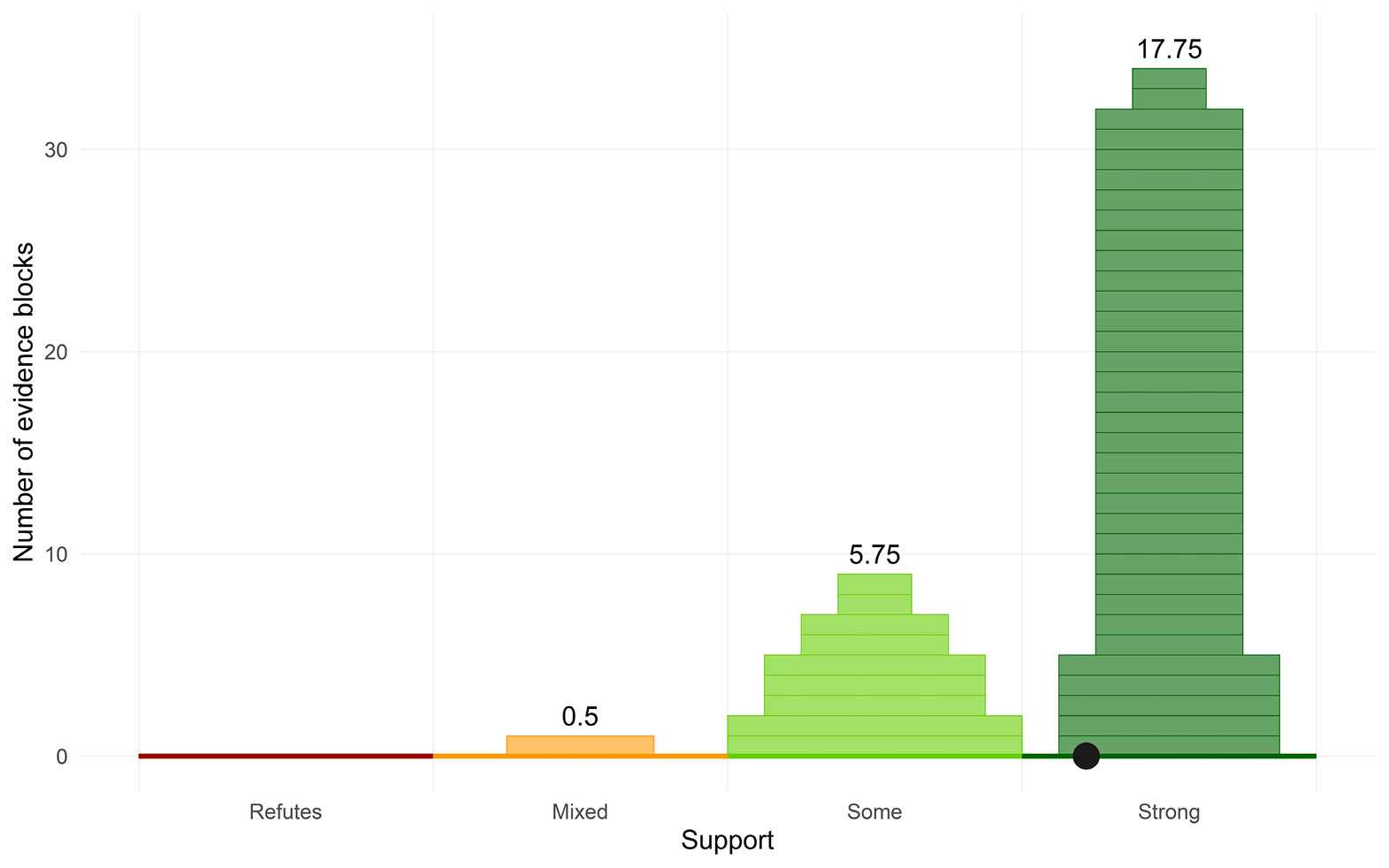

Step 4. Assess evidence

Each piece of evidence was assessed on two criteria: 1) whether it supported or refuted the assumption, and 2) its weight. The assessment of weight was based on the reliability of the information contained within the evidence and the relevance of that information to the assumption in question.

Figure 9.2 shows all the combined evidence pieces for the assumption ‘Organisations use flexible funding to invest in organisational development and/or maturity (that is not affordable otherwise)’, displayed using a ziggurat plot (see Section 4.6.1).

Figure 9.2 A ziggurat plot showing strong support for the assumption that organisations use flexible funding to invest in organisational development and/or maturity. Each piece of evidence is a horizontal block whose width represents its weight. The maximum potential weight of a single block is one. The number above each pile of evidence blocks shows the total evidence score for that pile. The filled black point is a weighted mean, and represents the balance of evidence for the assumption. (Source: authors)
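The ziggurat summary can be reproduced with simple arithmetic: the total score of each pile is the sum of its block widths, and the balance point is the weighted mean of the support levels. The Python sketch below assumes, for illustration only, a support scale from -2 (strongly refutes) to +2 (strongly supports) and a weight equal to reliability multiplied by relevance, each rated between 0 and 1 so that no block exceeds a weight of one. The scales actually used by MAVA and its partners may differ, and the evidence pieces are hypothetical.

```python
from collections import defaultdict

# Hypothetical evidence pieces for one assumption. Assumed scales:
#   support: -2 (strongly refutes) .. 0 (mixed) .. +2 (strongly supports)
#   reliability, relevance: 0..1, so weight = reliability * relevance <= 1
evidence = [
    {"source": "grant report A", "support": 2, "reliability": 0.8, "relevance": 1.0},
    {"source": "grantee survey", "support": 1, "reliability": 0.5, "relevance": 0.8},
    {"source": "literature B", "support": 2, "reliability": 1.0, "relevance": 0.5},
    {"source": "grant report C", "support": -1, "reliability": 0.5, "relevance": 0.4},
]


def weight(piece):
    # Combining reliability and relevance by multiplication is an assumption.
    return piece["reliability"] * piece["relevance"]


def pile_totals(pieces):
    """Total evidence score (sum of block widths) for each support level."""
    totals = defaultdict(float)
    for p in pieces:
        totals[p["support"]] += weight(p)
    return dict(sorted(totals.items()))


def balance_of_evidence(pieces):
    """Weighted mean support level: the filled point on the plot."""
    total_weight = sum(weight(p) for p in pieces)
    if total_weight == 0:
        raise ValueError("no weighted evidence to summarise")
    return sum(p["support"] * weight(p) for p in pieces) / total_weight


print(pile_totals(evidence))
print(f"balance of evidence: {balance_of_evidence(evidence):.2f}")
```

For these made-up pieces the balance point is about 1.47, between ‘supports’ and ‘strongly supports’, while the pile totals show how much weight sits at each level.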

Step 5. Draw conclusions

The last step was to use the summary plots, along with a detailed consideration of all evidence pieces, to draw conclusions. This highlighted important knowledge gaps, where the available evidence was not sufficient for drawing conclusions.

The findings of the evidence assessment, key learnings, and further details on the five-step approach are provided at https://conservation-learning.org.

9.10 Evidence-Based Decision-Support Tools

Decision-support tools can help decision makers by leading them through logical decision steps. A number of decision-support tools are available to support evidence-based decisions in conservation, ranging from complex models to simple software specifically designed to be used by non-experts (Dicks et al., 2014; Christie et al., 2021). Extending the range of such tools is key to delivering evidence-based practice at scale for a range of communities.

Some of these tools embed evidence behind the scenes, drawing on a specific data set (e.g. the Cool Farm Biodiversity Metric, section 9.10.1), whilst others allow users to search for the relevant data themselves (e.g. the Evidence-to-Decision Tool, section 9.10.3). It is important that these tools also incorporate other information, such as values, resource availability, and stakeholder views, and that they are transparent and auditable to ensure stakeholder confidence. Here, we provide examples to illustrate different ways of embedding evidence in decision-support tools.

9.10.1 Cool Farm Tool

The ‘Cool Farm Tool’, owned and managed by the Cool Farm Alliance, is a suite of online software tools that provide multi-metric sustainability assessments for farms. The tools provide a quantitative or semi-quantitative assessment of water footprint, greenhouse gas emissions and biodiversity management at the farm scale, based on the best available evidence. They are designed to be easy for growers, agronomists and suppliers of agricultural products to use, freely accessible to individual growers, and globally applicable. The Cool Farm Tool is widely used in global supply chains, enabling hundreds of thousands of growers worldwide to make more informed on-farm decisions that reduce their environmental impact.

The greenhouse gas and water footprint calculators in the Cool Farm Tool embed evidence by using models, emissions factors and approaches from published literature, where possible those recommended by the Intergovernmental Panel on Climate Change (IPCC) or approved for international standards (Hillier et al., 2011; Kayatz et al., 2019). Such evidence is not always entirely relevant (see section 9.11), and biases in the evidence can lead to inaccurate calculations if tools are used in contexts that the underlying evidence does not represent. For example, Richards et al. (2016) showed that two greenhouse gas calculators, including the Cool Farm Tool, frequently over-estimated emissions from farms in the tropics and incorrectly predicted the direction of change in response to changes in management in 41% of cases. Richards et al. argued that this was because the majority of data incorporated into the tools (e.g. over 90% of studies analysed for N₂O fluxes following fertiliser application) came from temperate, not tropical, agricultural systems (Richards et al., 2016).
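The direction-of-change check described above amounts to comparing the sign of each predicted change with the sign of the measured change. The Python sketch below shows that calculation on hypothetical paired values; it is not the code used by Richards et al. (2016), and skipping cases with exactly zero change is our own assumption.

```python
# Hypothetical paired values: change in farm emissions after a management
# change, as predicted by a calculator and as measured (arbitrary units).
cases = [
    (120.0, 80.0),
    (-40.0, 15.0),
    (60.0, -10.0),
    (-25.0, -30.0),
    (10.0, 5.0),
]


def direction_mismatch_rate(pairs):
    """Fraction of cases in which predicted and observed changes point in
    opposite directions. Cases with exactly zero change are skipped (an
    assumption made for this sketch)."""
    compared = [(p, o) for p, o in pairs if p != 0 and o != 0]
    if not compared:
        return float("nan")
    wrong = sum(1 for p, o in compared if (p > 0) != (o > 0))
    return wrong / len(compared)


print(f"{direction_mismatch_rate(cases):.0%} of cases predicted the wrong direction")
```

Here two of the five made-up cases disagree in sign, giving 40%. A tool can have small average errors yet still get the direction wrong often, which matters most when the output is used to choose between management options.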

The Cool Farm Biodiversity Metric (https://coolfarmtool.org/coolfarmtool/biodiversity/) takes a different approach from that used for greenhouse gas measures. It embeds evidence for the effects of specific management interventions on biodiversity by scoring actions for their expected benefits to ‘general biodiversity’ (reflecting species or habitat richness) and to a set of defined ‘species groups’, or biodiversity targets. The scores are partly drawn from the Conservation Evidence (https://www.conservationevidence.com) database of evidence assessments, and thus embed the rigorous subject-wide evidence synthesis method (section 3.3). The set of actions and species groups is selected in partnership with stakeholders, following participatory methods described by Macleod et al. (2021, 2022), for a particular biome or set of biomes. The tool has different versions for temperate forest systems, Mediterranean and semi-arid systems, and (still in development) tropical forest systems. A similar tool is available for New Zealand farms (Macleod et al., 2021).

Expert judgement (Chapter 5) also contributes to the scores for each action in the Cool Farm Biodiversity Metric, for the simple reason that the Conservation Evidence database does not have complete coverage of farm management actions. For example, it does not consistently cover agronomic practices intended to reduce the impacts of agrochemical use or to enhance agrobiodiversity (crop and livestock genetic diversity), and many other actions have insufficient evidence given how widely they are practised. This mismatch between the evidence generated by research and the evidence desired by practitioners has been called ‘evidence disparity’ and is a persistent problem in evidence-based conservation (Macleod et al., 2022).

The approach to embedding evidence in the Cool Farm Biodiversity Metric differs from the other metrics in the Cool Farm Tool largely because of the complex nature of biodiversity. Biodiversity outcomes are more varied and context-dependent than greenhouse gases, or water, both of which can be measured, predicted and reported in relatively simple units (tonnes of CO2-equivalent, or litres of water used, for example). Predictive models of biodiversity impacts or responses to land management, or even simplified numerical relationships between land management and outcome, are hard to build and even less likely to be reliable for biodiversity than for greenhouse gases or water. Such models are being actively developed to deal with certain aspects of biodiversity (e.g. Newbold et al., 2015; Duran et al., 2020; Schipper et al., 2020). To be embedded in an easy-to-use decision support tool, such as Cool Farm Biodiversity Metric, these models will need to be fully accessible and robust to any context, without vast data and processing demands.

9.10.2 Miradi

Miradi (https://www.miradishare.org; named after a Swahili word meaning ‘project’ or ‘goal’) has been developed over the past two decades to support the design, implementation, monitoring, and adaptive management of conservation and natural resource management projects at all scales. Miradi supports the assessment of the status of conservation targets, contributing factors and threats, the setting of conservation goals, and clarifying and tracking how actions are leading to desired outcomes and impacts. Miradi helps teams create diagram-based situation models and Theories of Change that can be used to model assumptions about a current situation and how an organisation’s strategies will lead to measurable impacts. Miradi helps document the information used throughout the planning cycle (preventing the loss of knowledge due to staff turnover) and identify knowledge gaps where more information is needed. Evidence can be documented in Miradi using open text for most of the factors that make up situation models and theories of change (e.g. targets, threats, strategies, results), as well as using a standard evidence typology that is more easily used for data analysis (e.g. indicator viability ratings and measurements, threat ratings, and the selection of strategies, goals, and objectives). An upcoming release of the software will allow users to document analytical questions and assess the degree of support, weight, and confidence in assumptions based on the available evidence (Salafsky et al., 2019). Miradi also allows users to query the back-end relational database, analyse data across projects and information systems, and produce dashboards to inform evidence-based decision making.

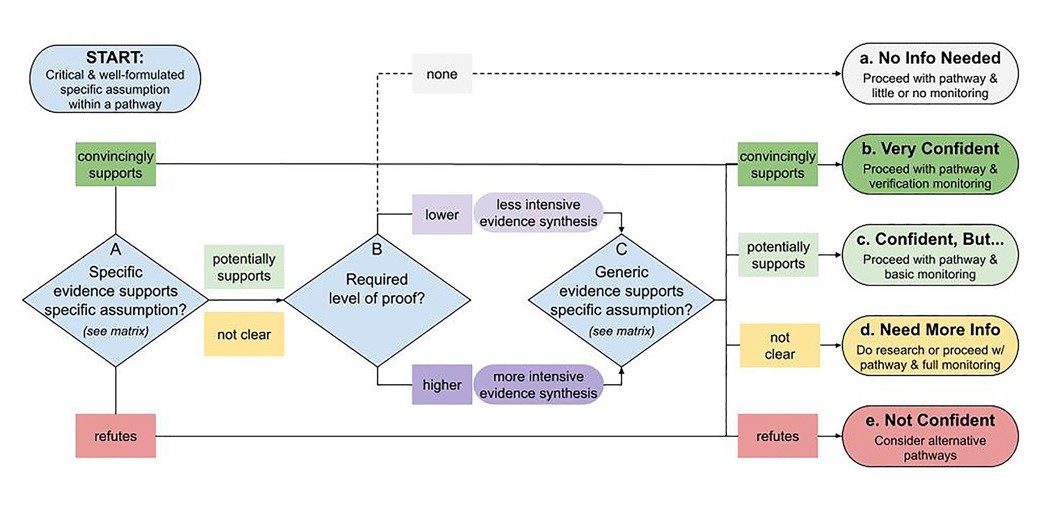

The Conservation Standards (https://conservationstandards.org/) provide a framework for using evidence in decision-making, and the supporting Miradi software (https://www.miradishare.org) can be used to manage information and to define processes (Salafsky et al., 2019). Business Process Management can be used to discover, model, and improve business processes (Dumas et al., 2013). Simple models can also be used to support decisions on which actions to implement depending on the available evidence (see Figure 9.3).

Figure 9.3 Decision tree for using evidence in assessing a potential conservation action. (Source: Salafsky et al, 2022, CC-BY-4.0)

9.10.3 Evidence-to-Decision Tool

This tool (www.evidence2decisiontool.com) is a template designed to make clear the reasoning and evidence behind conservation management decisions (Christie et al., 2022). The tool has three major steps: (1) define the decision context; (2) gather evidence; and (3) make an evidence-based decision. In each step, practitioners enter information (e.g. from the scientific literature, practitioner knowledge and experience, and costs) to inform their decision-making and document their reasoning. The tool packages this information into a customised downloadable report. This report can be embedded in other material, stored to document decisions made by a group (such as a reserve) or an organisation, or shared so that others facing similar problems can see the logic behind the decision.
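The three steps map naturally onto a simple record that is filled in step by step and then rendered as a report. The Python sketch below is a hypothetical illustration of that structure only; the class and field names are our own and do not reflect the tool’s actual data format or export, and the example content is placeholder text.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class EvidenceItem:
    """One piece of information entered in step 2 (hypothetical structure)."""
    kind: str  # e.g. "scientific literature", "practitioner experience", "costs"
    summary: str
    source: str


@dataclass
class DecisionRecord:
    """Hypothetical record mirroring the tool's three steps."""
    # Step 1: define the decision context
    question: str
    site: str
    # Step 2: gather evidence (a fresh list per record)
    evidence: List[EvidenceItem] = field(default_factory=list)
    # Step 3: make, and justify, the decision
    decision: str = ""
    reasoning: str = ""

    def report(self) -> str:
        """Render the record as plain text that can be stored or shared."""
        lines = [f"Decision question: {self.question}", f"Site: {self.site}",
                 "Evidence considered:"]
        lines += [f"  - [{e.kind}] {e.summary} ({e.source})" for e in self.evidence]
        lines += [f"Decision: {self.decision}", f"Reasoning: {self.reasoning}"]
        return "\n".join(lines)


# Illustrative use with placeholder content only.
record = DecisionRecord(question="Which option should we use to control scrub?",
                        site="Example reserve")
record.evidence.append(EvidenceItem("scientific literature",
                                    "placeholder summary of studies", "placeholder reference"))
record.evidence.append(EvidenceItem("costs", "placeholder cost comparison", "reserve budget"))
record.decision = "placeholder decision"
record.reasoning = "placeholder reasoning linking the evidence to the decision"
print(record.report())
```

Keeping the reasoning as an explicit field, next to the evidence, is what allows a group to revisit later why a decision was made, not just what was decided.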

Experience suggests that completing the tool is useful in bringing together those making the decision. Its creators intended that enabling practitioners to revisit how and why decisions were made would help increase the transparency and quality of decision-making in conservation.

9.11 Evidence-Based Models

Chapter 2 describes how models can be evaluated as sources of evidence and Chapter 8 describes their use in decision making. This section considers how evidence can be embedded into models.

Models are critical sources of evidence and can be highly influential in decision-making. Despite this, they vary greatly in how they use evidence. The outputs of models depend critically on the structure of the model, parameter values, and assumptions used in their formulation. Because of this, transparency about the sources of model structures, parameters, and assumptions, any uncertainties around these, and how uncertainty affects conclusions and decisions is essential.

Models can vary from very general, with conclusions that can be broadly described, to the very specific, where conclusions are highly dependent on model structure and parameter values. General models can be useful in identifying broadly applicable principles. However, context dependence requires more specific models to be constructed, which rely strongly on particular parameter values derived from the available evidence. The transfer of model conclusions from one context to another can be problematic, making it particularly important that the boundaries and applicability of the model be clearly specified.

Models can be evidence-based if they adopt the principles described in this book, in which evidence is assessed and expert judgement is elicited in ways that reduce bias. One model may require numerous sub-models, functions, and parameter values, and some structures and values may be critical to the conclusions drawn from the modelling process. Identifying the structures and parameters that strongly influence the conclusions can greatly facilitate the process by showing where evidence matters most. Indeed, a major advantage of models is the ability to explore plausible scenarios where evidence is lacking or even unobtainable. What, then, is responsible behaviour for creating evidence-based models?

- Determine how models will be used to inform decisions and where in the process models will be used. For example, models may be used to explore the effects of costs and/or benefits of different management actions or be used to contrast the effects of different ecological contexts.

- Explore which model structures, sub-models, functions, and parameter values are critical for decision making. Value of Information analysis and sensitivity analysis can show which assumptions and parameter values are critical for decision making. These analyses can show whether evidence collation has been sufficient and identify critical gaps in the available evidence.

- Assess what evidence is available. Strong a priori evidence for a particular ecological process may reduce the need to explore multiple structures, whereas weak or missing evidence may necessitate a wider exploration of model structures and parameter space.

- Establish principles for extracting data and associated uncertainties to include in models. This is critical (garbage in, garbage out). As described in Chapter 2, collate studies and extract values, using methods such as meta-analysis and also structured expert elicitation, such as the Delphi technique, to consider values.

- Be explicit about models for uncertainty; there can be big differences in outcomes from different probability distributions of a particular parameter (e.g. the difference between uniform and triangular distributions of a parameter value).

- When reporting the results of modelling, describe the effects of relaxing or changing assumptions, values of parameters, and uncertainties.

- Modelling is an imperfect and iterative procedure; be honest about the limits of the conclusions and where improvements need to be made.
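Value of Information analysis and the choice of probability distribution can both be illustrated with a toy decision. The Python sketch below, using entirely hypothetical numbers, estimates by Monte Carlo the expected value of perfect information (EVPI), the expected gain from resolving uncertainty about a parameter before acting, defined as E[max over actions of U] minus max over actions of E[U]. It compares a uniform and a triangular distribution over the same parameter range, using the standard-library `random.Random.uniform` and `random.Random.triangular` methods.

```python
import random

# Toy, entirely hypothetical decision: action A has a net benefit that depends
# on an uncertain effectiveness theta in [0, 1]; action B has a known, fixed
# net benefit. Units are arbitrary.
GAIN_PER_UNIT_THETA = 100.0
COST_A = 40.0
BENEFIT_B = 20.0


def utilities(theta):
    """Net benefit of each action for a given value of theta."""
    return {"A": GAIN_PER_UNIT_THETA * theta - COST_A, "B": BENEFIT_B}


def evpi(sample_theta, n=100_000, seed=1):
    """Monte Carlo estimate of the expected value of perfect information.

    EVPI = E[max_a U(a, theta)] - max_a E[U(a, theta)].
    Returns (preferred action under current uncertainty, its expected
    benefit, estimated EVPI).
    """
    rng = random.Random(seed)
    totals = {"A": 0.0, "B": 0.0}
    total_if_theta_known = 0.0
    for _ in range(n):
        u = utilities(sample_theta(rng))
        for action, value in u.items():
            totals[action] += value
        # With perfect information we would pick the best action for this theta.
        total_if_theta_known += max(u.values())
    means = {action: t / n for action, t in totals.items()}
    best = max(means, key=means.get)
    return best, means[best], total_if_theta_known / n - means[best]


def uniform_prior(rng):
    return rng.uniform(0.0, 1.0)


def triangular_prior(rng):
    # Same range as the uniform prior, but most mass near high effectiveness.
    return rng.triangular(0.0, 1.0, 0.95)  # (low, high, mode)


for name, prior in [("uniform", uniform_prior), ("triangular", triangular_prior)]:
    action, value, info = evpi(prior)
    print(f"{name:>10}: choose {action}, expected benefit {value:.1f}, EVPI {info:.2f}")
```

For this set-up the exact answers can be worked out by hand: under the uniform distribution action B is preferred (expected benefit 20) with an EVPI of 8; under the triangular distribution (mode 0.95) action A is preferred (expected benefit 25) with an EVPI of about 7.6. The parameter range is identical in both cases, yet the preferred action changes, which is why the distribution assumed for a parameter should be stated explicitly and varied when reporting results.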

References

Addison, P.F., Bull, J.W. and Milner‐Gulland, E.J. 2019. Using conservation science to advance corporate biodiversity accountability. Conservation Biology 33: 307–18, https://doi.org/10.1111/cobi.13190.

Barnes, A. and Parkhurst, J. 2014. Can global health policy be depoliticized? A critique of global calls for evidence-based policy. In Handbook of Global Health Policy ed. by G.W. Brown, et al. (Chichester, UK: John Wiley & Sons, Ltd), https://doi.org/10.1002/9781118509623.ch8.

Bass, S., Roe, D., Hou-Jones, X. et al. 2021. Mainstreaming nature in development: A brief guide to political economy analysis for non-specialists, UNEP-WCMC, Cambridge, UK, https://pubs.iied.org/sites/default/files/pdfs/2021-10/20566G_0.pdf.

Brancalion, P.H.S. and Holl, K.D. 2020. Guidance for successful tree planting initiatives. Journal of Applied Ecology 57: 2349–61, https://doi.org/10.1111/1365-2664.13725.

University of Cambridge Institute for Sustainability Leadership (CISL). 2022. Decision-making in a nature positive world: a corporate diagnostic tool to advance organisational understanding of nature-based solutions projects and accelerate their adoption. (Cambridge: University of Cambridge Institute for Sustainability Leadership), https://www.cisl.cam.ac.uk/resources/publications/decision-making-nature-positive-world.

Catalano, A.S., Lyons-White, J., Mills, M.M. et al. 2019. Learning from published project failures in conservation. Biological Conservation 238: 108223, https://doi.org/10.1016/j.biocon.2019.108223.

Christie, A.P., Amano, T., Martin, P.A. et al. 2021. The challenge of biased evidence in conservation. Conservation Biology 35: 249–62, https://doi.org/10.1111/cobi.13577.

Christie, A.P., Downey, H., Frick, W.F., et al. 2022. A practical conservation tool to combine diverse types of evidence for transparent evidence‐based decision‐making. Conservation Science and Practice 4: e579, https://doi.org/10.1111/csp2.579.

Corporation for National and Community Service (CNCS). 2020. Strategic Evidence Plan. Available for download: https://americorps.gov/sites/default/files/documents/CNCS%20Strategic%20Evidence%20Plan%20FY2020_508.pdf.

Cruickshanks, K. 2018. Building biodiversity through land management: An evidence-based assessment of the needs of butterflies and moths and the opportunities for a countryside rich in insects. Unpublished report: Butterfly Conservation Report Number S18–02.

de Silva, G.C., Regan, E.C., Pollard, E.H.B. et al. 2019. The evolution of corporate no net loss and net positive impact biodiversity commitments: understanding appetite and addressing challenges. Business Strategy and the Environment 28: 1481–95, https://doi.org/10.1002/bse.2379.

Dalbeck, L., Hachtel, M. and Campbell-Palmer, R. 2020. A review of the influence of beaver Castor fiber on amphibian assemblages in the floodplains of European temperate streams and rivers. The Herpetological Journal 30: 135–46, https://doi.org/10.33256/hj30.3.135146.

Dicks, L.V., Walsh, J.C. and Sutherland, W.J. 2014. Organising evidence for environmental management decisions: a ‘4S’ hierarchy. Trends in Ecology & Evolution 29: 607–13, https://doi.org/10.1016/j.tree.2014.09.004.

Downey, H., Bretagnolle, V., Brick, C. et al. 2022. Principles for the production of evidence-based guidance for conservation actions. Conservation Science and Practice 4: e12663, https://doi.org/10.1111/csp2.12663.

Dumas, M., La Rosa, M., Mendling, J. et al. 2013. Fundamentals of Business Process Management (Heidelberg: Springer).

Durán, A.P., Green, J.M.H., West, C.D. et al. 2020. A practical approach to measuring the biodiversity impacts of land conversion. Methods in Ecology and Evolution 11: 910–21, https://doi.org/10.1111/2041-210X.13427.

General Services Administration Office of Evaluation Sciences (GSA OES). 2020. Evidence Act Toolkit: A Guide to Developing Your Agency’s Learning Agenda. Available for download: https://oes.gsa.gov/toolkits/.

Government Accountability Office (GAO). 2019. Evidence-based policymaking: selected agencies coordinate activities but could enhance collaboration. (GAO Publication No. 20–119). Washington, D.C.: U.S. Government Printing Office. Available for download: https://www.gao.gov/assets/gao-20-119.pdf.

Gluckman, P.D., Bardsley, A. and Kaiser, M. 2021. Brokerage at the science–policy interface: from conceptual framework to practical guidance. Humanities and Social Sciences Communications 8: 1–10, https://www.nature.com/articles/s41599-021-00756-3.

Henderson, M. 2012. The Geek Manifesto: Why Science Matters (Transworld Digital).

Hemery, G., Petrokofsky, G., Ambrose-Oji, B., et al. 2020. Awareness, action, and aspirations in the forestry sector in responding to environmental change: Report of the British Woodlands Survey 2020. 33pp. www.sylva.org.uk/bws.

Hemming, V., Camaclang, A.E., Adams, M.S., et al. 2022. An introduction to decision science for conservation. Conservation Biology 36: e13868, https://doi.org/10.1111/cobi.13868.

Hillier, J., Walter, C., Malin, D. et al. 2011. A farm-focused calculator for emissions from crop and livestock production. Environmental Modelling & Software 26: 1070–78, https://doi.org/10.1016/j.envsoft.2011.03.014.

Hou-Jones, X., Bass, S. and Roe, D. 2021. Mainstreaming biodiversity into government decision-making. (Cambridge: UNEP-WCMC). Available from: https://pubs.iied.org/20426g.

Hunter, S.B., zu Ermgassen, S.O.S.E., Downey, H. et al. 2021. Evidence shortfalls in the recommendations and guidance underpinning ecological mitigation for infrastructure developments. Ecological Solutions and Evidence 2: e12089, https://doi.org/10.1002/2688-8319.12089.

Hutchings, J.A., 2022. Tensions in the communication of science advice on fish and fisheries: northern cod, species at risk, sustainable seafood. ICES Journal of Marine Science 79: 308–318, https://doi.org/10.1093/icesjms/fsab271.

IUCN. 2022. Conservation Actions Classification Scheme (Version 2.0). https://www.iucnredlist.org/resources/conservation-actions-classification-scheme.

Kayatz, B., Baroni, G., Hillier, J. et al. 2019. Cool Farm Tool Water: A global on-line tool to assess water use in crop production. Journal of Cleaner Production 207: 1163–1179, https://doi.org/10.1016/j.jclepro.2018.09.160.

Keeney, R.L. 2004. Making better decision makers. Decision Analysis 1: 193–204, https://doi.org/10.1287/deca.1040.0009.

MacLeod, C.J., Brandt, A.J., Collins, K. et al. 2021. Giving stakeholders a voice in governance: Biodiversity priorities for New Zealand’s agriculture. People and Nature 4: 330–350, https://doi.org/10.1002/pan3.10285.

MacLeod, C.J., Brandt, A.J. and Dicks, L.V. 2022. Facilitating the wise use of experts and evidence to inform local environmental decisions. People and Nature 4: 904–917, https://doi.org/10.1002/pan3.10328.

McCarthy, D.P., Donald, P.F., Scharlemann, J.P. et al. 2012. Financial costs of meeting global biodiversity conservation targets: Current spending and unmet needs. Science 338: 946–949. https://www.science.org/doi/10.1126/science.1229803.

Miller, D.C. 2014. Explaining global patterns of international aid for linked biodiversity conservation and development. World Development 59: 341–359, https://doi.org/10.1016/j.worlddev.2014.01.004.

Newbold, T., Hudson, L.N., Hill, S.L.L. et al. 2015. Global effects of land use on local terrestrial biodiversity. Nature 520: 45–50, https://www.nature.com/articles/nature14324.

Nightingale, D.S., Fudge, K., and Schupmann, W., 2018. Learning Agendas. Evidence-Based Policymaking Collaborative. Available for download: https://www.urban.org/sites/default/files/publication/97406/evidence_toolkit_learning_agendas_2.pdf.

Office of Management and Budget 2019. Phase 1 Implementation of the Foundations for Evidence-Based Policymaking Act of 2018: Learning Agendas, Personnel, and Planning Guidance. (OMB Memo M-19-23). Available for download: https://www.whitehouse.gov/wp-content/uploads/2019/07/M-19-23.pdf.

Office of Management and Budget 2021. Evidence-Based Policymaking: Learning Agendas and Annual Evaluation Plans. (OMB Memo M-21-27). Available for download: https://www.whitehouse.gov/wp-content/uploads/2021/06/M-21-27.pdf.

O’Brien, D., Hall, J.E., Miró, A. et al. 2021. A co‐development approach to conservation leads to informed habitat design and rapid establishment of amphibian communities. Ecological Solutions and Evidence, 2: e12038, https://doi.org/10.1002/2688-8319.12038.

Parkhurst, J. 2017. The Politics of Evidence: From Evidence-Based Policy to the Good Governance of Evidence (London: Taylor & Francis), https://doi.org/10.4324/9781315675008.

Parks, D., Al-Fulaij, N., Brook, C. et al. 2022. Funding evidence-based conservation. Conservation Biology 36: e13991, https://doi.org/10.1111/cobi.13991.

Pe’er, G., Zinngrebe, Y., Moreira, F., et al. 2019. A greener path for the EU Common Agricultural Policy. Science 365: 449–51, https://doi.org/10.1126/science.aax3146.

Possingham, H.P., Wintle, B.A., Fuller, R.A. et al. 2012. The conservation return on investment from ecological monitoring. In: Biodiversity Monitoring in Australia, ed. by D.B. Lindenmayer and P. Gibbons (CSIRO Publishing).

Possingham, H.P. and Gerber, L.R. 2017. The effect of conservation spending. Nature 551: 309–310, https://doi.org/10.1038/nature24158.

Richards, M., Metzel, R., Chirinda, N. et al. 2016. Limits of agricultural greenhouse gas calculators to predict soil N₂O and CH₄ fluxes in tropical agriculture. Scientific Reports 6: 26279, https://doi.org/10.1038/srep26279.

Rose, D.C. 2014. Five ways to enhance the impact of climate science. Nature Climate Change 4: 522–524, https://doi.org/10.1038/nclimate2270.

Rose, D.C., Mukherjee, N., Simmons, B.I. et al. 2020. Policy windows for the environment: Tips for improving the uptake of scientific knowledge. Environmental Science & Policy 113: 47–54, https://doi.org/10.1016/j.biocon.2021.109126

Salafsky, N., Irvine, R., Boshoven, J. et al. 2022. A practical approach to assessing existing evidence for specific conservation strategies. Conservation Science and Practice 4: e12654. https://doi.org/10.1111/csp2.12654.

Salafsky, N., Boshoven, J., Burivalova, Z. et al. 2019. Defining and using evidence in conservation practice. Conservation Science and Practice 1: e27, https://doi.org/10.1111/csp2.27.

Schipper, A.M., Hilbers, J.P., Meijer, J.R. et al. 2020. Projecting terrestrial biodiversity intactness with GLOBIO 4. Global Change Biology 26: 760–771, https://doi.org/10.1111/gcb.14848.

Stewart, A. 2016. Conservation Capability Maturity Model: a model for assessing organisational performance and identifying potential improvements. Conservation Standards, https://conservationstandards.org/wp-content/uploads/sites/3/2020/10/Conservation-Capability-Maturity-Model.pdf.

Sutherland, W.J., Downey, H., Frick, W.F. et al. 2021. Planning practical evidence-based decision making in conservation within time constraints: the Strategic Evidence Assessment Framework. Journal for Nature Conservation 60: 125975, https://doi.org/10.1016/j.jnc.2021.125975.

Sutherland, W.J. 2013. Review by quality not quantity for better policy. Nature 503: 167, https://www.nature.com/articles/503167a.

Sutherland, W., Spiegelhalter, D. and Burgman, M. 2013. Policy: Twenty tips for interpreting scientific claims. Nature 503: 335–337, https://doi.org/10.1038/503335a.

Sutherland, W.J., Burke, E., Chamberlain, B. et al. 2022. What are the forthcoming legislative issues of interest to ecologists and conservationists in 2022? The Niche, British Ecological Society 53: 28–35.

Tuttle, L.J. and Donahue, M.J. 2022. Effects of sediment exposure on corals: a systematic review of experimental studies. Environmental Evidence 11: 4, https://doi.org/10.1186/s13750-022-00256-0.

Waldron, A., Mooers, A., Miller, D.C. et al. 2013. Targeting global conservation funding to limit immediate global biodiversity declines. Proceedings of the National Academy of Sciences 110: 12144–12148, https://doi.org/10.5061/dryad.p69t1.

Walsh, J.C., Dicks, L.V., Raymond, C.M. et al. 2019. A typology of barriers and enablers of scientific evidence use in conservation practice. Journal of Environmental Management 250: 109481, https://doi.org/10.1016/j.jenvman.2019.109481.

WEF. 2022. Global Risks Report 2022. World Economic Forum, https://www.weforum.org/reports/global-risks-report-2022.

White, T.B., Petrovan, S.O., Bennun, L. et al. 2022a. Principles for using evidence to improve biodiversity impact mitigation by business. OSF Preprints, https://doi.org/10.31219/osf.io/427tc.

White, T.B., Petrovan, S.O., Christie, A.P. et al. 2022b. What is the price of conservation? A review of the status quo and recommendations for improving cost reporting. BioScience 72: biac007, https://doi.org/10.1093/biosci/biac007.

Wilson, K.A., Carwardine, J. and Possingham, H.P. 2009. Setting conservation priorities. Annals of the New York Academy of Sciences 1162: 237–264, https://doi.org/10.1111/j.1749-6632.2009.04149.x.