PART TWO–ANARCHY OF TRANSITION

In every age of well-marked transition there is the pattern of habitual dumb practice and emotion which is passing, and there is the oncoming of a new complex habit. Between the two lies a zone of anarchy.

–Alfred North Whitehead (1861–1947)1

The five chapters of Part One have followed a common pattern: building from historical context and example, and charting change over time, into and through the Information Age and connecting with health care.

Part Two adopts a different viewpoint: that of the impact of the adventure of ideas of Part One on life and medical sciences and health care services, in their connected transitions into and through the Information Age. Its two chapters might arguably comprise and merit books of their own. They tell stories of anarchic transition in which the author has been both eyewitness and participant and are thus integral with the songline that the book traverses.

As expressed by Alfred North Whitehead (1861–1947), radically new ideas and the anarchic transitions they unleash create new contexts and opportunities that become the focus of programmes for reform. Part Three of the book peers ahead, imaginatively, towards a new era where the experiences and learning that feature in Parts One and Two will lead us to understand, create and sustain health care and its services differently.

1 Adventures of Ideas (New York: Macmillan, 1933), p. 14.

6. Life and Information–Co-evolving Sciences

© 2023 David Ingram, CC BY-NC 4.0 https://doi.org/10.11647/OBP.0384.01

This chapter steps away from the practical engineering of Chapter Five into a new dimension, to consider where information itself, as an idea, now connects within life science and medicine. The current era has seen radical transition in scientific understanding of the nature of both information and life. Like particles and waves in quantum theory, perhaps they will come to be seen, in some emergent way, as another example of complementarity. Life as somewhere between material entity and immaterial essence. Information as somewhere between material and measurable entity and immaterial abstraction.

The question ‘What is Life?’, and its connection with the nature of information as a scientific concept, has captivated luminary thinkers, who have informed and challenged one another, and written landmark books on this theme. I have a collection of these, written from physics, life science, mathematics, computer science and cognitive neuroscience perspectives. I look in turn at an eclectic selection, over time. My purpose is to illustrate how these great and imaginative contributors have applied their evolving insights to elucidate connection of their disciplines with ideas about the nature of information and life.

The chapter concludes with a reflection on information policy for health care services in the present era of still extremely rapid transition on all fronts of information technology and life science. There can be no more important global goals than those that seek balance, continuity and governance of the natural environment. In health care, these three also predominate as concerns of our age. They pose challenges that can only be tackled based on shared knowledge and methods that connect coherently and transcend from local to global scale, building on common ground.

What is mind? No matter.

What is matter? Never mind.

–attributed to George Berkeley (1685–1753)1

‘What is’ questions are not new! What is reality? What is life? What is information? These, too, perplex! Erwin Schrödinger (1887–1961) opened a new window and provided insight into (or should that be outlook onto?) the first of these questions, in the theoretical physics of the quantum era. The Schrödinger equation started wheels turning and gained experimental traction. In later years, he peered into the misty future in raising the second question and made some suggestions, too, about the Berkeley question, which he considered even harder. ‘Leave it to the computer’, as an answer to the third question, does not really equate to ‘leave it to the Schrödinger equation’, in answer to the first! And resolving to ‘never mind’ about it may turn out to matter a lot more in the computer age!

I used to sit eating with physics student colleagues, tired after a day battling problems connecting with quantum theory and the ‘What is reality?’ question. Nearby, a group of lawyer colleagues were often in more lively debate over their ‘What is law?’ question. I do not recall discussion among the mathematicians about ‘What is mathematics?’ No doubt, they were resting after their days deeply immersed in theory of number, topology and symmetry; like us aspirant physicists, numbed by our mental struggles with vector calculus, tensor algebra and analytical solutions of differential equations!

The question ‘What is life?’ became a preoccupation of mathematics, physics and chemistry, as they cross-fertilized with one another and spread their interests and influence further around the circle of knowledge, to biology and medicine. And now filtering to the top of the pile of ‘what is’ questions in both physical and life science is the question ‘What is information?’ Theory of information has evolved in multiple contexts of mathematics, science and engineering over the past one hundred and fifty years. Some believe it holds the key to clarity about other ‘What is…’ questions in science. It may have significant impact on what comes next in the evolution of life science and health care. Peering into the mists for an Occam’s razor moment, perhaps an answer could only ever emerge alongside the untangling of the first great unknown: ‘What is reality?’ Perhaps the Hitchhiker’s Guide answer, ‘forty-two’, will prove the best we can do!2 Now that we know more about information and what we can make and do, by way of data and knowledge, do we know more about life? Zobaczymy [we will see]!3

What is matter? Maybe it’s information

What is information? Maybe it’s matter

How does information matter in life?

Does any of this connect with health care?

As we have seen throughout Part One, the Information Age has been one of disruptive transition in science, technology and society. The anarchy has played out into health care services. Uncertain and experimental, new and evolving insight into the science of life has coupled with the equally new and evolving science and technology of information systems, which have themselves underpinned this scientific revolution. It looks rather like an engineering control system (with information feeding forward in some way into science, and science feeding back in some way into information), and such systems can be unstable. This scene in health care, and its context, look to have some features in common with what happened to the world’s monetary system in 2008, a thought that I explore further in Chapter Eight.

In such times, we must be cautious about digging too rapidly or deeply into ‘What is?’ conundrums. They can be tiring, costly and a bit beside the point. As the quotation leading into Part Three emphasizes, sustainable progress comes iteratively and incrementally, with the need for careful testing at each stage. The Information Age of science and technology can readily dig bottomless holes and endless tunnels of discourse, excavating more and more data, ever faster. There are swallow holes lurking when we dig deeply into ‘What is?’ questions–it is easy to fall in and get stuck underground. Swallow holes are called solution features in the technical jargon because they form where water dissolves the underlying chalk, and the earth above falls in. In some places, chalk is what supports our houses and swallow holes can undermine these foundations, when our intent in digging is to underpin and make them better. There was once a very small one halfway down our garden, which is maybe why that analogy came to my mind!

As we survey our current era of anarchic transitions in science, technology and society, we need to shore up their necessary foundations that have become exposed and weakened. How we do this, matters. We need to focus more on the practicalities of how, and less on what we’re trying to achieve and why. Health care at the front line is clearly operating on expensive and shaking foundations at present. Part Three proposes one approach to the ‘how’ question of health care information policy, about how to make and do things better.

Finding good answers will matter for health care. We must neither fall under the spell of Siren voices of technological utopia nor fail to find a safe course through the gap between beckoning rocks, which health care must navigate, seeking a way onto a stronger and more resilient future home base. We’re not like Odysseus; his story is mythical, although the seascape he tells of maybe not. Our encounter with an anarchic seascape of health care information is all real. The difference is that we create the sea and can navigate it better.

The range of ideas embraced in postulated answers to the ‘What is information?’ and ‘What is life?’ questions is considerable and continuously evolving. Here, I can only seek to outline and connect their history and scope. We can look at life in evolutionary, historical and scientific contexts. We can look at information in the context of physical science and engineering, and how it has interfaced with life science and health care. This is the scope I will now venture to outline. It is a challenge better tuned to my physics brain of yesteryear and I set it out, here, only to encourage more flexible and knowledgeable modern brains to reflect on it, pick it apart and improve it.

Life in Evolutionary Context

Our understanding of life may in time arise from way beyond our tiny Earth. The search for extra-terrestrial life is fascinating astrobiology of our time, highlighted by the report, last week as I write, of the possible discovery of phosphine in the Venusian atmosphere, as a potential marker of microbial life there. Accounts of the experiments planned for the automated Mars lander when it arrives on Mars in a few years’ time also stir speculation. It will drill to collect samples and use Raman spectroscopy to characterize their minerals, looking for imprints of carbon-based materials that might also prove indicative of life.4 As I first revised this chapter on 18 December 2021, the National Geographic had an excited article about a pre-publication announcement of a strong candidate SETI intelligent signal from space, emanating from Proxima Centauri, our nearest stellar neighbour.5 In more recent months, the James Webb telescope has been successfully launched and positioned, starting to focus such investigation much further away. I will focus, here, on life on Earth and the connections made from mathematics and science to information and life.

Stephen Jay Gould (1941–2002) coined the term punctuated equilibrium to describe periods of quiescence and slow change, and periods of rapid and disruptive change in the natural world. Over such eons, humankind has played almost no part in evolution. We are the tiniest of dots on this landscape. The Information Age is punctuated evolution of a new kind, in which humans are, or should be, in control.

Earth as a planet has a 4.6-billion-year history within the solar system and the earliest life forms may have originated between 3.77 and 4.5 Ga (billion years) ago, 100 million years or so after the first appearance of liquid water. These dates are continuously under review and are recalibrated as new evidence emerges and gains sway. With increasing complexity and diversity, life evolved and emerged from the sea onto land and into the sky. By the time of the Carboniferous period, 363 Ma (million years) ago, the earth began to look a bit like the earth today. Major extinction events 251.4 Ma and 66 Ma ago destroyed and rebooted life, eliminating ninety to ninety-five percent of marine species in the first event and half of animal species in the second event. Life flourished again and genus Homo appears 2 Ma ago in the fossil record. Anatomically modern humans appeared in Africa around 250 Ka (thousand years) ago, colonizing the other continents, replacing Neanderthals in Europe and other hominins in Asia.

Landscape can usually be relied on to evolve very slowly. Tectonic plates drift and collide, sometimes grumblingly in tremors and localized earthquakes, sometimes creating pressure valves released in volcanoes that spread their effects more widely around the planet. Humankind can do little if anything to influence geodynamic punctuations of our planetary history, save to seek out and build in safer places and employ robust and resilient construction methods and defences. The information landscape is now, and increasingly, intertwined with the physical environment, and shaping the living world and human experience. Its construction methods and defences are often proving inadequate for combatting the attrition it has engendered.

Major punctuations of evolution arise from beyond the earth as it is buffeted from elsewhere in the solar system. Meteors arrive in different sizes, as daily events. Much larger asteroid strikes, as at Chicxulub in Mexico, associated with the second major extinction of life 66 Ma ago, burned and scarred the earth for thousands of miles around and precipitated climate change that impacted life everywhere, immediately and over very long periods. This one’s size has been estimated in tens of kilometres and the energy it dissipated in tens of yottajoules–physical estimates of events on earth do not yet have units much larger! Sunspots, arising in the chaotic surface dynamics of the star, flare radiation that disrupts information systems on earth, some eight minutes later.

We learn about such events in history from the science of our era. This learning shapes and illuminates our understanding of Earth, and life on Earth. We can now predict and assess some such external risks, but, like Nassim Taleb’s black swans,6 they also arrive unannounced and unpredicted. We hope that we will be spared from them, and mostly do not think about them, or adopt self-comforting denial. We are consumed with surviving present storms and our perspectives and decisions are biased by recency.

Life in Historical and Scientific Context

The nature of life is of enduring interest to conscious minds. Ancient chemists sought an elixir of life. Mystics and worshippers found meaning in patterns of the world they inhabited, and ascribed hardships and extreme events to the will of all-powerful gods. Living and dying were understood and ritualized in relationship with unseen creators and takers of life.

Greek philosophers found beauty and symbolic order in the material world and living creatures, expressing this in their writing and through the arts. Other cultures decried this as idolatrous imagery. Mechanisms of living organisms and their dysfunctions provided rich material for these pursuits and preoccupations. Birth and death, the transition into and out of life, now occupy centre stage–illness and disease likewise. The extant writings attributed to Hippocrates (c. 460 BCE–375 BCE)7 and those of Galen (c. 130 CE–210 CE),8 whose ancestral home was in Aesculapian (Aesculapius, the Roman god of medicine), tell the story of the stirrings of medical discipline and practice, as symbolized in the classical iconography of the caduceus–a sword or staff with twin venomous serpents entwined around it.

Skipping forward many centuries, the Italian Renaissance imagery of Leonardo da Vinci (1452–1519) captured human anatomy in artistic detail and his polymath range of interests made connections with mathematics, engineering, botany and astronomy. Jumping again, to the era of William Harvey (1578–1657), the inner function of the body was explored, and the circulatory system of blood discovered, as described in his book de Motu Cordis (1628). Medical practice slowly evolved from the mystical and pragmatic–leeching of blood and herbal medicaments and preoccupation with extracting vapours, seen as poisons–to an experimentally balanced science. The surgical profession started with the Barber-Surgeons in the mid-sixteenth century, as a trade guild and livery company of the City of London. As invasiveness of interventions increased, they battled infection and outcomes were poor. Surgery separated into its own domain and acquired its Royal Charter in the mid-nineteenth century.

Identification of patterns of disease and their classifications by pathologists such as Thomas Hodgkin (1798–1866), dissecting the bodies of deceased patients, opened insight into a new world of ordered and disordered bodies, and the time course of their life, from birth to death. This branch of science was predicated on advances in optical devices for magnifying minute detail. Experienced clinical observation remained central to professionalism.

Health and disease were increasingly of wider interest in society. Adverse outcomes as well as beneficial ones attracted attention and were related to good and bad standards of the professions as well as good and bad prognoses of patients. Reputation and remuneration were at stake. The legal profession became more active on the scene.

As with any innovation impacting on established skills and practice, there was kickback. Stethoscopes were an unproven irrelevance–the physician’s hand and a chronometer were all that was needed–thus ruled the rulers of the times. Limitations in what can be done to support patients and improve their clinical situation may render additional measurement irrelevant. But, if new measurement can potentially cast new light on a clinical situation, or open new opportunities for treating it effectively, there is a case for experiment. In striking a balance in what was done to and for a patient, with many interests in play, the patient’s interest–only slowly well-represented–became of greater concern. How people concerned about loss of livelihood or influence may see that balance, may differ significantly from how the eager innovator or the patient may see it.

Evolving science and technology ushered in a new era of measurement devices. Physicists became increasingly interested and involved in medicine, heralding new methods of investigation and treatment of disease. X-ray imaging devices, recording penetrating radiation on photographic emulsions, allowed visualization of internal organs. New devices, such as for radiation therapy, required significant engineering expertise to design and operate them safely. Medical and surgical procedures increased in ambition, alongside widening knowledge of pharmacology and pharmaceutics. Other devices of potential relevance to medical practice continued to appear. An increasing range of national bodies extended the regulation of medical practice.

An innovative thrust came from a different direction in the first half of the twentieth century, again, in part, from the cross-fertilization of physics and mathematics with physiology. At the turn of the century, the electrophysiology of the integrated nervous system was the experimental domain of Charles Sherrington (1857–1952). With Edgar Adrian (1889–1977), he was awarded the Nobel Prize in 1932 for their work at the University of Cambridge on the function of neurons. At the start of my songline, another illustrious Hodgkin, Alan Hodgkin (1914–98), and Andrew Huxley (1917–2012), were Nobel Prize winners in 1963 for their work at the University of Cambridge and the Marine Biological Laboratory in Plymouth, which included the development of the original mathematical model of the propagation of nerve action potential. And in the 1970s, towards the end of his career, the zoologist and neurophysiologist John Zachary Young (1907–97), at University College London (UCL), updated Sherrington’s concept of an integrative nervous system in his 1975–77 Gifford Lectures on the Programs of the Brain, an inukbook that I discuss below. I shared his academic affiliation with Magdalen College at the University of Oxford and UCL, although my tiny and invisible village school was very different from the visible majesty of his Marlborough College, which I know from friends was also a wonderful academic environment to experience.

The scientific understanding of living organisms has advanced beyond recognition in the past seventy years. Foremost among the discoveries that opened a new window onto the unknown, in the memorable phrase of the physicist Max Born (1882–1970), was that of the double helix structure of DNA, by Francis Crick (1916–2004) and James Watson in 1953. Max Born’s son, Gustav Born (1921–2018), worked alongside the pharmacologist John Vane (1927–2004), in my years at St Bartholomew’s Hospital (Bart’s). Advances in laboratory science and technology and insights gained from the tracking of genetic mutations through successive generations of reproduction of living organisms were developed and brought to fruition by an outstanding generation of scientists, many of them Nobel Prize winners, combining with the emerging capabilities and analytical methods of information technology and computer science. Together, these paved and led the way to astonishingly rapid advances in the sequencing of genomes and mapping of their component structures and functions.

Over much the same period, parallel insights from mathematics, physics, computer science and engineering, linking with biology and cognitive neuroscience, have shone new light on ideas about the nature of living systems and the human brain, framed within concepts of information networks. The studies of information networks–computer networks, gene networks, health information networks, social networks–have proliferated and cross-fertilized.

These multidisciplinary efforts have brought increasing focus on the nature of information itself. We are in a scientific era that seeks understanding of complex systems by building models that draw on many domains of knowledge: the mathematics of symmetry, topology and calculus; the physics of order, information and energy; computer science and formal logic; and the engineering of control systems. It is marrying these with chemistry and life science, from the level of atoms, molecules and cells to organs, bodies, populations and ecosystems. Information in this holistic perspective is conceived as fundamental and quantifiable, and even perhaps a physical property of matter.

Underlying such quests for unification, nature seems to place some restrictions–energy is conserved, it is impossible to travel faster than light, the universe proceeds towards states of increasing disorder. Physics looks for theory consistent with such appearances and constraints–if there is breakage, the experiment is flawed, or it is the theory that is broken. Fragmented ideas about information, from many domains, are distilling and evolving towards a coherent core of information theory and methods. They have a two-hundred-year songline dating from the time of the early steam engines–nice to have a train of thought connecting steam with information!

In whatever way these ideas may ultimately connect within the practical domain of health informatics, there is urgency to progress ideas on multiple fronts to improve understanding. We need good and enabled teams and environments in which to draw them together. Downplaying the complexity and challenges involved in unifying health informatics, and opting for single and fragmented communities, cannot work. Left to academia, health informatics becomes distracted into more and more disengaged words and airmiles. Left to governments and NGOs, it becomes corralled into political power struggles. Left to consultants and industry, it engenders a wasteful gold rush. Left to managers and regulators, it becomes disconnected and unimplementable. Left to clinicians and technologists, it has remained intractable.

To date, an Institute of Life Science and Health Care Informatics with this broad scope would likely be seen as both unworldly and unacademic. It would be worth a try! How we tackle what we do not know about (but must act on, learn about and implement) boils down to good ideas, tractable goals, capable teams and richly endowed and protected environments fostering creativity, experiment and learning. Multiprofessional and interdisciplinary teamwork and good environments are crucial. I seek to draw these thoughts together and show them in action in Part Three of the book.

Information in Context of Physical, Engineering and Life Sciences

Bertrand Russell (1872–1970) thought of mathematics and logic as one and the same–the basis of clear, precise and consistent thought and reasoning about the world. The answer to the ‘What is mathematics?’ question seems to encompass whatever you need in your armoury, to support you in achieving that lofty goal–as fully as you can, but not with a sense of completeness or perfection. Mathematics is what mathematicians decide to do, as it were–quite an attractive perspective for the academic mind, and important for the rest of us to enable them to get on with it, unhindered! New problems encountered may require new ideas and methods of mathematics. In this way of thinking, mathematics is akin to a model of logical reasoning (not a model of the way the human brain works, although neuromorphic computation seems on the up again, now). Its corpus of ideas and methods is its discipline, positioned close by to philosophy around the circle of knowledge.

Early science rattled the cages of religion and philosophy and was allowed out under the guise of the label ‘natural philosophy’. It entails many ‘What is?’ questions that still baffle. It evolves models and methods–ways of describing and reasoning–seeking to understand better, as times change, and the world moves on. The ‘What is reality?’ question may be destined never to be resolved, but theoretical physics continues to posit ideas and keep trying.

Physics poses other ‘What is?’ questions–what is gravity, quantum entanglement, dark matter, dark energy, entropy, time? Armed with the ideas and methods of mathematics to help keep it on track, it ventures to describe and tame the observed physical world, in the shape of theory and experiment that embrace manifolds of space and time, fields, forces, energies, elementary particles, nuclei, atoms, molecules and their ensembles, and now of information. Unanswered or partially answered questions spur new endeavours.

New mathematics and science are discovered, to model and simulate patterns and behaviours encountered, and bring rigour to their analysis. As Karl Popper (1902–94) is reported to have said, the essence of modelling is to discover what can safely be left out. Faced with increasing complexity, how can a problem be reframed, drawing on new ideas and methods, to achieve a goal of simplifying perhaps hitherto intractable descriptions, and enhance understanding and ability to reason consistently?

William of Ockham’s (c. 1285–1347) Razor points to virtue in simplicity in this process. In the physical world, there is poetic simplicity and profound science in hydrogen–the simplest element, just one proton and one electron, and the origin of all the other elements in the evolving universe. Its name means maker of water, itself a quite simple molecule–an assembly of two hydrogen and one oxygen atoms. The polarized charge distribution of the water molecule gives rise to its complex physical behaviours–solid ice expands from and floats on liquid water, and aqueous solutions exhibit complex behaviours which play out throughout the chemistry and complexity of life.

Early in my postgraduate career, I read beyond classical and quantum physics into the connections from mathematics into computer science and electrical engineering. A pivotal stage was the mathematician Claude Shannon’s (1916–2001) characterization of the information content of electrical signals and their digital communication through transmitted messages. John von Neumann (1903–57) advised him of the parallel with Ludwig Boltzmann’s (1844–1906) and James Clerk Maxwell’s (1831–79) earlier ground-breaking connection of theory of order and disorder of physical systems with the concept of entropy, which unfolded in the field of statistical mechanics and thermodynamics. Thus arose the term information entropy, and ideas connecting order, information and life, as I further describe, below. This has evolved into a complex chain of ideas, probing at the limits of what we know and can know, with new ideas extending into life science, medical science and health care. The early history is a great example of the pioneering connections that von Neumann made, between mathematics, science and engineering, leading into the Information Age. His early death from cancer was a great loss. The book of his 1956 Silliman Lectures on The Computer and the Brain, which he worked on as he came close to death, is one of the landmark contributions I introduce in this chapter.

I read further through the connections from mathematics and physics into theory of complexity, and emergent properties of physical systems in states of thermodynamic disequilibrium and irreversible change. Ilya Prigogine (1917–2003) and René Thom (1923–2002) are remembered storytellers. The Belousov–Zhabotinsky reaction (Boris Belousov (1893–1970) and Anatol Zhabotinsky (1938–2008)) was a captivating chemical example of non-equilibrium thermodynamics appearing as an oscillating perpetuum mobile. A hypothesis of the times was of life as an emergent property of dynamical systems far from equilibrium. And other conjectures arose.

Around the same time that Shannon alighted on his concept of information entropy, there was increasing cross-over into study of the living world, where the ‘What is life?’ question was in search of an answer. Human life plays out from the physics and chemistry of energy and membrane into the biology of organelles and cells, and into organs and organ systems, bodies, families, populations and species. The quest is for increasing precision and traction in ways of describing and reasoning about living systems. It may require new science and new mathematics. It is a field in which mathematics bridges into informatics, and physical science into biology and medicine, around the circle of knowledge. Informatics in this broad context might be characterized as a science of information that spans from mathematics, through natural science to engineering science.

‘What is informatics?’ is a question that I was teased about in my early medical school academic post. I decided to stick it out and not retreat to a safe distance from this sometimes indulgent, sometimes slightly menacing mockery, sheltered in the mathematics or science establishments. I wanted to find out what informatics is, by working inside the world of life science and medicine, engaging as broadly as possible with problems I came across there. I was given carte blanche to live out an experimental enactment of ‘informatics is what informaticians do’, in the way that the physicist John Archibald Wheeler (1911–2008) was content with mathematics being what mathematicians do.

As mentioned above, early perspectives about the scientific nature of information connected with the study of the behaviour of physical ensembles (groupings) and systems of things that interact with one another. This evolved from the study of the properties of gases and connected the experimental study of thermodynamics, as classically expressed in the laws governing their physical behaviour (measurements of pressures, volumes, temperatures and so on) with theory of statistical mechanics, which modelled the behaviour of the gas in terms of ensembles of gaseous molecules.

A key concept of classical thermodynamics, relating to the capacity of a heated gas in a steam engine to expand and thereby be organized to perform useful work, was entropy. It was quantified in terms of properties that could be measured, but no one knew the answer to the ‘What is entropy?’ question, and conjectures about its connection with other ‘What is?’ questions persist today. As with quantum theory battling the ‘What is reality?’ question today, there was nonetheless theory and method that enabled the classical thermodynamic system to be modelled mathematically and used to simulate and predict its observed behaviours, making use of this concept and calculating its changing value. In his kinetic theory of gases, Boltzmann’s crowning achievement in 1877 was to connect the entropy of the gas, seen as a macrosystem state in classical thermodynamics, with theory of statistical mechanics and the number of equiprobable microsystem states of the component gas molecules in which the system could exist. This number was a measure of the order exhibited by the description of the microsystem: if highly ordered, only a few descriptive states are possible; if highly disordered, very many. Entropy emerged as a measure of disorder–increasing entropy being in a negative logarithmic relationship to the Boltzmann quantification of order. James Clerk Maxwell and Max Planck (1858–1947) shared in the later mathematical formulation of these ideas.
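
For readers who like to see the relationship written down, the Boltzmann connection can be sketched in its usual textbook form. The symbols are assumptions introduced here for illustration and do not appear in the text above: S is the entropy of the macrostate, W the number of equiprobable microstates consistent with it, and k_B the Boltzmann constant. Taking 1/W, the probability of any single microstate, as a rough stand-in for 'order' is likewise only one illustrative reading of the negative logarithmic relationship.

```latex
S = k_B \ln W
\qquad\Longleftrightarrow\qquad
S = -\,k_B \ln\!\left(\frac{1}{W}\right)
```

A highly ordered system, with few available microstates, has small W and low entropy; a highly disordered one has very large W and high entropy.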

Many decades later, as presaged above, a further connection was made between concepts of order and information, in the context of communication of electrical signals. This contribution was made by Shannon, who arrived on the scene as the Third Industrial Revolution of electronics and communication devices came into view. He thought about electrical signals and their faithful transmission within telecommunications systems. Electronics was opening into the new world of digitization and communication of signals. In 1948, he published his seminal paper entitled ‘A Mathematical Theory of Communication’.9 For Shannon, the thing communicated by the signal (its content) was information. Thinking about how to quantify this information, he alighted on a logarithmic transform of binary numbers that looked useful. Shannon’s fellow mathematician, von Neumann, knew the physics history and was deeply engaged with the emerging fields of computer science and engineering–and thinking about how brains worked, as well! Unbeknown to Shannon, but pointed out to him by von Neumann, his quantification of the information content of a communication was a mirror of that discovered by Boltzmann for entropy. ‘What is entropy?’ and ‘What is information?’ started to share common foundations. Information content became an entropy.10 Shannon took von Neumann’s advice and called his construct information entropy. This idea started to permeate into methods of statistical data analysis.
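Shannon's measure is usually written H = -Σ p log₂ p, in bits, where p ranges over the probabilities of the possible symbols. The sketch below is a minimal illustration of that standard formula; the helper names are hypothetical, and estimating probabilities from character frequencies in a short string is a simplifying assumption of the sketch, not Shannon's own procedure.

```python
import math
from collections import Counter


def shannon_entropy(probabilities) -> float:
    """H = -sum(p * log2(p)) in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def message_entropy(message: str) -> float:
    """Entropy per symbol, with probabilities estimated from character frequencies."""
    counts = Counter(message)
    total = len(message)
    return shannon_entropy(count / total for count in counts.values())


# A fair coin carries one bit per toss; a certain outcome carries none.
print(shannon_entropy([0.5, 0.5]))
print(shannon_entropy([1.0]))
print(message_entropy("abab"))
```

The form mirrors Boltzmann's: when all W outcomes are equally likely, each p is 1/W and H reduces to log₂ W.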

The burgeoning electronic and information technology worlds extended into characterizing and analyzing the behaviour of ever more complex electrical circuits and communications networks, and then, in recent decades, into the study of quantum computation and quantum circuits. Physics and informatics ‘What is?’ questions became further entrained. The enmeshing of theoretical and experimental quantum physics with theory of information brought imaginative new conjectures about these connections, and extraordinarily precise new methods of experimental measurement arrived to test these ideas. Perhaps theory of information and entropy will emerge further as a unifying conceptual framework linking from thermodynamics and its second law, through to the nature of time, and other ‘what is’ unknowns of physics and universal physical law. Where will theory of information come to sit in relation to the basic measures of length, time, amount of substance, electric current, temperature, luminous intensity and mass? Is information an abstract concept or is it real? Is it an energy? Is it the same as entropy? And how may new discoveries about life and living systems reflect into new physics of the organization of complex systems? Zobaczymy!

Wheeler is remembered for his words of wisdom about the ‘What is’ of reality and information, which he characterized as ‘It from Bit’.11 He described his career in three stages–‘Everything is Particles’, ‘Everything is Fields’, and ‘Everything is Information’. In summarizing this perspective, he wrote:

It from bit symbolises the idea that every item of the physical world has at bottom–at a very deep bottom, in most instances–an immaterial source and explanation; that what we call reality arises in the last analysis from the posing of yes-no questions and the registering of equipment-evoked responses; in short, that all things physical are information-theoretic in origin and this is a participatory universe.12

Biology has connected ever more closely with the physical sciences, mathematics and computer science. Mathematical and computational biology have extended into the modelling of networks of genes, and biochemical pathways were reimagined as information circuits, by analogy with circuits in electronic engineering. Conjecture has extended to the connection of quantum level processes with biological mechanisms and from the emergent properties of complex systems to the evolution of living systems. Informatics has extended into the study of all manner of networks of communication–physical, biological and social.

At the start of my life and songline, Schrödinger was grappling with the question ‘What is Life?’ With amazing prescience, he reasoned on the grounds of physics and genetics of the time to envisage an information code of life, embodied in the chromatin and chromosomes of the cell nucleus. DNA was at that time revealing itself through the crystallographers’ images of X-ray diffraction patterns. And von Neumann, who, as we have seen in Chapter Five, conceived the simple model for the architecture of electronic computational machines that bears his name, was grappling with the analogy between the computer and the brain. The double helix of DNA was described and characterized as information–as both Turing machine paper tape and von Neumann universal constructor, embodying also the self-referencing ability to reproduce itself. Here, the analogy made is that DNA is, in a sense, all three of knowledge, program and data. It is knowledge that enables growth, maintenance and reproduction of the living world. It is program code that bootstraps those abilities, functions and actions. It is data on which those programs operate.

Skipping along the timeline to the 2020s, the language of life science is now the language of biomathematics, biophysics, biochemistry and bioinformatics. Electron transfer and energy gradients across membranes are minutely described as the flux and driving force of life. Quantum chemistry and bioinformatics have transformed pharmacology. And the nature of information, at the heart of all this, is a much-debated issue. The mathematics of symmetry and topology has advanced within particle physics and field theory. It has illuminated general principles and how simple rules and constraints can determine the envelope through which complex living systems emerge and evolve.

As well as ‘What is?’ questions there are also ‘Why are things the way they are?’ questions that relate to life and information. This pairing of questions is illustrated in the progression in physics from the ‘What is reality?’ question to the question ‘Why is reality the way it is?’ as a mathematical and scientific, as well as a philosophical, question. The laws that appear to govern the physical world seem finely tuned to basic constants, such that, were they even slightly different, the best current models we have would break apart, predicting a destructive physical Armageddon that would have prevented anything of the observed universe from ever happening. Are there scientific principles that underpin the observed reality revealed by these constants, and are other realities possible and do they exist?

My inukbook by Nick Lane, described in the section below on landmark contributions, argues that ‘Why is life the way it is?’ is as important a question as ‘What is life?’ and suggests how we should balance the two. Does biology need to look further than current physics–are more abstract models of information needed, bearing in mind the advice only to keep what is needed in the models we create? These questions cross into the realm of metaphysics and belief. Mathematics and science will always push on the boundaries of how fully and accurately they can describe the pattern of observed living systems, building on these insights and methods. In 2000, John Maddox (1925–2009) summarized What Remains to Be Discovered, and in 2019, Marcus du Sautoy summarized how such ambition eventually runs into the sands of What We Cannot Know.13 Others have speculated about how all this may connect over time with health care. I introduce inukbooks expressing these ideas in the section below on landmark contributions.

New Frontiers of Information

Just before I started studying physics at Oxford, Rolf Landauer (1927–99) showed something quite unexpected, I think: that when we destroy information, we increase physical entropy. Information sounds abstract, but maybe it is real. That was 1961 and this insight registered nowhere within the information feeding to my heated brain, battling theoretical physics at that time. What was taking shape was the revisiting of a conundrum first explored by the Scottish physicist James Clerk Maxwell, who looked at Clausius’s entropy law and wondered whether intelligent life could defeat it. He envisioned this intelligent entity in the shape of a demon that could sit in the middle of a gas chamber and route gas molecules so as to sort them into a lower entropy order, thus defying the second law of thermodynamics. Could life overcome thermodynamic law in this sort of way? A hundred years later, Charles Bennett showed that it follows from Landauer’s insight, connecting information destruction with entropy production, that no intelligent entity can defeat the second law.
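Landauer's result is usually stated as a lower bound: erasing one bit of information in surroundings at absolute temperature T dissipates at least k T ln 2 of heat, raising the entropy of those surroundings by at least k ln 2. The sketch below simply evaluates that bound; the function names and the 300 K room temperature are illustrative assumptions, not figures taken from the text.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def landauer_limit_joules(temperature_kelvin: float, bits: float = 1.0) -> float:
    """Minimum heat dissipated when erasing `bits` of information at the given temperature."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return bits * K_B * temperature_kelvin * math.log(2)


def entropy_increase(bits: float = 1.0) -> float:
    """Minimum entropy increase of the surroundings, in J/K, for erasing `bits`."""
    return bits * K_B * math.log(2)


# One bit, and one gigabyte (8e9 bits), erased at an assumed room temperature of 300 K.
print(landauer_limit_joules(300.0))
print(landauer_limit_joules(300.0, bits=8e9))
```

The bound is tiny on everyday scales, which helps explain why the physical cost of forgetting went unnoticed for so long; real hardware dissipates many orders of magnitude more than this minimum.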

We have seen how entropy is related to increasing disorder. Since order and disorder are inversely related, the mathematics of logarithms enables us to relate order to negative entropy, termed negentropy. If living systems cannot buck physics, how do they acquire and sustain their order from their environment, thus compensating for the entropy they produce with the negentropy they acquire? It is apparent that they do succeed in ‘cheating’ this fundamental law, at least for a while. From the fertilized egg to the developing embryo and the growing and living body, animal life maintains its low entropy order and cohesion, and disorder of bodily function is in the realm of error rather than natural and progressive growth of entropy. Is there some unknown physics that can reconcile the observation of increasing disorder in physical systems with the observation of sustained order in living systems? What is the life that makes a system alive, and how can it be characterized and described within experimentally verified and consistent theory?

This was the conundrum that Schrödinger addressed, and his reasoning was set out nearly eighty years ago, in the landmark inukbook discussed in the section below, in which he described a living system as feeding on negative entropy from its surroundings. Today, the argument, with which Schrödinger himself agreed, would be phrased in terms of free energy exchange, and this aligns more clearly with the now better understood biophysics of electron transfer and electrochemical energy gradients, characterizing the bioenergetics and biochemistry of life science.

In this evolving story, the study of information has permeated from mathematics, physics and engineering, through life science and into the bioinformatics of living systems, as a unifying concept of science. It is now debated in the realm of neuroscience and is moving into medical science and towards health care. What is unknown, at this stage of the story, is how far this science will connect with the concept of information as a utility in everyday life, and specifically in support of health care. In this direction, the discussion of the nature of life moves up further levels in the brain, to the concept of mind–to consciousness and artificial intelligence. Here the contemporary interplay of neuroscience and computer science is very much alive.

As we drill down like this on information, we must keep in mind its dark side; it is not an assured good. It is sometimes harmful, and sometimes better not to know. Economy based on energy creates utility of food, shelter and safety. It consumes oil to generate power and that consumption pollutes the environment–and oil runs out. There are no free meals and no free wheels. Francis Bacon (1561–1626) said that knowledge itself was a kind of power, and David Deutsch’s characterization of knowledge as information with causal power conveys much the same idea. Information is closely coupled with energy, health and economy, and articulation and appreciation of these links is rapidly unfolding. Economy based on information systems consumes electrical power and thus pollutes. The Cloud is now said to be consuming some twenty percent of the energy distributed through the world electricity grid. Bitcoin mining currently consumes electrical power equivalent to the entire economy of Argentina, and ocean and ice-buried data centres are warming the planet. Information technology consumes rare earths and these, too, run out. Data-ism creates noise and bias in actions based on information, and thus interacts with the power of knowledge.

From Life and Information to Mind and Intelligence

It is at the level where multidisciplinary science extends into matters of mind that the model and analogy of life as an information engine merges with matters of philosophy. Along my songline, this connected with Gilbert Ryle (1900–76) at Magdalen College, and his philosophy of mind. Another luminary figure encountered was Willard Van Orman Quine (1908–2000), whose perspective has been described as ‘naturalistic, empiricist, and behaviourist’.14

There is much drawing and defending of red lines. One common dividing line is that consciously felt sensory experience is the hard problem to understand, and unrelated to the mathematics of information flow, which has little to say about the deep problems of neuroscience and cognitive psychology. This perspective has been championed today by the neuropsychologist Nicholas Humphrey. Accusations of egregious error talk past one another in these circles, as they do in all manner of deep discussions of the many ‘What is?’ and ‘Why is?’ questions that remain unplaced around the circle of knowledge. There is now either a great deal more or very little left to be said on the topic of ‘What is mind?’–a topic that Berkeley gave up on in the quotation that headed this chapter! Zobaczymy!

Rather than trespassing foolishly onto these enduringly shifting philosophical sands, risking being swallowed there, it seems relevant to start from the perspective of an engineer. Placed at the interface of neuroscience, computer science and cognitive psychology, and with a keen eye on the health care needs of society, what can be built there using the insights and methods from these disciplines to improve and contribute usefully to health care? It seems much clearer, now that artificial intelligence is advancing in such leaps and bounds, that there is a lot that can be done in this spirit–the challenge is to keep faith, and in balance with both the science and the values in play. As ever, how this balance is approached will be crucial to success and sustainability. As we have begun to see, and will see more, these are potentially very harmful and costly places in which to get things wrong.

The interplay of computer science, neuroscience and cognitive psychology presents a Popperian Open Society of the mind. An unbounded set of possibilities. A place for humble learning. Discussion of human and machine intelligence has brought experiment in this forest to forking paths in the way ahead. This was foreseen by Richard Feynman (1918–88), writing that:

Some people look at the activity of the brain in action and see that in many respects it surpasses the computer of today, and in many other respects the computer surpasses ourselves. This inspires people to design machines that can do more. What often happens is that an engineer makes up how the brain works in his opinion, and then designs a machine that behaves that way. This new machine may in fact work very well. But I must warn you that it does not tell us anything about how the brain actually works, nor is it necessary to ever really know that in order to make a computer very capable. It is not necessary to understand the way birds flap their wings and how the feathers are designed in order to make a flying machine. It is not necessary to understand the lever system in the legs of a cheetah, that is an animal that runs fast, in order to make an automobile with wheels that goes very fast. It is therefore not necessary to imitate the behaviour of nature in detail in order to engineer a device which can in many respects surpass nature’s abilities.15

There is an analogy, here, with how physics has come to terms with the extent of its unknowing, as previously described. Experimental and theoretical quantum science reached a boundary of understanding of the nature of reality and decided to duck the question and get on with calculating well-corroborated solutions of the Schrödinger equation, to learn what they tell us in specific cases. Continuing perplexity about the nature of the entanglement of quantum states has not held back advances in quantum computing, and this in turn has led to new ways of thinking about the issue. Continuing perplexity about the nature of gravity alongside the other fundamental forces, and about the relationships of concepts of mass, energy and time in the observed universe, has not impeded space travel. Likewise, perplexity about the nature of mind has not held back interplay of computer science and neuroscience. There is rich potential for exploring the interplay of machine intelligence with theory of mind, and with other still perplexing problems in mathematics, science and medicine. There is similar potential to explore its interplay with problems of social and environmental policy and practice. Machine intelligence has the potential to change life in almost every way, but it cannot be allowed just to happen. It requires the mixture of enterprise and innovation anchored in a common ground of values, principles and goals.

Artificial Intelligence

The seventy years of my songline have seen the emergence of artificial intelligence (AI). It has variously been described and referred to in every chapter of this book. I came across it from the time of its origins in the expert systems of the 1960s: in Dendral, Meta-Dendral and Heuristic Dendral at Stanford University and Massachusetts Institute of Technology (MIT);16 in the LISP language, a pioneering language of computational method designed by John McCarthy (1927–2011); in concerns about computer science and human reasoning (Joseph Weizenbaum (1923–2008));17 in Donald Michie’s (1923–2007) work that developed from his wartime connections with Alan Turing (1912–54) in the United Kingdom.18

Generic and domain-specific systems of medical decision logic and decision making came and went: Caduceus/Internist-1,19 Iliad,20 DxPlain,21 MYCIN.22 Methods of image classification–for example automated chromosome karyotyping and identification of abnormal histopathology slides–came and went, too. The power of the computer industry created and disseminated powerhouse systems such as Watson, which won the television quiz show Jeopardy! in 2011. And seemingly more generic and agile methods, such as underpin AlphaFold and ChatGPT, for example, are gaining traction.

Theory of machine learning has drawn on and evolved from the mathematics of Bayesian networks, neural networks, genetic algorithms and more. It is proving of increasingly high economic significance for the world of automation, robotics and autonomous systems. Demonstrations of its powerful applications are causing increasing concern about governance and impact on human society. The number of words said and written about AI systems is the latest explosion of the Information Age. Such patterns of verbal excess tend to reflect chaotic times and correlate inversely with what is known, experimentally. The problem is that the knowledge they draw on and express tends to be cloaked or hidden, often for reasons of commercial propriety. Mathematical methods are not patentable–if kept secret they convey no advantage to mathematicians or to those who depend on mathematics. AI methods are being pursued as protected intellectual property. Kept secret, they confer commercial advantage but do not advance the common ground of knowledge on which all depend, in the way that shared and co-developed mathematics discipline and mathematical methods do. This is a revolution where assessment of its implications and consequences (Zhou Enlai-like, about the French Revolution!) is ‘too early to decide’!23

In his recent televised discussion with Alan Yentob, the prize-winning novelist Kazuo Ishiguro spoke softly and clearly about these concerns.24 They talked about his novels in context of his life and times in Japan and England. Roughly one novel every five years–I like him for that and his explanation of why his creativity evolves in five-year epochs. The most recent novel, Klara and the Sun, explores an evolving (he says it is not far in the future) world of humans and artificial friends (AFs).25 Klara is Josie’s AF and Josie is ill and may not live. The book is Klara’s account. Her concern is whether she will evolve to become Josie and continue the sick girl’s life. Ishiguro was pensive.

Landmark Contributions

Many who have specialized in mathematics, science and engineering have reflected on how their different disciplines can connect with and illuminate the origins and fundamental nature of life and living systems. As we have seen, the term information has travelled widely through these connections, in the search for greater understanding of what life is, how it came into being and how it functions and evolves. These connections link theory and experiment with concepts such as symmetry, topology, calculus, order, communication, control, energy and computation.

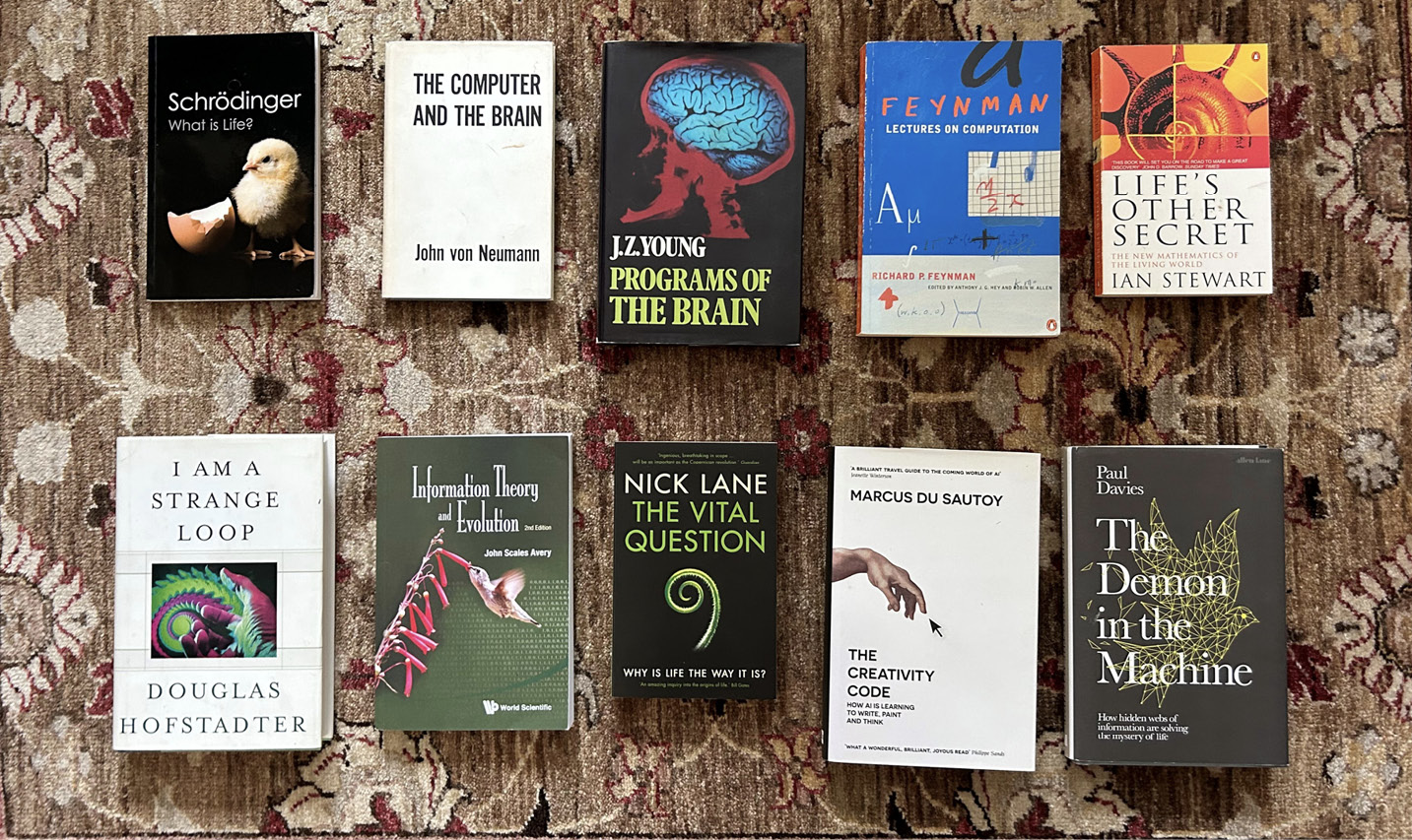

It seems fitting to celebrate here some of these pioneers, decade by decade over nearly eighty years. Some inukbooks that remind me of them every day are: What Is Life? by Schrödinger; The Computer and the Brain, by von Neumann; Programs of the Brain, by Young; Feynman Lectures on Computation, by Feynman; Life’s Other Secret, by Ian Stewart; I Am a Strange Loop, by Douglas Hofstadter; Information Theory and Evolution, by John Scales Avery; The Vital Question, by Lane; The Creative Code, by du Sautoy; and The Demon in the Machine, by Paul Davies.

In drawing together this selection–a synthesis of syntheses–I risk making an even greater than usual fool of myself as their individual contents are, in themselves, wide-ranging and well beyond my detailed knowledge, such is the range and pace of advance. I have collected these books around me and consulted them for inspiration, as I write. Here they are:

Fig. 6.1 The inukbooks that draw together a number of career-long contributions that have illuminated the ‘what is’ and ‘why is’ questions about life and information, discussed in this chapter. Photograph by David Ingram (2023), CC BY-NC.

The books track back over a century in exploring information as a fundamental concept in relation to living systems. This is a domain that Schrödinger brought to life in his series of landmark lectures published in 1944, entitled ‘What is Life?’.26 I then introduce the Silliman Lectures on The Computer and the Brain, which looked at the brain from the perspective of computer science, as a computational machine.27 These lectures were delivered by the mathematician von Neumann, the originator of the eponymous von Neumann architecture of the computer Central Processing Unit (CPU).

I move next to Programs of the Brain by Young, remembered for his treatise of the times on the life of mammals and research on nerve function.28 The book is based on his 1975–77 Gifford Lectures at the University of Aberdeen. I remember this tousled grey-haired figure, a legendary UCL personality, striding along Gower Street outside the medical school where I worked in my PhD days. His is the broadest ranging review, embracing philosophy of mind and revisiting the pioneering work of Sherrington, a hundred years ago, who was the first to characterize the integrative nature of the human nervous system.

Next in the selection is the Feynman Lectures on Computation, edited by Anthony Hey and first published in 1996, which is the best entry route I know to the science of computation.29 Following this, Stewart’s Life’s Other Secret stands out by bringing a mathematical perspective on the subject.30 I also visit the polymath Hofstadter and his book I Am a Strange Loop, which weaves Gödel numbers with cognitive psychology in an imaginative conjecture about the nature of conscious thought.31 I include next the book by Avery, Information Theory and Evolution.32 This is a good source of reference that collates a wide range of materials and provides more mathematical content than the other books in the selection. I then introduce another UCL life science colleague, Lane, and his The Vital Question, which sets biology, bioinformatics and bioenergetics side by side in context of his question.33

Coming back to mathematicians, du Sautoy wrote The Creativity Code, which reaches beyond computation and machine intelligence to machine creativity.34 And coming back to physics, Davies has brought things up to date in 2020 with The Demon in the Machine, opening the subject out into speculation about how the story will evolve into medicine of the future.35 He was a physics PhD student at UCL at the time I was doing my own PhD there, as he told me when we met very briefly, when I was collecting this inukbook directly from him, at a New Scientist Live event in London where I heard him speak.

1944–Erwin Schrödinger: What Is Life?

Schrödinger has an amazing and vivid biography. It extends through two World Wars and the turmoil between them, studying physics in Vienna in 1906, working in a succession of appointments in Austria, Germany, England and Ireland, succeeding or in parallel with the great names of Boltzmann, Einstein, Planck and Born. The intermissions for military service came first in 1910–11 (which he spent in what he describes as the beautiful old town of Krakow), and then from 1914, during subsequent service in Italy (which he describes as uneventful and giving plenty of time for study of Einstein’s 1916 paper on relativity theory, a subject which he struggled to understand). From 1933–36, he was a fellow of Magdalen College, Oxford, sponsored there by Frederick Lindemann (1886–1957), later Lord Cherwell and Winston Churchill’s science advisor. Around this time, he started to turn his mind to the connections of physics and chemistry with biology. Later, when settled in wartime years in Ireland, and working at Trinity College Dublin, he delivered a set of lectures on the question, ‘What is Life?’ In subsequent years, Trinity was a leading European centre in Health Informatics, under the computer scientist and my colleague, Jane Grimson, for whom I acted as a visiting examiner for some years. Jane was subsequently Vice-Provost of the College and a leader of the engineering profession across Europe. In the history of Trinity College, there is thus a close connection between the three dimensions of information–information and life science, information for health, and information technology. Schrödinger supplemented his lectures on life with further lectures on mind and matter, which he considered an even more exacting intellectual challenge!

The following excerpt is quoted at length from the Preface to What Is Life? to showcase his magnificent clarity of thought and style:

Let me use the word ‘pattern’ of an Organism in the sense in which the biologist calls it ‘the four-dimensional pattern’ meaning not only the structure and functioning of that Organism in the adult, or in any other particular stage, but the whole of its ontogenic development from the fertilized egg cell to the stage of maturity, when the organism begins to reproduce itself. Now, this whole four-dimensional pattern is known to be determined by the structure of that one cell, the fertilized egg. Moreover, we know that it is essentially determined by the structure of only a small part of that cell, its nucleus. This nucleus, in the ordinary ‘resting state’ of the cell, usually appears as a network of chromatin, distributed over the cell. But in the vitally important processes of cell division (mitosis and meiosis) it is seen to consist of a set of particles, usually fibre-shaped or rodlike, called the chromosomes […]36

To reconcile the high durability of the hereditary substance with its minute size, we had to evade the tendency to disorder by ‘inventing the molecule’, in fact, an unusually large molecule which has to be a masterpiece of highly differentiated order, safeguarded by the conjuring rod of quantum theory. The laws of chance are not invalidated by this ‘invention’, but their outcome is modified. The physicist is familiar with the fact that the classical laws of physics are modified by quantum theory, especially at low temperature. There are many instances of this. Life seems to be one of them, a particularly striking one. Life seems to be orderly and lawful behaviour of matter, not based exclusively on its tendency to go over from order to disorder but based partly on existing order that is kept up […]37

What is the characteristic feature of life? When is a piece of matter said to be alive? When it goes on ‘doing something’, moving, exchanging material with its environment, and so forth, and that for a much longer period than we would expect an inanimate piece of matter to ‘keep going’ under similar circumstances.38

He wrote of the chromosome as containing a ‘code-script’, the entire pattern of the individual’s future development and of its functioning in the mature state.

Schrödinger’s was, as he himself acknowledged, a bold foray into the domain of living systems. He argued from the outset that a living organism requires exact physical laws, otherwise it would be impossible for life to be sustained or for humans to be capable of orderly thought. He recognized the incompleteness of his analysis and the implication that greater understanding would reveal a need for new physics.39

The question he posed at the outset of his lectures was: ‘How can the events in space and time which take place within the spatial boundaries of a living organism be accounted for by physics and chemistry?’ He took the issue of brain organization into the subsequent lectures on Mind and Matter, in 1956.

Schrödinger reasoned from principles of statistical thermodynamics, the quantum physics of the atom and the chemistry of molecules, showing the need for new scientific insight into how orderly life succeeded in persisting and reproducing, given the composition, size and sensitivity of the materials from which it was made.

The science of genetics had evolved to that point through experiment: selective breeding, microscopic analysis and studies of mutations induced by X-rays. And from these, a picture of genes structured within chromosomes had emerged, with experimental methods to relate changes in the band patterns in images of the chromosomes with changes in the molecular structure of specific genes. From microscopy images revealing the pattern of the fertilized egg cell, to the patterns of inheritance in breeding experiments, to the effect of different doses of ionizing radiation on mutation of the gene as revealed in images of subsequent development of the organism, he brought together estimates of numbers of genes present, and their sizes, in terms of number of atoms they contained.

From the quantum theory and experimental science of molecular mutations, he reasoned about the challenge living systems overcome in persisting for many years and over generations, inherited and communicated through fertilized eggs. He reasoned, from physical principles, about the scale and composition of the genetic material of the cell and its persistence over time. He argued that the classical statistical physics of the preceding century could not account for such reliable persistence over time being generated from the amount of material present at such small scale. Reasoning then at the level of molecular chemistry, and using the explanation provided by quantum theory for the stability of chemical bonds and molecular structures, he went on to show this theory to also be deficient for explaining genetic variety of expression. Reflecting on X-ray crystallographers’ insights on material structure, he reasoned that a regular periodic crystal structure for the chromatin would not suffice to account for the observed patterns of scale, variety and persistence.

From the estimates of the size of the genes he reasoned that they must have the form of what he termed an ‘aperiodic solid molecule’ (as opposed to liquid or gas). This molecule was non-repeating, in the sense that every element would be capable of carrying information (his coding script), enabling the relatively small number of atoms and genes comprising the molecule to code for the growth and variety of structures and functions of the living organism, as evidenced by the development of the embryo from a single egg cell, as observed in life.

Here were the origins of a theory that made connections between information and living systems. Schrödinger also reviewed in depth the connections of classical with statistical thermodynamics. To recap from the sections above, in the former, the measurable physical quantity of entropy is calculated in linear proportion to heat energy flux and inversely in proportion to temperature. Boltzmann’s fundamental advance described this system in terms of the dynamic distributions of gas molecules and their natural evolution from orderly towards disorderly states. He invented a mathematical model to characterize this order, which he connected with entropy measurement. In this way, entropy is characterized by a logarithmic relation with Boltzmann-derived disorder. Since order and disorder are inversely related to one another (high disorder implies low order, and vice versa), it follows that order may be quantified as a negative entropy. This opens the door for an image of living systems that appear to defy the second law of thermodynamics, whereby entropy only ever increases. At issue was the question of how to reconcile the observed sustained order of living systems, from cell to embryo and living organism, with the classical physics of entropy as expressed in the second law of thermodynamics. In looking at the energy balance of living systems, the thermodynamic view expressed was that a living organism ‘feeds’ from order, and the negative entropy thus acquired balances the natural production of entropy in its everyday functions, thus preserving its living state of order. It was a subtle and contested argument, and he later adjusted it, in response to criticism.40
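For readers who find symbols helpful, the relations described in words above can be set down compactly. This is a sketch only: the symbol D for the measure of disorder follows Schrödinger's own usage, while the subscripted terms in the final balance are labels assumed here for illustration and are not taken from his text.

```latex
% Classical thermodynamics: entropy change in proportion to (reversible)
% heat flow and in inverse proportion to absolute temperature T.
\[ \mathrm{d}S = \frac{\delta Q_{\mathrm{rev}}}{T} \]

% Boltzmann's statistical form: k is Boltzmann's constant and
% D a quantitative measure of molecular disorder.
\[ S = k \log D \]

% If order is measured by 1/D, then order corresponds to negative entropy.
\[ -S = k \log \frac{1}{D} \]

% Assumed notation: an organism in a steady state offsets the entropy it
% produces internally by the (negative) entropy it exchanges with its surroundings.
\[ \Delta S_{\mathrm{produced}} + \Delta S_{\mathrm{exchanged}} = 0,
   \qquad \Delta S_{\mathrm{produced}} \ge 0
   \;\Longrightarrow\; \Delta S_{\mathrm{exchanged}} \le 0 \]
```

The last line is the sense in which the organism ‘feeds’ on order: the second law is not violated for organism and surroundings taken together, since the entropy exported by the organism appears in its environment.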

1956–John von Neumann: The Computer and the Brain

In this book, based on a manuscript prepared for the Yale University Silliman Lectures of 1956, we encounter the voice of the Hungarian mathematician and early pioneer of computer science and technology, von Neumann. He was unable to deliver the lectures and died of cancer in early 1957. His wife, Klara, completed the manuscript and added a wonderful Preface, connecting the book with her husband’s work on mathematics and later as an early pioneer of electronic computer architecture. He made many contributions in pure and applied mathematics through the era in which Kurt Gödel (1906–78) upset the apple cart of Principia Mathematica, and he approved of Gödel’s reasoning about its incompleteness. This was the era in which mathematics and formal logic found their way into the foundations of computer science. After wartime work on the Manhattan Project, he was a member of the team at Princeton University that produced the early prototype electronic calculator, called JONIAC. This work led him to study the brain and nervous system, looking for inspiration there for its design principles. He was clearly a towering figure in the optimistic postwar era of America–recognized in senior roles in the government of President Dwight Eisenhower.

As the title describes, the topic of the book is an analogy between brain and computer, seen from the perspective of computer science, as a computing machine. It is interesting in how it reveals the thinking that bootstrapped early machine architecture. It is a mindset focused on crafting technology to perform mathematics. This technology-inspired thread has been taken forward by Raymond Kurzweil in his How to Create a Mind, in 2012.41

Von Neumann’s book is quite short–just eighty-two pages–and in two halves. I read it again last night. The first is about machinery of numeric calculation. That is, about arithmetic and logic–the basic operations involved and how these have been enacted by different machines, starting from mechanical analogue computers, and moving on into the early world of electronic computers and hybrids of the two. He was clearly closely involved with the engineering, as he gives chapter and verse about kinds and numbers of components and the precision with which they worked and were coupled together in the machines. The picture is one of a selection from a toolbox of components, choosing and customizing them to perform calculations. He describes the requirements for arithmetic, memory and programming, and for honing these together. In the analogue case, he makes a connection with the Babbage engine-like world of differential gears used in car transmissions, showing their utility in combining and averaging inputs, and of rotating discs driven to integrate inputs, and how these were used as basic operations of the mechanical machine. He places himself in the middle, knowing what he needs as a mathematician and what the engineer can provide him with, as component methods, and marries the two.

Moving to electronic computers, he gives details of circuits of thermionic valves, rectifiers, capacitors, resistors and their component magnetic and electrical properties, size and speed of operation, and precision with which they worked. He sketches a hierarchy of devices for storing, processing and transporting data around the machine, considering which of these needed to operate rapidly on the critical path of the calculation, and which needed to operate more slowly in support, in the background of the calculation. The options ranged widely over acoustic delay lines, electromechanical storage devices and electronic components based on ferromagnetic and ferroelectric materials.

Von Neumann focuses on the fast-acting memory registers used for number crunching, showing the scale of logic operations required for the digital arithmetic that had to be performed on the numbers they contained. For example, he talks of a twelve decimal digit number system requiring 196-tube (thermionic valve) registers. These were huge, power hungry and heat-producing machines. The ENIAC at Los Alamos had twenty-two thousand such valves. He works through design considerations around data and program, starting from early ‘plug and play’ programming, where the program consisted of wires patched between electronic components. From there he moves on to stored programs and the greater sophistication of calculations they enabled.

The first part of the book is interesting in showing the creative engineering involved in matching capability of components with design of machine, to meet requirements of calculation. The second part of the book is interesting in a completely different way–it reveals how von Neumann, as computer architect, was deeply engrossed in the structure and understanding of the human nervous system. In creative engineering, it is common to work from a prototype model, treating this as a test bed in which to explore further necessary refinement. The final design may have borne limited resemblance to the prototype, but arriving at that design depended on going through the prototype stage. It cannot be reasoned into existence because its design is an art of the possible, and possibility is only explored experimentally, by making and doing things, working with models and improving or rejecting methods.

Neurology at that time was much focused on sensory mechanisms, the action potential through which information is transmitted and the pathways of connection and interaction within the brain and nervous system. Neuroscience and philosophy of mind are in a wholly different era today, compared with the time of von Neumann, as is the world of nanoscale semiconductor and optical technology compared with that of thermionic valves and acoustic delay lines.42 Functional brain imaging methods have been pivotal to the evolving science. Von Neumann touches lightly on the connections of mathematics, physics and chemistry with description of sensory mechanisms. His commentary is interesting in the eye he casts over the analogy of computer and brain:43

- The speed of the computer processing unit being 10⁴–10⁵ times faster;

- The natural component of the brain being smaller by the order of 10⁸–10⁹;

- The brain as having more numerous processing units, operating slower and in parallel, as compared with the computer operating with fewer units, faster and serially;

- The neuron as the ‘basic digital organ’ of the brain, which he characterizes and compares with the computer circuits, in terms of threshold of activation and time to stabilize (‘summation time’);

- In discussing the nature and location of memory, he describes the modern computer as needing 10⁵–10⁶ bits of memory;

- He suggests ‘genetic memory’ in chromosomes as a component of the brain’s memory;

- He suggests a parallel between analogue/digital, hybrid processes and genes connecting with enzyme processes;

- In considering logical structure and arithmetic function, he compares the propagation of error in digital arithmetic, requiring 10–12 decimal digits of precision of number representation to alleviate this acceptably, to the human brain, which he describes as doing mental arithmetic with just 2–3 decimal digits of precision. By comparison, he believes the brain achieves greater reliability in logical operations;

- He talks about messages in the brain communicated as periodic pulse-trains, conjecturing that statistical relationships between such time-series might also convey information–thinking there of ‘correlation-coefficients, and the like’.
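The point about propagation of error in long chains of digital arithmetic can be made concrete with a small experiment. The following is a minimal sketch, not von Neumann's own calculation: the function name, the repeated factor of 1.01 and the chain length of 1,000 steps are all assumptions chosen for illustration. It rounds to a fixed number of significant decimal digits after every multiplication and compares the results at three and at twelve digits of precision.

```python
from decimal import Context, Decimal


def chained_product(steps: int, digits: int) -> Decimal:
    """Multiply 1 by 1.01 `steps` times, rounding every intermediate
    result to `digits` significant decimal digits (illustrative only)."""
    ctx = Context(prec=digits)  # precision applied to each operation
    value = Decimal(1)
    factor = Decimal("1.01")
    for _ in range(steps):
        value = ctx.multiply(value, factor)  # result rounded to ctx.prec digits
    return value


def relative_error(approx: Decimal, reference: Decimal) -> float:
    """Relative deviation of `approx` from `reference`."""
    return float(abs((approx - reference) / reference))


# A 50-digit run serves as a stand-in for the exact answer (an assumption:
# it is itself rounded, but far more finely than the cases compared).
reference = chained_product(1000, 50)

for digits in (3, 12):
    approx = chained_product(1000, digits)
    print(digits, approx, relative_error(approx, reference))
```

Each rounding is harmless on its own; it is the depth of the chain that matters. At three digits the small errors compound into a visibly wrong answer, whereas at twelve digits they remain negligible over the same thousand steps, which is the kind of consideration that lies behind the 10–12 digit figure quoted above.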

In his summary,44 he talks about the language of the brain and how this differs from the language of the machine. He talks of the nervous system being based on two types of communication–what he calls orders (logical ones) and numbers (arithmetic ones). He suggests that the variety of spoken languages might indicate that there is nothing absolute and necessary about them, and that logic and mathematics are themselves historical and accidental forms of human expression, which might exist in other forms than those we are accustomed to. He uses the example of visual perception and compares what the brain achieves in three synapses of logical processing along the optic nerve, and subsequent low precision arithmetic in the central nervous system, with a machine built in an analogous manner, which would, he says, clearly fail to perform at all. His conclusion is that ‘logics and mathematics in the central nervous system, when viewed as languages, must structurally be essentially different from those languages to which our common experience refers’.

It would be so interesting to have him sitting here, now, reviewing how technology, computer science and machine architecture, neuroscience and machine intelligence have evolved in the sixty years that followed the sadly so shortened sixty years of his own life.45 It would be interesting to have Noam Chomsky with us, as well, to add his thoughts on the language of the brain. It would be interesting to see how the two human personalities would have gelled. Von Neumann died a year after a diagnosis of prostate cancer that quickly spread to bone and brain. What he pioneered was instrumental to the ability today to prolong and save the lives of patients who followed him.

1978–John Zachary Young: Programs of the Brain

The UCL anatomist Young is remembered for his major work, The Life of Mammals, a book I read when expanding my learning from mathematics and physics into biology, medicine and computer science, in 1971. This later book is based on his Gifford Lectures of 1975–77 at the University of Aberdeen.46 He was for twenty-seven years the Head of the Department of Anatomy–quite a stint but in a different era when academic leaders answered to themselves, by and large, leading and managing royally. A bit like hospital consultants! Times have changed in academia, and in medicine, too–leaders cannot, should not and would not wish to persist that long. They answer more widely and lose energy through the exigencies of being royally managed, more than managing royally. Creative souls keep their heads down and away from management pressures, if they wish to survive the time it takes to make an enduring difference in their field of endeavour, the likes of which Young brilliantly exemplified.

Notwithstanding the advance of anatomical neuroscience since its publication in the late 1970s, unleashed by new experimental methods such as nuclear magnetic resonance (NMR) imaging, this book is still spellbinding in its breadth and majesty. I speed-read it again, last night, getting ready to write about it today. The chapter titles summarize the scope embraced and the author’s immense knowledge and wisdom:

1. What’s in a brain?; 2. Programs of the brain; 3. Living and choosing; 4. Growing, repairing, and ageing; 5. Beginning; 6. Evolving; 7. Controlling, coding and communicating; 8. Repeating; 9. Unfolding; 10. Learning, remembering, and forgetting; 11. Touching, feeling, and hurting; 12. Seeing; 13. Needing, nourishing, and valuing; 14. Loving and caring; 15. Fearing, hating and fighting; 16. Hearing, speaking, and writing; 17. Knowing and thinking; 18. Sleeping, dreaming, and consciousness; 19. Helping, commanding, and obeying; 20. Enjoying, playing, and creating; 21. Believing and worshipping; 22. Concluding and continuing.